Launching today

Weavable

Give every AI agent persistent work context

133 followers

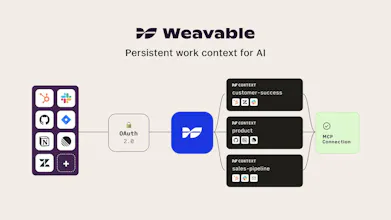

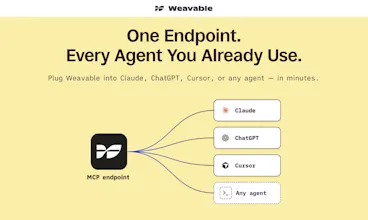

Weavable gives AI agents persistent, live work context from the tools your business already runs on. Through a single MCP endpoint, it turns scattered updates, relationships, and system changes into a usable context layer so agents can reason more accurately without constantly re-ingesting data. The result is lower token usage, better outputs, and more reliable agent behavior across real business workflows.

Hey Product Hunt 👋 I'm Abesh, co-founder of That Works, and today we are launching Weavable.

The Problem

Teams building agentic workflows are sitting on a goldmine of work context: decisions, relationships, pipeline data, and support history spread across every tool they use. Getting that context into agents reliably is still harder than it should be.

Most approaches follow one of two flawed paths:

❌ Direct app connections - raw API and MCP responses flood the model, token costs balloon, and the agent burns its context window figuring out what matters instead of acting on it.

❌ Static knowledge bases or RAG - context goes stale the moment it's captured. Agents work from the last snapshot and confidently get things wrong.

So we built Weavable.

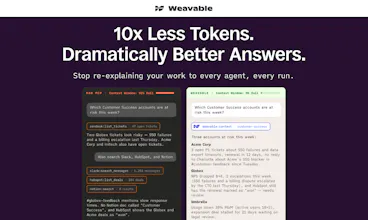

The difference is measurable: one-tenth the tokens compared to direct app connections, with outputs preferred 85% of the time in LLM-as-a-judge evals.

How Weavable is Different 🔌

Weavable is context infrastructure for AI agents. Instead of dumping raw data at the model or freezing a snapshot, Weavable maintains a continuously updating changelog across your actual work tools, so the knowledge graph your agents reason from is always mapped, reconciled, and up to date.

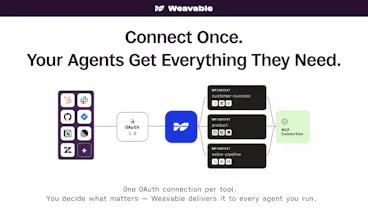

🔷 Connect your tools: one OAuth flow covers HubSpot, Slack, Zendesk, Jira, GitHub, and email. Scoped access, no broad permissions.

🔷 Define shared contexts: customer health might live across your HubSpot pipeline, Zendesk queue, and a Slack channel. Weavable pulls that together into a single context your whole team's agents reason from. No per-agent app connections, no duplicated permissions, no visibility gaps.

🔷 Plug it in: one MCP endpoint into Claude, Cursor, n8n, or any client you're running. Live in a few minutes.

Who is this for?

If you're building or operating agentic workflows on top of real work data, and you're tired of silent failures, token blowout, and context that's always slightly wrong - Weavable is built for you.

🚀 Get started today

Start free for 30 days at weavable.ai: full access, no card required.

— Abesh & Varun

Nice! I especially like the activity graph/changelog approach because it treats context as something dynamic.

Curious: how do you actually reduce token usage by 90%?

@grantmac_ Thanks! Indeed, nothing about data is ever static!

On the tokens: pulling raw data into the context window costs you twice. Once on ingestion, then again on reasoning, while the LLM connects records, sorts by recency, and figures out what matters. Weavable's graph already knows the relationships and what changed when. The agent queries for the specific signals it needs, and the model only reasons over those.

The 90% is what we see on realistic workflows like pre-meeting briefs, renewal analysis, pipeline summaries, compared to the same thing built on raw MCP calls.

@varunn very cool guys!

This is a really interesting point of view here. The activity-graph approach makes sense — context should reflect what's happening, not just what was recorded.

One question from our experience building Faindo: we connect to multiple AI models (ChatGPT, Perplexity, Gemini) and one challenge we keep hitting is that each model interprets the same context differently depending on how it was trained. Does Weavable normalize context before it hits the MCP endpoint, or does it stay model-agnostic and let the agent handle interpretation?

Congrats on the launch, following the progress closely.

@nerijusrimdzius Thanks, and a great question!

Weavable structures context before it hits the MCP endpoint. We rank it, denoise it, and resolve the connections that carry the most signal, so the downstream model gets a high-quality, ready-to-reason-over view rather than raw records to make sense of.

One thing we've noticed: because of how we construct the context, models in the same class tend to reason about it in similar ways. The structure is unambiguous enough that interpretation converges. Different classes of model still unlock different capabilities on top of that, but the floor moves up everywhere, and the variance within a class drops noticeably.

Curious where you've seen the biggest gaps across the three you're running at Faindo. That's exactly the kind of cross-model signal we want to be informed by.

DayOne

Yes! I've been trying to solve this problem for months with various (often questionable) hacks. Love it.

One thing I’ve been thinking about a lot with agentic systems is context governance.

Most teams have hugely different sensitivity levels across their data: customer conversations, board discussions, HR issues, commercial terms, etc. How does Weavable handle permissions and context boundaries so agents only reason from the information that specific users or teams should actually be able to see?

@iamtherealcmk Thanks Conor! Context governance is the right question to be asking as more sensitive work moves through agentic systems.

Two layers to how we handle it.

First, every connection to a source app uses OAuth and is read-only and tightly scoped. Weavable only sees what your OAuth scope permits.

Second, agents don't connect to your data sources directly. They connect to contexts you've defined in Weavable: curated views with explicit data scopes. This set of HubSpot pipelines, those Slack channels, this Notion space, scoped to the user or team that should see it. Board discussions, HR, commercial terms each live in their own context with their own access rules. The governance boundary sits at the context layer, where you can model the actual sensitivity structure of your team, not at the data source where it's brittle and easy to overshare.

Every query against a context is logged too: which agent, which user, which signals were retrieved. So you get an audit trail that matches the governance model.
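To make the two layers concrete, here is a minimal sketch of a context-level boundary with an audit trail. It is not Weavable's implementation: the `Context` and `ContextGateway` classes, the scope strings, and the team-based access rule are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Context:
    """A curated view: explicit data scopes plus the teams allowed to query it."""
    name: str
    scopes: set[str]          # hypothetical scope strings, e.g. "hubspot:renewals"
    allowed_teams: set[str]


@dataclass
class ContextGateway:
    """Agents query contexts here and never touch the data sources directly."""
    contexts: dict[str, Context]
    signals_by_scope: dict[str, list[str]]
    audit_log: list[dict] = field(default_factory=list)

    def query(self, context_name: str, agent: str, user: str, team: str) -> list[str]:
        ctx = self.contexts[context_name]
        # The governance check happens at the context, not at the source app.
        if team not in ctx.allowed_teams:
            raise PermissionError(f"{team!r} may not query context {context_name!r}")
        # Only signals from scopes that belong to this context are returned.
        signals = [
            signal
            for scope in sorted(ctx.scopes)
            for signal in self.signals_by_scope.get(scope, [])
        ]
        # Record which agent, which user, and which signals were retrieved.
        self.audit_log.append(
            {"context": context_name, "agent": agent, "user": user, "signals": signals}
        )
        return signals
```

In this sketch an HR context and a customer-health context can draw on the same Slack workspace while exposing different channels to different teams, which is the kind of separation described above.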

What's the shape of the hacks you've been running? 😅

This is very cool guys, congratulations. How does Weavable do in terms of speed at a practical level compared to connecting, say, Claude Code to all the individual data sources?

We've found that connecting to the data sources directly is slow as well as token heavy. Claude has to pull some data, build a context, pull more data, and from that figure out what else it needs. It goes on for a while, especially if some of the sources are reached through MCP. Does this help with that as well?

@seyed_danesh Thanks Seyed! Yes, this is one of the (many) things that Weavable is designed to fix.

You're describing the iterative-retrieval loop: pull, reason, pull more, reason again. Each round trip costs latency and tokens, and with direct MCPs across multiple sources it compounds fast.

Weavable does that work upstream and continuously. By the time the agent queries, the connections are resolved, the signals are ranked, and the change history is already in the graph. The agent typically gets what it needs in one query against a context, not four against four data sources. Fewer round trips, lower latency, and roughly a tenth of the tokens.
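The contrast between the two access patterns can be sketched with toy data. Everything here is an assumption made for illustration: the records are invented, `rough_tokens` is a whitespace word count rather than a real tokenizer, and the numbers it produces are not a benchmark of Weavable or of any MCP server.

```python
# Toy records per source, keyed by the account they mention (invented data).
SOURCES = {
    "crm": {"acme": "renewal stage moved to at-risk", "globex": "deal closed won last week"},
    "support": {"acme": "two urgent tickets opened", "initech": "ticket resolved"},
    "chat": {"acme": "champion announced departure", "globex": "kickoff scheduled"},
    "issues": {"initech": "bug fix shipped", "globex": "feature request logged"},
}


def rough_tokens(text: str) -> int:
    """Whitespace word count as a crude stand-in for a real tokenizer."""
    return len(text.split())


def iterative_retrieval(sources, entity):
    """Pull every source in turn and sift the raw records at query time."""
    round_trips, tokens, found = 0, 0, []
    for name in sorted(sources):
        round_trips += 1                      # one call per data source
        for owner, text in sources[name].items():
            tokens += rough_tokens(text)      # every raw record enters the window
            if owner == entity:
                found.append(text)
    return round_trips, tokens, found


def build_context(sources):
    """Upstream step: group signals by entity before any agent asks."""
    context = {}
    for name in sorted(sources):
        for owner, text in sources[name].items():
            context.setdefault(owner, []).append(text)
    return context


def context_query(context, entity):
    """One call that returns only the signals for the requested entity."""
    found = context.get(entity, [])
    return 1, sum(rough_tokens(text) for text in found), found
```

Calling `iterative_retrieval(SOURCES, "acme")` and `context_query(build_context(SOURCES), "acme")` returns the same signals, but the first makes four round trips and counts every record it pulled, while the second makes one and counts only the matching signals.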

In our testing, the responses have been of better quality, and the downstream model/agent has also become better at adhering to instructions!

Jumping in as the other maker.

Here’s the bet underneath everything we built: work isn’t just documents or records. It’s activity. The things people and agents do over time. A renewal slips because three signals lined up across CRM, support, and Slack that nobody connected. A deal closes because of a conversation in a thread, not a field.

The record is the residue. The work is what moved.

Most AI context tools either flatten all of that into a snapshot, or stitch together a handful of MCPs that make endless calls against flat records, pollute the context window, and still don’t know what changed or why. We thought both were wrong.

So we built Weavable on a deterministic engine that tracks how information changes, builds a changelog of every meaningful update, and stitches it into an activity graph. That graph is what your agent queries through the MCP endpoint. Not a summary, not a vector blob. A structured, time-aware picture of what’s actually happening. And because your agent can query for the specific signals it needs, it doesn’t ingest an entire workspace to find them. Less context window, less cost, sharper answers.
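As a rough illustration of the changelog-plus-graph idea, the sketch below diffs each new snapshot of a record against the last one seen, logs only the fields that changed, and lets a caller ask what changed around an entity since a given time. It is a simplified guess at the shape of such a system, not Weavable's engine: `ActivityGraph`, `Change`, `observe`, `link`, and `changes_since` are hypothetical names, timestamps are plain integers, and field removals are ignored.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    at: int          # logical timestamp; a real system would use wall-clock time
    source: str
    entity: str
    field: str
    old: object
    new: object


class ActivityGraph:
    def __init__(self):
        self._state = {}                    # (source, entity) -> last seen fields
        self._changes = defaultdict(list)   # entity -> changelog entries
        self._links = defaultdict(set)      # entity -> directly related entities

    def observe(self, at, source, entity, fields):
        """Diff a fresh snapshot against the previous one and log what changed."""
        previous = self._state.get((source, entity), {})
        changes = [
            Change(at, source, entity, name, previous.get(name), value)
            for name, value in sorted(fields.items())
            if previous.get(name) != value
        ]
        self._state[(source, entity)] = dict(fields)
        self._changes[entity].extend(changes)
        return changes

    def link(self, a, b):
        """Record an undirected relationship between two entities."""
        self._links[a].add(b)
        self._links[b].add(a)

    def changes_since(self, entity, since):
        """Changes to an entity and its direct neighbours at or after `since`."""
        related = {entity} | self._links[entity]
        hits = [c for e in related for c in self._changes[e] if c.at >= since]
        return sorted(hits, key=lambda c: (c.at, c.entity, c.field))


graph = ActivityGraph()
graph.observe(1, "crm", "acme", {"stage": "negotiation", "renewal_risk": "low"})
graph.observe(2, "support", "ticket-42", {"status": "open", "priority": "urgent"})
graph.link("acme", "ticket-42")
graph.observe(3, "crm", "acme", {"stage": "negotiation", "renewal_risk": "high"})
recent = graph.changes_since("acme", since=2)
```

Here `recent` holds the two fields set on the linked ticket plus the `renewal_risk` change on the account, and nothing for the unchanged `stage` field. The same inputs always produce the same changelog, which is the sense in which this sketch is deterministic.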

Would love to hear from anyone who’s tried to solve this differently. We think the activity-graph approach is the right primitive, but we’re early enough that we want to be wrong out loud if we are.

Congrats on the launch. The activity graph / changelog framing is strong.

How do you decide which context should become a reusable workflow signal versus just being retrieved for one agent query?