We just launched cheating detection in AI screening interviews

Remote hiring has a trust problem.

When candidates take automated interviews, recruiters always wonder:

Is the candidate actually present?

Are they reading answers from another screen?

Is someone else helping off-camera?

Did the video “drop” conveniently during key answers?

Until now, recruiters had no reliable way to verify any of this.

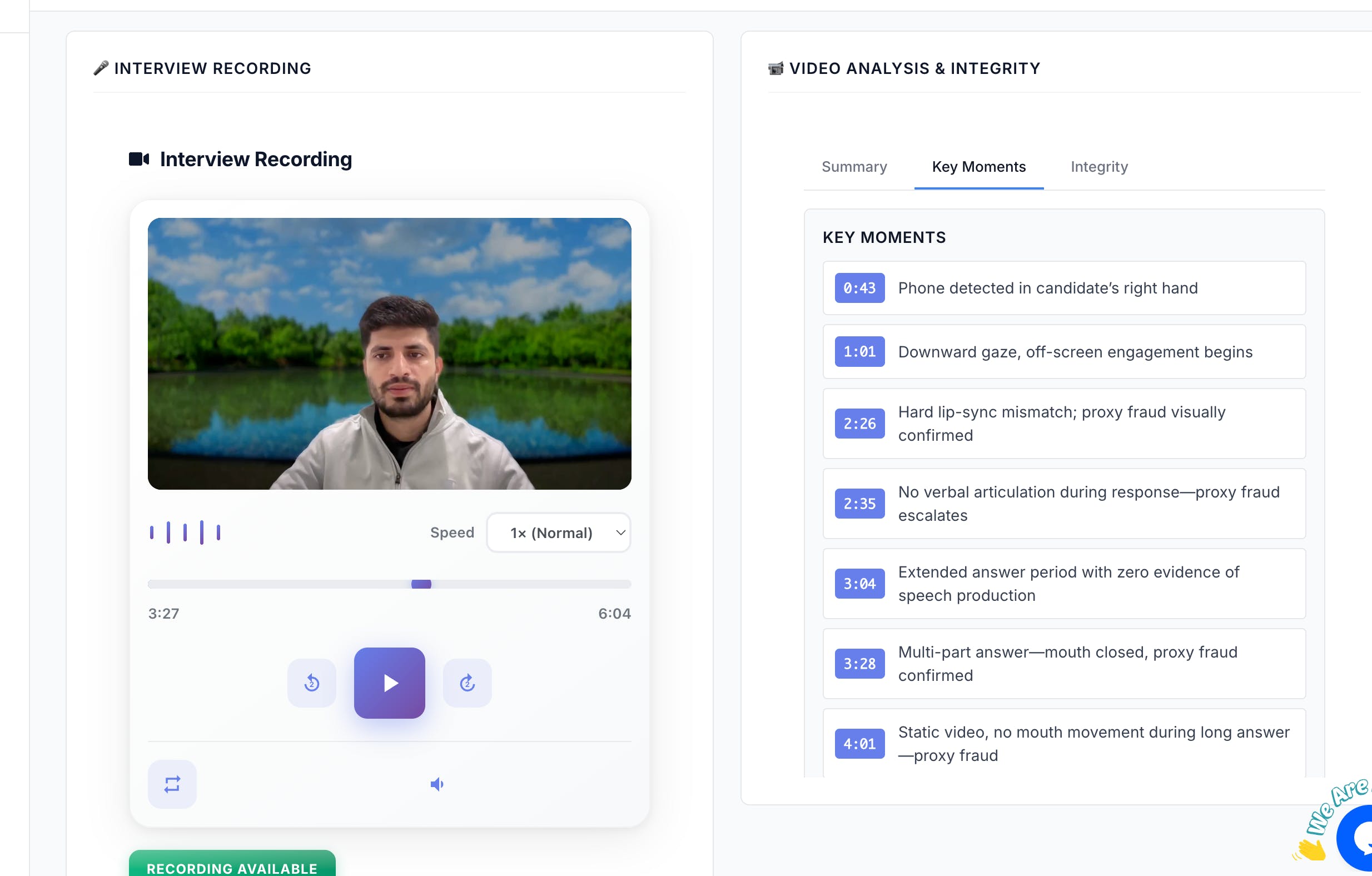

🎯 What we launched today

InterviewFlowAI now detects integrity issues during screening interviews using video, audio, and behavioral analysis.

Our new cheating detection system analyzes:

👀 Eye movement patterns (screen reading vs natural recall)

🙂 Facial expressions & engagement signals

🎙 Speech activity detection (is the candidate actually speaking?)

📹 Video blackouts during active answers

🔄 Audio–video mismatch (speech without visual confirmation)

⚠️ High-risk moments flagged with severity levels and timestamps

Instead of just recording interviews, we now surface key integrity moments automatically, so recruiters can review only what matters.
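To make the idea of "integrity moments" concrete, here is a minimal sketch of how blackouts and audio-video mismatches could be turned into timestamped, severity-ranked flags. It is an illustration only, not the production pipeline: the per-second signals (`speech`, `face_visible`, `frame_dark`), the flag names, and the severity thresholds are all assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Sample:
    t: float            # seconds from interview start
    speech: bool        # hypothetical voice-activity flag
    face_visible: bool  # hypothetical face-detection flag
    frame_dark: bool    # hypothetical "video blacked out" flag


@dataclass
class Flag:
    start: float
    end: float
    kind: str
    severity: str


def severity_for(duration: float) -> str:
    # Hypothetical thresholds: longer incidents rank higher.
    if duration >= 5.0:
        return "high"
    if duration >= 2.0:
        return "medium"
    return "low"


def classify(s: Sample) -> Optional[str]:
    # Only moments where the candidate is speaking are of interest here.
    if not s.speech:
        return None
    if s.frame_dark:
        return "video_blackout_during_answer"
    if not s.face_visible:
        return "speech_without_face"
    return None


def flag_moments(samples: List[Sample], step: float = 1.0) -> List[Flag]:
    """Merge consecutive samples of the same kind into one flagged interval."""
    flags: List[Flag] = []
    current_kind: Optional[str] = None
    start = 0.0
    last_t = 0.0
    for s in samples:
        kind = classify(s)
        if kind != current_kind:
            if current_kind is not None:
                end = last_t + step
                flags.append(Flag(start, end, current_kind, severity_for(end - start)))
            current_kind = kind
            start = s.t
        last_t = s.t
    # Close an interval that runs to the end of the recording.
    if current_kind is not None:
        end = last_t + step
        flags.append(Flag(start, end, current_kind, severity_for(end - start)))
    return flags
```

Merging consecutive samples into intervals is what lets a reviewer jump to a handful of moments instead of scrubbing through the whole recording.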

🧠 How it works (high level)

Establishes a clean behavioral baseline at the start of the interview

Continuously monitors eye movement, facial cues, video feed, and speech

Detects anomalies against that baseline

Marks suspicious moments on a timeline with exact timestamps

Generates an integrity report alongside the interview score
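The baseline-then-anomaly steps above can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not the actual model: it assumes a single hypothetical per-second feature (for example, the fraction of time the gaze is off-screen), a 30-second baseline window, and a plain z-score threshold.

```python
from statistics import mean, stdev
from typing import List, Tuple


def find_anomalies(
    series: List[Tuple[float, float]],
    baseline_seconds: float = 30.0,  # hypothetical baseline window
    z_threshold: float = 3.0,        # hypothetical sensitivity
) -> List[Tuple[float, float]]:
    """Return (timestamp, z_score) for samples that deviate from the baseline.

    `series` is a list of (timestamp_seconds, feature_value) pairs.
    """
    baseline = [v for t, v in series if t < baseline_seconds]
    if len(baseline) < 2:
        raise ValueError("need at least two baseline samples")

    mu = mean(baseline)
    # Guard against a perfectly flat baseline (zero variance).
    sigma = max(stdev(baseline), 1e-9)

    anomalies = []
    for t, v in series:
        if t < baseline_seconds:
            continue  # the baseline window itself is not scored
        z = (v - mu) / sigma
        if abs(z) >= z_threshold:
            anomalies.append((t, round(z, 2)))
    return anomalies
```

Scoring each candidate against their own baseline, rather than a global norm, is one way to avoid penalizing people who naturally look away while thinking.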

👀 Why this matters

AI made screening faster.

But it also made cheating easier.

We believe the future of hiring must be:

Fast. Fair. Verifiable.

This update is a big step toward restoring trust in remote hiring.

Would love feedback from recruiters, founders, and hiring teams.

Happy to answer questions in the comments 🙌

— Mukul

Founder, InterviewFlowAI
