Launching today

Oriane

The perception layer for Marketers and their AIs

259 followers

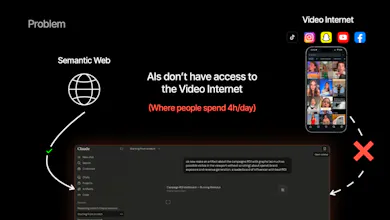

Video accounts for 91% of internet bandwidth, and your AI does NOT watch it... Oriane watches millions of social videos a day and turns what's on screen, in audio, and in captions into a structured intelligence layer that teams and their AI apps can use to find niche creators, spot content trends, identify viral hooks, and more!

Free Options

Oriane

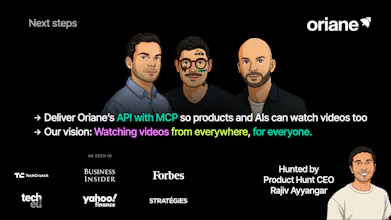

Huge thanks to @rajiv_ayyangar for hunting us 🙏

Hey Product Hunt 👋

Julien here, co-founder of Oriane (with Yuri and Thibaut).

Quick story. Two years ago, Yuri was running product at Jellysmack, working with creators like MrBeast. They kept asking him: "What's my actual presence on TikTok? Where are my clips being remixed?" The data simply didn't exist.

I was leading growth at DRESSX with clients like Lacoste, Adidas, Tommy Hilfiger... Same problem. It's still the same at LVMH today, where brand teams rely on social listening tools like Brandwatch or Meltwater, which treat video like text. Captions and metadata aren't the content anymore.

So we built the perception layer. Oriane watches millions of TikToks, Reels, and Shorts a day, and turns what's on screen, in audio, and in captions into structured intelligence. We're the eyes and ears of the AI stack.

Brain → LLMs.

Eyes & ears → Oriane.

A few things about us:

🍒 Thibaut, our CTO, took our cost to vectorize 100K videos from $60K to $60. That's a 1,000x reduction. It's why this is even a viable category.

🍒 Customers include McCann, LVMH, Woo, Dior, Disney, Pierre Fabre, Estée Lauder, and more.

What I'd genuinely love feedback on:

🍒 If you work at a brand or agency, what's your current workflow for tracking video mentions, and what does it cost you?

🍒 What would make Oriane essential for your team, not just useful?

🍒 Is "the eyes and ears of the AI stack" the framing that lands for you, or is something else clearer?

Special offer for the PH community: 1 month free on Pro plan ($499 value) with code HUNTER-499. Good through May 30.

Yuri, Thibaut and I are here all day.

Bring your toughest questions 🙏

@rajiv_ayyangar @julien_rosilio How do you handle the freshness problem? A trend on TikTok can peak and die in 36 hours — what's the lag between a video going up and being searchable in Oriane?

Oriane

@romejerome Hello! Great question!

We currently have a 24h "freshness" window, meaning that a video is indexed and searchable on Oriane roughly 24 hours after publication.

We're working towards a near-real-time experience!

VentureBeat Insight

Traditional scrapers and social listening tools hunt for hashtags or captions, but they are deaf and blind to what actually happens inside the frame. This platform watches and transcribes social video content, surfacing brand mentions and visual trends that never make it into a text-based description.

It uncovers the specific micro-influencers who are already organically discussing a niche, rather than just the high-priced creators with the right keywords in their bios.

The real utility lies in the data structure. You can analyze video content to deconstruct visual hooks and audio patterns and determine exactly why specific segments engage viewers. Feeding this granular output into an LLM allows for the creation of trend reports that feel like they came from a high-priced agency, but in a fraction of the time.

While the speed is impressive, users should anticipate a learning curve. Navigating the sheer volume of "visual mentions" requires a disciplined approach to filtering, or you risk drowning in data. It isn't a "set it and forget it" solution; it demands an active strategist to turn these observations into a coherent content plan. That said, the team behind Oriane is ready to assist, and the website includes tons of case studies and use cases to inspire you. In summary, it offers a depth of category intelligence that other tools simply cannot match.

Oriane

Oriane

@therealsjr I agree with @julien_rosilio, that's a sharp analysis.

I would add that we are also actively working on the rigorous filtering approach you mentioned. The tool can run extremely powerful searches, but the learning curve exists. That’s exactly why we are building a more AI-driven approach to search.

The direction we are heading in is to better frame and refine queries directly in natural language for equally accurate data extraction, but without any upfront learning curve. The promise is simple: instant access to data, without friction, filters, or complex prompt engineering.

Oriane

@joannarojas98 Absolutely!

In fact, this is already something we’re capable of doing today. You can download raw data with semantic fields, as well as enriched or AI-extracted fields. From there, the analysis layer takes over. We’re integrated with some of the leading LLMs on the market to power that. Check out our prompt library.

Feel free to follow the page and Yuri, Julien, and myself on LinkedIn. We regularly share use-cases showing how to leverage exactly the kind of intent-based analysis you’re describing. Today, you’re essentially 1 search + 1 extract away from generating your report.

And very soon it will be just 1 natural language prompt away, thanks to our MCP integration 🚀

This sounds interesting for creator research. Which platforms are you pulling videos from right now, like TikTok and Reels or more YouTube Shorts?

Oriane

Very interesting. On the technical side, how do you manage to have such low latency with so many videos and what I imagine is a vector search? Did you use Pinecone or Qdrant?

Oriane

@adnanp You’re right, there is vectorization in our secret sauce 🧪

But in “secret sauce” there is also “secret”, which refers to the broader set of technologies we use that play a major role in making search feel immediate. That’s really what we are aiming for: response times that match modern web navigation standards.

You mentioned Pinecone and Qdrant, which are indeed two common candidates for vector storage. We have experimented with several of these technologies. Qdrant, for instance, is a very solid vector database, but it is somewhat limited for the very complex real-time search capabilities we are after, and it becomes quite expensive at large scale.

So the latency you’re seeing is less about a single tool, and more about the full stack design optimized specifically for speed and scale.

Art House

Just gave it a spin on the free version, looks pretty solid. How do you manage to vectorize so many videos without running out of money?

Oriane

@justfred_ar We handle the vectorization pipeline and storage end to end, which helps us keep costs under control without relying on external services.

It does add a fair amount of technical complexity upfront, but that investment stabilizes over time (unlike immediate usage-based costs that scale with volume).

So the real “secret” is deep control over both infrastructure costs and Oriane’s overall system architecture.

Zyphe

Hi Yuri / Thibaut, what other dimensions, on top of AI vision and audio transcripts, do you plan to release? Music?

Oriane