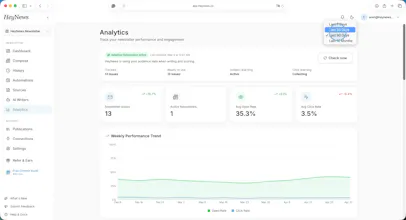

Launched this week

HeyNews

Your newsletter, your voice, ready to publish in 5 minutes

147 followers

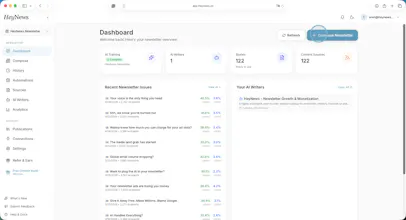

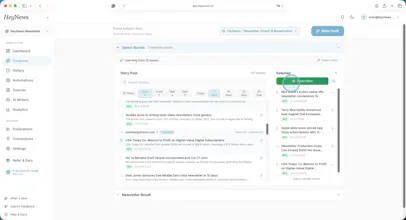

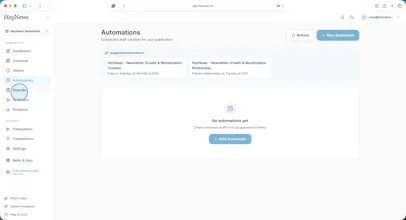

HeyNews learns from your newsletter archive, monitors your sources, and generates publish-ready drafts in your voice. Native Beehiiv & Kit; archive imports from Substack or any newsletter with a public archive. 14-day trial, plans from $39/mo. PH50 → 50% off 12 months.

HeyNews

Thrilled to see HeyNews live! To answer Cagri's question from a content marketing perspective: the most 'broken' part of current workflows is usually the friction between research and writing. You often lose your 'voice' while juggling 20 different tabs.

I’m particularly excited about how the platform avoids AI 'hallucinations' by sticking to trusted RSS and social feeds. It’s great to see a tool that keeps the editor in full control!

@cagrisarigoz Voice training actually solving the "sounds like AI" problem is the most interesting part here. Every other tool gets the curation right but fumbles the draft. Curious how it handles niche technical newsletters where the tone is very specific — does it pick up on jargon and formatting quirks too?

HeyNews

@vishal7017 Yes, both. The AI Writers are trained on whatever's in the archive, so jargon and formatting quirks come through with it. If your past issues use a specific term for something, always open with a one-line summary, or have a particular way of citing sources, the AI Writers learn that as part of the format. Nothing needs to be flagged or tagged; it just shows up in the training data.

Niche technical newsletters are actually one of the cases where the per-format AI Writer setup helps most, because the editorial taste (what counts as a relevant story for this audience) is usually more specific than for general-interest newsletters. The scoring side benefits more there than the voice side, in our experience.

The best way to find out is to connect your newsletter and generate one draft. The voice match either reads as yours or it doesn't, and a draft is faster to judge than a description.

@cagrisarigoz Is there an integration with Mailchimp?

HeyNews

@natalia_iankovych not yet, but native integration is on the roadmap. In the meantime, you can connect your public newsletter archive or upload a CSV of past issues so HeyNews learns your voice. Happy to ping you when the native integration ships.

The "step 3 sounds like AI" failure mode is the real wall for any voice-as-output product — generic LLMs produce competent-but-anonymous prose, and once a reader has caught that smell they can't unsmell it. Training off the archive solves a different problem than prompt-engineering does: it makes the model write like a specific person rather than like "a knowledgeable newsletter writer in general," which is the difference between something a reader subscribes to and something they unsubscribe from.

I hit a parallel problem on the audio side running ModeLoop, a small podcast on financial modeling. When I tried AI-generated show notes early on, listeners called them out almost immediately — not because they were wrong but because they didn't sound like me. Eventually I stopped using them. Curious how you handle the cold-start problem here — how many archive issues does HeyNews need before the voice match feels native to a reader who knows the publication well?

HeyNews

@samir_asadov "competent-but-anonymous" is the right phrase for it. And the ModeLoop story is the data point most operators end up learning from: readers (or listeners) catch the smell faster than the creator does, because they're seeing the publication as a whole and the AI prose stands out as a deviation from a pattern they've already internalized.

Which is why the cold-start framing matters. The floor for "doesn't sound generic to anyone" is around five issues per format. But the bar you're pointing at, "feels native to a reader who knows the publication well," is higher than the floor, and depends more on what makes your publication recognizable.

The more your voice depends on subtle moves (cadence, atypical sentence shapes, a particular kind of aside), the more issues the model needs to capture the pattern instead of averaging it out. Operators with distinctive voices usually need closer to ten or more issues per format before drafts pass the long-time reader test; operators with more conventional prose need fewer.

Practical move when you're working with a smaller archive: have someone who reads your work closely (not you, you can't unsee your own draft) read the first two or three drafts and flag what feels off. That kind of feedback compresses the smell-test curve faster than iterating from your own seat.

Congrats on the launch — the “review-before-send only” choice feels important. For newsletters, the hardest part isn’t just generating words in a familiar style; it’s preserving editorial judgment when a story should *not* sound like the last 20 issues.

Curious how you handle deliberate voice shifts: e.g. a more urgent issue, a more personal note, or a sponsor-heavy edition where the usual cadence needs to bend without becoming generic?

HeyNews

@jim_jeffers Jim, you've named the actual editorial judgment edge, and the honest answer is the AI Writer won't solve it. That's a design choice.

For your three cases concretely:

Urgency: the system handles the baseline; the urgent-issue restructuring is operator work. We've thought about urgency-signaling on the source-scoring side (story age, cross-source overlap, breaking-news patterns), but right now the operator catches that, not the system.

Personal note: this is the case where I'd recommend writing the personal section from scratch and letting HeyNews assemble the rest of the issue around it. An AI Writer trained on regular issues will flatten a heartfelt anniversary or farewell. It shouldn't try.

Sponsor-heavy editions: if they're structurally different (more ad slots, a different opener, sponsor-led sections), they benefit from being their own format with their own AI Writer trained on past sponsored issues. If it's the same template with one extra ad block, that's a compose-time placement question, not a voice question.

The wider answer is the one you opened with: editorial judgment doesn't transfer. The product's job is to make the baseline cheap so the operator's attention is free for the moments that need it. Review-before-send is the explicit rule precisely because of cases like these.

This is a good one. The voice-training angle is what separates this from the generic tools. Training on actual past issues lets the model pick up jargon, quirks, and sentence patterns. Looks like the right approach! To stress-test: how does it handle newsletters that deliberately vary their tone from issue to issue? Does the model average out your style?

HeyNews

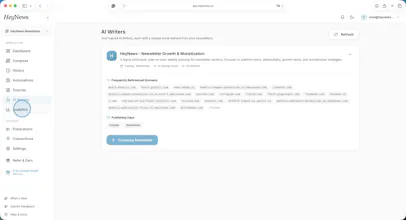

@artstavenka1 Real edge case. The per-format AI Writer setup handles part of it: if the variation is structural (Monday deep-dive vs Friday Q&A), each format gets its own Writer trained only on matching issues, and they stay separate. No averaging across them.

Where it actually struggles is intentional variation within the same format. Same newsletter, same template, but the operator deliberately shifts the tone of the issue. In that case, the AI Writer does converge on the central tendency. The workaround that works in practice is splitting systematic variation into separate formats so each gets its own AI Writer, but for mood-driven variation that isn't easily labeled, it stays a real limitation.

One related point worth flagging: what gets averaged is tone, not topic. Source scoring is a separate system, so the mix of sources brought into any specific draft still shifts the result.

That partly compensates for the averaging on the voice side, but it's a manual lever rather than something the model resolves on its own.

Curious about the voice training minimum. How many past issues does the model need before the output actually sounds like you and not generic AI? Most tools I've tested fall apart under ~20 examples.

@romain_delgado Our trials have shown that voice matching works with as few as 3 past issues, but for a stable, balanced result we recommend at least 5 issues.

HeyNews

@romain_delgado great question. ~5 issues from a single format is the practical floor for us.

That's lower than the 20-example wall you've hit on other tools because of the architecture: we train a separate AI Writer per format rather than one Writer per publication, so each AI Writer only has to learn one cadence (Monday deep-dive vs Friday roundup, say) instead of disambiguating multiple cadences from one shared pool.

The reason most tools fall apart under 20 is usually that they're trying to learn the operator's voice across mixed-format examples. Five issues of "Monday deep-dive" together are more useful for that format than fifty issues of "everything you've ever shipped." The signal isn't diluted by formats with different rhythms.

More is better. There's a noticeable gain going from 5 to 10. But the marginal return flattens fast. Operators we've onboarded with 20-30 issues per format don't get a clearly better AI Writer than those onboarded with 6-8. What helps more after the floor is variety within the format (a mix of strong and average issues, not just the greatest hits), so the Writer sees what "normal for you" looks like, not just "best of you."

The catch: it's per format, not total. An operator with 100 issues across 5 wildly different formats is in worse shape on day one than an operator with 25 issues across a single format.
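For anyone sizing up their own archive against the per-format floor, here is a rough sketch of the counting involved. It is not HeyNews code: the function name, the format labels, and the readiness tiers are made up for illustration, and the two thresholds are simply the figures quoted in this thread (about 5 issues as the floor, a noticeable gain by 10).

```python
from collections import Counter

# Assumed thresholds, taken from the figures quoted in this thread.
# They are illustrative, not HeyNews configuration values.
FLOOR = 5         # "~5 issues from a single format is the practical floor"
COMFORTABLE = 10  # "noticeable gain going from 5 to 10"


def format_readiness(issue_formats):
    """Map each format label to 'below floor', 'at floor', or 'comfortable'.

    issue_formats is a list with one (hypothetical) format label per past issue.
    """
    counts = Counter(issue_formats)
    readiness = {}
    for fmt, n in counts.items():
        if n < FLOOR:
            readiness[fmt] = "below floor"
        elif n < COMFORTABLE:
            readiness[fmt] = "at floor"
        else:
            readiness[fmt] = "comfortable"
    return readiness


# Hypothetical archive: 12 issues in total, split unevenly across three formats.
archive = (
    ["monday-deep-dive"] * 6
    + ["friday-roundup"] * 4
    + ["sponsor-special"] * 2
)

for fmt, status in format_readiness(archive).items():
    print(f"{fmt}: {status}")
```

The point of the sketch is that the total (12 issues here) says little on its own: only one of the three formats clears the floor, which is the "per format, not total" caveat in practice.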

The "learns from your archive" part is clever. I've seen so many newsletter tools that generate generic content — having it adapt to your voice is what makes it usable long-term.

I'm curious about the learning curve. How many past issues does it need before the suggestions start feeling like "you"? I run a bilingual blog (Spanish/English) for a YouTube creator tools platform and voice consistency across languages has been my biggest challenge.

@ytubviral Thanks! As my co-founder @cagrisarigoz mentioned in one of his previous replies, 5 issues per format is the minimum we need to make it sound like you. But more is definitely better, so we use as many issues as possible.

You have an interesting challenge on your hands. I think we can manage to ensure voice consistency across languages, but the problem is that nobody on our team speaks Spanish, so it would be really challenging for us to test cross-language voice fidelity in this case.

The "in your voice" part is what makes this interesting to me. I've tried AI draft tools before and the problem is always that they smooth out the rough edges that made my writing feel like me. Curious how HeyNews handles that: does it pick up on style over time, or is it more template-based? The Substack import is also a smart touch for people with an existing archive. Congrats on the launch.

HeyNews

@sagar_kalra1, the "smoothing out rough edges" failure mode is the exact one we tried to design against. Most tools end up there because they generate against a style description ("write like Sagar's newsletter, which is conversational and witty") rather than against your actual writing. A description of your voice is a flattened version of it by definition.

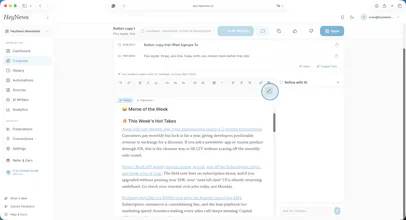

What HeyNews does instead: the AI Writer trains on your past issues directly. The rough edges come through as data: signature phrases, sentence patterns that aren't textbook-correct, the way you open sections, formatting quirks. Not as instructions, as patterns. That's what survives generation.

On the "over time" question: it's trained, not templated. The trained profile is refined after each send: performance data from your platform shifts weights on the scoring side, which changes which stories surface. The voice side is more stable. Once it knows your voice, it keeps that profile and rebuilds only if you retrain it on new issues.

Honest caveat: some voice tics still get smoothed, especially subtle rhythm choices that the model reads as noise until enough examples show otherwise. The operator review step is where those get re-added. A practical signal that voice training is working: a few issues in, you should be editing for content, not for "this doesn't sound like me." If you're still doing the second kind of edit, something's off.