Apideck is a unified API platform that lets B2B SaaS and Fintech companies connect to accounting, HRIS, and CRM software without building and maintaining each integration separately. One API, dozens of connectors, normalized data models out of the box.

This is the 6th launch from Apideck.

Apideck MCP Server

Launching today

Don't let Claude and Codex roam free on your customers' SaaS data.

Apideck MCP gives AI agents permissioned access to 200+ apps, including Accounting, CRM, HRIS, ATS, and more, through a single endpoint.

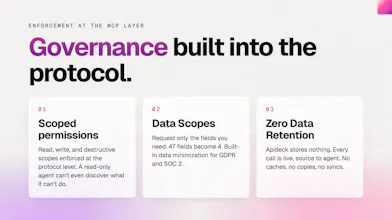

Scoped read/write permissions and field-level redaction are enforced at the MCP layer.
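The enforcement internals aren't described here. The sketch below is one possible shape for them. It assumes scopes are strings like `accounting.invoices:read` and redaction rules are dotted field paths; both formats are hypothetical. Under those assumptions, an MCP layer could check the scope before dispatching a call and then strip fields from a copy of the result:

```typescript
// Sketch of MCP-layer enforcement. Scope strings and field paths are hypothetical,
// not Apideck's actual configuration format.
type Access = "read" | "write";

interface Policy {
  scopes: Set<string>; // e.g. "accounting.invoices:read"
  redactedFields: string[]; // dotted paths, e.g. "customer.tax_number"
}

interface Operation {
  resource: string; // e.g. "accounting.invoices"
  access: Access;
}

// Deletes one dotted path from an object, descending into arrays element by element.
function redactPath(node: unknown, keys: string[]): void {
  if (Array.isArray(node)) {
    for (const item of node) redactPath(item, keys);
    return;
  }
  if (node === null || typeof node !== "object" || keys.length === 0) return;
  const obj = node as Record<string, unknown>;
  const head = keys[0] as string;
  if (keys.length === 1) {
    delete obj[head];
  } else {
    redactPath(obj[head], keys.slice(1));
  }
}

// Checks the scope before the call, then redacts fields on a copy of the result.
function guardedCall(policy: Policy, op: Operation, call: () => unknown): unknown {
  const required = `${op.resource}:${op.access}`;
  if (!policy.scopes.has(required)) {
    throw new Error(`Missing scope: ${required}`);
  }
  // A JSON round-trip copies the connector's JSON payload so redaction never mutates it.
  const result: unknown = JSON.parse(JSON.stringify(call()));
  for (const path of policy.redactedFields) redactPath(result, path.split("."));
  return result;
}
```

Both checks sit in front of the connector. As a result, a client such as Claude or Cursor never receives a redacted field, regardless of what the agent asks for.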

Works with any MCP client (Claude, Cursor, Codex, Windsurf, LangChain, Vercel AI SDK) and agent runtimes like OpenClaw and Hermes.

One MCP server. 200+ apps. Production-ready.

Free Options


apideck

Hey Product Hunt 👋

We shipped something different with this one.

Apideck is a Unified API. One integration gives developers access to 20+ accounting systems, 20+ HRIS platforms, file storage, and more. That means our MCP server doesn't expose "QuickBooks invoices"; it exposes "accounting invoices", and the connector that runs is determined by which service the user has authorized. Our tool surface is 229 operations and growing.
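Here is a minimal sketch of that routing. It assumes there is a per-customer record of which accounting service was authorized. The `listAccountingInvoices` function, the unified invoice fields, and the stubbed data are all hypothetical. The native field names are a simplified subset of QuickBooks Online and Xero invoice payloads:

```typescript
// Unified shape returned for every accounting connector (simplified, hypothetical).
interface UnifiedInvoice {
  id: string;
  total: number;
  currency: string;
}

// Simplified native payloads; real QuickBooks/Xero responses carry many more fields.
interface QuickBooksInvoice { Id: string; TotalAmt: number; CurrencyRef: { value: string } }
interface XeroInvoice { InvoiceID: string; Total: number; CurrencyCode: string }

// Each connector fetches natively, then maps into the unified shape.
// The fetches are stubbed so the sketch runs offline.
const connectors: { [serviceId: string]: (() => UnifiedInvoice[]) | undefined } = {
  quickbooks: () => {
    const native: QuickBooksInvoice[] = [{ Id: "101", TotalAmt: 100, CurrencyRef: { value: "USD" } }];
    return native.map((i) => ({ id: i.Id, total: i.TotalAmt, currency: i.CurrencyRef.value }));
  },
  xero: () => {
    const native: XeroInvoice[] = [{ InvoiceID: "a1b2", Total: 250, CurrencyCode: "EUR" }];
    return native.map((i) => ({ id: i.InvoiceID, total: i.Total, currency: i.CurrencyCode }));
  },
};

// Which accounting service each customer authorized (a stand-in for a connection store).
const authorizedService: { [consumerId: string]: string | undefined } = {
  "customer-a": "quickbooks",
  "customer-b": "xero",
};

// One "accounting invoices" operation; the customer's connection picks the connector.
function listAccountingInvoices(consumerId: string): UnifiedInvoice[] {
  const serviceId = authorizedService[consumerId];
  if (serviceId === undefined) throw new Error(`No accounting connection for ${consumerId}`);
  const connector = connectors[serviceId];
  if (connector === undefined) throw new Error(`Unsupported service: ${serviceId}`);
  return connector();
}
```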

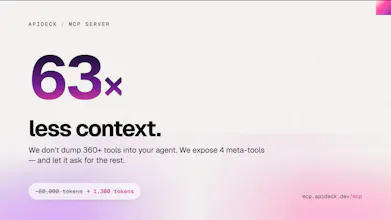

The harder problem was tokens. In static mode, exposing all 229 tools costs 25-40K tokens before an agent reads a single message. We solved it with dynamic tool discovery: 4 meta-tools at startup (~1,300 tokens), and agents discover what they need on demand. This also means adding ecommerce and CRM won't cost a single extra token at initialization.
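For scale, those figures work out to roughly 110–175 tokens per tool definition in static mode (25–40K across 229 tools). That compares with about 325 tokens per meta-tool (~1,300 across 4). The sketch below shows one way the discovery pattern can work. The source doesn't name the four meta-tools, so `list_categories`, `search_tools`, `describe_tool`, and `call_tool` are hypothetical, as is the three-tool catalog:

```typescript
// Sketch of dynamic discovery. Meta-tool names and catalog entries are hypothetical.
type Args = Record<string, unknown>;

interface ToolDef {
  name: string;
  category: string;
  description: string;
  inputSchema: Args; // JSON Schema, sent only when an agent asks for it
  run: (args: Args) => unknown;
}

// The full catalog stays server-side; none of it is in the initial tool list.
const catalog: ToolDef[] = [
  {
    name: "accounting-invoices-list",
    category: "accounting",
    description: "List invoices",
    inputSchema: { type: "object", properties: { limit: { type: "number" } } },
    run: () => [],
  },
  {
    name: "hris-employees-list",
    category: "hris",
    description: "List employees",
    inputSchema: { type: "object", properties: {} },
    run: () => [],
  },
  {
    name: "crm-contacts-create",
    category: "crm",
    description: "Create a contact",
    inputSchema: { type: "object", properties: { email: { type: "string" } }, required: ["email"] },
    run: (args) => ({ id: "c_1", ...args }),
  },
];

function findTool(name: string): ToolDef {
  const tool = catalog.find((t) => t.name === name);
  if (!tool) throw new Error(`Unknown tool: ${name}`);
  return tool;
}

// The only four tools advertised at initialization; their cost stays flat as the catalog grows.
const metaTools = {
  list_categories: (): string[] => Array.from(new Set(catalog.map((t) => t.category))),
  search_tools: (query: string) => {
    const q = query.toLowerCase();
    return catalog
      .filter((t) => t.name.includes(q) || t.description.toLowerCase().includes(q))
      .map((t) => ({ name: t.name, description: t.description }));
  },
  describe_tool: (name: string) => {
    const t = findTool(name);
    return { name: t.name, description: t.description, inputSchema: t.inputSchema };
  },
  call_tool: (name: string, args: Args): unknown => findTool(name).run(args),
};
```

An agent would typically search first, then describe only the tool it picked. That way, full input schemas enter the context one at a time rather than all at startup.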

The server is live at mcp.apideck.dev/mcp. Code is open source at github.com/apideck-libraries/mcp. Full write-up on the stack, hosting tradeoffs, and the analytics debugging is on our blog.

Happy to answer questions about the OpenAPI-to-MCP generation pipeline, the dynamic discovery architecture, or why we picked Vercel over Cloudflare Workers.

The data normalization layer is where unified API platforms either win or quietly accumulate debt. Connectivity to 200+ platforms is the easy part; the hard, irreversible decisions are the data model choices. How do you reconcile QuickBooks' chart of accounts, NetSuite's multi-subsidiary model, and DATEV's tax-first schema into one object? Once customers are in production on your model, you can't break it. I'm curious how Apideck handles model versioning, and whether breaking changes in upstream connectors surface as silent data drift or as noisy failures.

apideck

Yes, I agree. You can test our unified APIs to get an idea of how we normalize data; the schemas and guides are already in our docs.

An MCP server that reaches 200+ apps is the kind of leverage I keep wishing existed for one-off automation. Quick question: how do you handle write actions that aren't idempotent across the underlying APIs (e.g., Salesforce vs. HubSpot create-contact dedupe)? Do agents see a unified shape or each provider's quirks?

apideck

@whateverneveranywhere

Yes, agents see a unified schema and unified API responses, which they can use for both read and write operations.
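The reply doesn't say how provider-specific dedupe rules are handled. This sketch therefore covers only the unified-shape part. It maps two simplified provider payloads to one hypothetical contact type, and back again for writes. Field names follow Salesforce's Contact object and HubSpot's v3 contact `properties`; everything else is assumed:

```typescript
// Simplified provider payloads; the unified shape is hypothetical.
interface SalesforceContact {
  Id: string;
  FirstName?: string | null;
  LastName: string;
  Email?: string | null;
}

interface HubSpotContact {
  id: string;
  properties: { firstname?: string | null; lastname?: string | null; email?: string | null };
}

interface UnifiedContact {
  id: string;
  firstName: string | null;
  lastName: string | null;
  email: string | null;
}

// Read path: each provider's payload becomes the same unified contact.
function fromSalesforce(c: SalesforceContact): UnifiedContact {
  return { id: c.Id, firstName: c.FirstName ?? null, lastName: c.LastName, email: c.Email ?? null };
}

function fromHubSpot(c: HubSpotContact): UnifiedContact {
  const p = c.properties;
  return { id: c.id, firstName: p.firstname ?? null, lastName: p.lastname ?? null, email: p.email ?? null };
}

// Write path: one unified input, translated back into each provider's field names.
function toSalesforce(u: Omit<UnifiedContact, "id">): Omit<SalesforceContact, "Id"> {
  // Salesforce requires LastName on Contact, so reject the write early instead of sending "".
  if (!u.lastName) throw new Error("Salesforce contacts require a last name");
  return { FirstName: u.firstName, LastName: u.lastName, Email: u.email };
}

function toHubSpot(u: Omit<UnifiedContact, "id">): Omit<HubSpotContact, "id"> {
  return { properties: { firstname: u.firstName, lastname: u.lastName, email: u.email } };
}
```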

Oh, love this idea! I used to have a lot of problems comparing MRR across CRM and accounting systems. If I could have had the data in one place back then, it would have saved me hours and a lot of frustration.

apideck

@philip_kubinski thanks for the validation!

apideck

@philip_kubinski

Yes, that is the pain point we're trying to solve with AI agents. We already have our unified API, and with the MCP server on top of it, you can easily combine data from different sources and use it to build combined reports, take actions, and more.

What used to take hours can now be done in under 20 minutes.

Congrats on the launch!

The dynamic tool discovery part is interesting, especially if it keeps the token cost down. For accounting/CRM write actions, do you expose enough context for audit/review before the agent actually writes? That feels like the scary part with MCP + business data.

apideck

Hi Ihor, mutating data is the scary part. That's why we added scoped permissions before the agent can run anything destructive.

@gertjanwilde Makes sense. Scoped permissions help a lot. For destructive actions, a quick "review before write" step would make it much easier to trust.

apideck

@ihorperkovskyi Exactly, we're working on an agent builder (preview below) that embeds this. As you correctly identified, this is overlooked in agent design.
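The agent builder itself isn't described here. Below is a minimal sketch of a "review before write" step. It assumes destructive tool calls are staged and only executed once a reviewer approves them. `ReviewGate` and its methods are hypothetical:

```typescript
// Hypothetical "review before write" gate: destructive calls are staged, not executed,
// until a reviewer approves them. Not a description of Apideck's agent builder.
interface Mutation {
  tool: string;
  args: Record<string, unknown>;
}

class ReviewGate {
  private pending = new Map<string, Mutation>();
  private counter = 0;

  // Stage the write and return a human-readable summary for the reviewer.
  propose(m: Mutation): { reviewId: string; summary: string } {
    this.counter += 1;
    const reviewId = `rev_${this.counter}`;
    this.pending.set(reviewId, m);
    return { reviewId, summary: `${m.tool} ${JSON.stringify(m.args)}` };
  }

  // Execute only after approval; each review ID can be used once.
  approve(reviewId: string, execute: (m: Mutation) => unknown): unknown {
    const m = this.pending.get(reviewId);
    if (m === undefined) throw new Error(`No pending write: ${reviewId}`);
    this.pending.delete(reviewId);
    return execute(m);
  }

  // Drop a staged write; returns false if there was nothing to drop.
  reject(reviewId: string): boolean {
    return this.pending.delete(reviewId);
  }
}
```

Making review IDs single-use means an agent can't replay an approval to repeat a write it was only allowed to make once.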

Really like this direction. Tool access for agents sounds simple until you’re juggling huge tool surfaces, token limits, and permissions. The dynamic discovery part is clever. I wonder how performance holds up when workflows get long and agents keep discovering more tools on the go.

apideck

@jaidevxb You can already limit which permissions your MCP server has in the Apideck dashboard.

apideck

Co-maker here 👋

Small thing worth mentioning: every tool call is instrumented through PostHog, with `waitUntil`-flushed batches so events survive Vercel's serverless lifecycle.
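Here is a sketch of that pattern under two assumptions. First, `send` stands in for the PostHog client; no PostHog API is called here. Second, `waitUntil` is passed in as a function so the code runs outside Vercel. On Vercel, it would be the `waitUntil` helper exported by `@vercel/functions`, which keeps the function alive until the given promise settles:

```typescript
// Sketch of per-call instrumentation with batched, deferred flushing.
// `send` is a stand-in for an analytics client; `waitUntil` is injected.
interface ToolEvent {
  tool: string;
  durationMs: number;
  ok: boolean;
}

class EventBatcher {
  private buffer: ToolEvent[] = [];
  private readonly send: (batch: ToolEvent[]) => Promise<void>;
  private readonly maxBatch: number;

  constructor(send: (batch: ToolEvent[]) => Promise<void>, maxBatch = 50) {
    this.send = send;
    this.maxBatch = maxBatch;
  }

  record(event: ToolEvent): void {
    this.buffer.push(event);
  }

  // Drains the buffer in chunks of `maxBatch`; the returned promise settles when all are sent.
  flush(): Promise<void> {
    const events = this.buffer;
    this.buffer = [];
    const sends: Promise<void>[] = [];
    for (let i = 0; i < events.length; i += this.maxBatch) {
      sends.push(this.send(events.slice(i, i + this.maxBatch)));
    }
    return Promise.all(sends).then(() => undefined);
  }
}

// Wraps a tool handler: times it, records success or failure, and hands the flush to
// `waitUntil` so the response is not blocked but the events outlive it.
async function instrumented<T>(
  tool: string,
  handler: () => Promise<T>,
  batcher: EventBatcher,
  waitUntil: (p: Promise<unknown>) => void,
): Promise<T> {
  const start = Date.now();
  let ok = false;
  try {
    const result = await handler();
    ok = true;
    return result;
  } finally {
    batcher.record({ tool, durationMs: Date.now() - start, ok });
    waitUntil(batcher.flush());
  }
}
```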

Which of the 330 tools agents actually call, latency per operation, error rates by scope: all of it feeds back into what we prioritize next. That includes workflow tools like `apideck-month-end-close-check` (accounting), which fans out 4 reports in parallel behind one MCP call. Analytics tell us when composition above the protocol actually pays off versus when agents would rather chain the underlying tools themselves.
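The implementation of `apideck-month-end-close-check` isn't shown. Below is a minimal sketch of the fan-out. It assumes each report is fetched by an independent async function; the four report names and the result shape are hypothetical:

```typescript
// Sketch of a composite workflow tool: one MCP call fans out to several report fetches
// in parallel. Report names and the result shape are hypothetical.
type Report = Record<string, unknown>;
type ReportFetcher = () => Promise<Report>;

interface CloseCheckResult {
  reports: Record<string, Report>;
  failed: string[];
}

async function monthEndCloseCheck(fetchers: Record<string, ReportFetcher>): Promise<CloseCheckResult> {
  // Every fetcher is started before any is awaited, so they run concurrently.
  const outcomes = await Promise.all(
    Object.entries(fetchers).map(([name, run]) =>
      run().then(
        (data) => ({ name, data: data as Report | undefined }),
        // A failed report is recorded instead of failing the whole check.
        () => ({ name, data: undefined as Report | undefined }),
      ),
    ),
  );
  const result: CloseCheckResult = { reports: {}, failed: [] };
  for (const { name, data } of outcomes) {
    if (data === undefined) result.failed.push(name);
    else result.reports[name] = data;
  }
  return result;
}
```

Recording per-report failures instead of rejecting the whole call is one design choice. It lets the agent still act on the reports that did come back.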

Hard to build for agents without seeing how they use the surface.