Parallax by Gradient

Host LLMs across devices sharing GPU to make your AI go brrr

827 followers

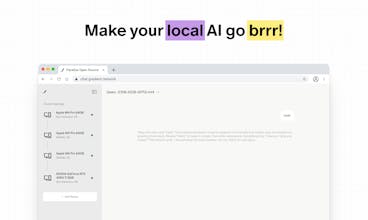

Your local AI just leveled up to multiplayer. Parallax is the easiest way to build your own AI cluster to run the best large language models across devices, no matter their specs or location.

Parallax by Gradient

Hello Product Hunt 👋,

Everyone loves free, private LLMs. But today, they’re still not as scalable or easy to use as they should be.

We’ve always felt that local AI should be as powerful as it is personal, and this is why we built Parallax.

Parallax started from a simple question: what if your laptop could host more than just a small model? What if you could tap into other devices (friends, teammates, your other machines) and run something much bigger, together?

We made that possible. It’s the first framework to serve models in a fully distributed way across devices, regardless of hardware or location.

No one will ever be GPU-poor again!

In benchmarks, Parallax already surpasses other popular local AI projects and frameworks, and this is just the beginning. We’re working on LLM inference optimization techniques and deeper system-level improvements to make local AI faster, smoother, and so natural it feels almost invisible.

Parallax is completely free to use, and we’d love for you to try it and build with us!

@rymon Wow! This is the project I was "hunting" for so long! Thank you, Gradient, for building this and open-sourcing it. Here comes the wave of innovators. I see so many possibilities. Are you partnering with Hugging Face, Apple/MLX, Ollama...? Would love to hear about your roadmap and hopefully contribute to this lovely project.

Agnes AI

Pooling devices for distributed LLMs is terrific... My laptop always struggles solo :( Does Parallax handle dynamic connections if friends drop in and out mid-session? Would love to try it out ASAP!

Parallax by Gradient

@cruise_chen We will add node auto-rebalancing very soon, so anyone can tap in and tap out mid-session. Stay tuned!

Parallax by Gradient

@pavel_evteev Yes! Sovereignty is great!

This is such a smart direction. A distributed local AI cloud really changes how people think about compute access.

Have you thought about adding a way for users to share or rent out idle GPU power within a trusted network?

Parallax by Gradient

@farhan_nazir55 As more and more users start using Parallax, this is totally possible! Let’s build together!

Is Parallax designed mainly for LLM inference, or could it also support other workloads like diffusion models or RAG pipelines?

Parallax by Gradient

@clayccc Exactly! In the future, Parallax will support a wider range of models and a flexible workflow that keeps your memory private. Stay tuned for our upcoming updates!

RewriteBar

This is cool! Is it also possible to connect the GPU to the public network so that it can be accessed remotely from different locations?

Parallax by Gradient

@m91michel Tweaking the code a bit can make this happen. You’re welcome to fork and tailor it to what you want!