Mindra

Agent Teams You Can Actually Delegate To

891 followers

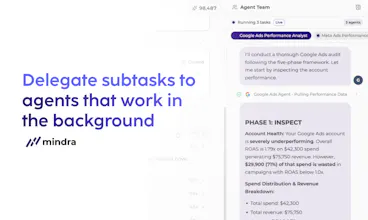

Mindra is the command center for your always-on, 24/7 agentic team. Describe your task, and Mindra will create the best agentic team for you. Automate your marketing, supply chain and more. With Mindra's built-in governance, human oversight, and support for your existing stack, you can finally trust your agents.

Mindra

Hey Product Hunt 👋. I’m Zeynep, co-founder of Mindra. My co-founders @denizsoylular, @ilker_yoru and I started building Mindra 6 months ago.

The problem

AI agents are powerful, but isolated. Each does one thing, none collaborate, and when one fails, the whole workflow breaks.

Most tools fall into two dead ends:

• Single-agent assistants → great at drafting, not at doing. A summary isn’t a shipped campaign.

• Pre-defined chains (Zapier, n8n, LangChain) → look clean on a whiteboard, but in production every step is a fragile point of failure. No critic, no retry, no fallback.

What we built instead

Mindra runs teams of specialized AI agents that actually execute work across your tools—with humans in the loop where it matters, and tight permissions everywhere else.

Why it’s different

• Teams, not chains → Every workflow gets a purpose-built agent team tailored to your company

• Self-healing, always-on → Agents run 24/7, re-plan and retry when things break, and only escalate when truly stuck

• 3,000+ integrations → Meta Ads, Google Ads, HubSpot, Salesforce, Slack, your ERP—no glue code

• Compounding memory → Agents learn your business (tone, policies, playbooks). Context gets stronger over time

Who it’s for

Marketing, sales, ops, support, supply chain: any team buried in repetitive execution. Our users are running campaigns end-to-end, automating outbound, handling tier-1 support, and closing books weeks faster.

Check it out

👉 mindra.co

For the Product Hunt community: we’re onboarding a small group of pilot customers this quarter. Bring one workflow you’d love to delegate—we’ll scope it with you, no pitch.

We're hosting an online launch party at 5 pm on May 4th where you can ask us your questions directly.

Register here: https://luma.com/dmph2nle

Drop a comment or DM me. I’ll be here all day 🙌

RiteKit Company Logo API

The cost unpredictability is why a lot of teams still hesitate on autonomous agents, even when the ROI math works. Are you guys implementing hard token limits or retry budgets that the agents respect, or is there more of a safety net approach where you catch runaway behavior and adjust?

Mindra

@osakasaul There are multiple guardrails we have implemented to prevent it from happening.

- Mindra is self-healing: it does not blindly repeat failing tool calls like static workflow builders do. It checks the error and the documentation, then tries different parameters or finds different tools to complete the task.

- It keeps you in the loop: if an action keeps failing despite all efforts and Mindra judges that action essential to completing the task, it reaches out to you and waits for your input.

- You can configure a maximum running time and a maximum number of tool failures per heartbeat of the agent, after which it terminates. If it wakes up at 2 am to start a task but hits a completely unexpected error and cannot complete it, you might want it to stop at a hard deadline of 1 hour or after 10 failed tool calls.
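To make the third guardrail concrete, here is a minimal Python sketch of a per-heartbeat budget that stops a run at whichever limit it hits first: a wall-clock deadline or a count of failed tool calls. It is not Mindra's code; `HeartbeatBudget` and `run_with_budget` are hypothetical names, and the defaults simply mirror the 1-hour and 10-failure example.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class HeartbeatBudget:
    """Hypothetical per-heartbeat guardrail: a wall-clock limit plus a failure cap."""
    max_runtime_s: float = 3600.0   # e.g. a 1-hour hard deadline
    max_tool_failures: int = 10     # e.g. stop after 10 failed tool calls
    clock: Callable[[], float] = time.monotonic  # injectable so tests can fake time
    started_at: float = field(default=0.0, init=False)
    failures: int = field(default=0, init=False)

    def __post_init__(self) -> None:
        self.started_at = self.clock()

    def record_failure(self) -> None:
        self.failures += 1

    def exhausted(self) -> Optional[str]:
        """Return why the run must stop, or None if it may continue."""
        if self.clock() - self.started_at >= self.max_runtime_s:
            return "runtime limit reached"
        if self.failures >= self.max_tool_failures:
            return "tool failure limit reached"
        return None


def run_with_budget(attempt: Callable[[], bool], budget: HeartbeatBudget) -> str:
    """Keep attempting a step (True = success) until it succeeds or the budget runs out."""
    while (reason := budget.exhausted()) is None:
        if attempt():
            return "completed"
        budget.record_failure()
    return f"terminated: {reason}"
```

Because the budget is checked before every attempt, a stuck run can overrun the deadline by at most one in-flight attempt; the injectable `clock` exists only so the deadline can be tested without waiting an hour.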

the agent-team angle is interesting — most agent products today still ship as a single agent that pretends to be a team. real delegation needs a coordinator that owns task decomposition + retry logic, not just parallel chat windows. curious what you're using under the hood for the orchestration layer? we hit a similar problem on a different category of agent work and ended up writing the orchestrator as a state machine because LLM-driven orchestration was too non-deterministic for production. great to see this shipped today, congrats.

Mindra

@whateverneveranywhere This is exactly the tension we wrestled with for months. You're right that pure LLM-driven orchestration is too non-deterministic for production. We learned that the hard way. What we landed on is a hybrid: a structured coordinator layer that owns task decomposition, state, and retry logic, with LLM reasoning used selectively for the parts that genuinely need it (edge cases, re-planning when something unexpected happens, deciding how to escalate). The state machine instinct makes a lot of sense. Predictability matters more than flexibility at the orchestration layer. The LLM shouldn't be deciding whether to retry; it should be deciding how to recover, within a frame the coordinator controls.
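The split described here, where code decides whether to retry and the model only decides how to recover, can be sketched as a small state machine. This is an illustrative Python sketch, not Mindra's coordinator: `plan_recovery` stands in for an LLM call, and the state names, `ALLOWED_RECOVERIES`, and the attempt limit are assumptions.

```python
from enum import Enum
from typing import Callable, List, Tuple


class State(Enum):
    RUNNING = "running"
    RECOVERING = "recovering"
    ESCALATED = "escalated"
    DONE = "done"


# The frame the coordinator controls: the planner may only choose among these.
ALLOWED_RECOVERIES = {"retry_same", "change_params", "switch_tool"}


def coordinate(step: Callable[[str], bool],
               plan_recovery: Callable[[str, int], str],
               max_attempts: int = 3) -> Tuple[State, List[str]]:
    """Deterministic state machine; plan_recovery (an LLM stand-in) only picks how to recover."""
    state, attempt, strategy, log = State.RUNNING, 0, "initial", []
    while state not in (State.DONE, State.ESCALATED):
        if state is State.RUNNING:
            attempt += 1
            ok = step(strategy)
            log.append(f"attempt {attempt} via {strategy}: {'ok' if ok else 'failed'}")
            if ok:
                state = State.DONE
            elif attempt >= max_attempts:
                # Whether to keep retrying is decided here, by code, not by the model.
                state = State.ESCALATED
            else:
                state = State.RECOVERING
        else:  # State.RECOVERING
            proposal = plan_recovery(strategy, attempt)
            # Proposals outside the allowed frame are replaced with a safe default.
            strategy = proposal if proposal in ALLOWED_RECOVERIES else "retry_same"
            state = State.RUNNING
    return state, log
```

Any proposal outside `ALLOWED_RECOVERIES` falls back to a plain retry, so a non-deterministic planner can change the path taken but never the stopping rule.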

The 'agentic team you can actually delegate to' framing is what nails it for me — I run finance content (financial modeling YouTube at https://www.youtube.com/@Mod3Loop) on top of a day job in M&A, and the bottleneck has never been ideas, it's the production layer (briefs, thumbnails, repurposing, distribution). Curious how Mindra handles agents that need long-running domain context (e.g. a recurring "audit my LBO model walkthrough script for IRR/DSCR accuracy before publishing") vs. one-off tasks. Where does the human governance step usually slot in?

Mindra

@samir_asadov

For something like a recurring 'audit my LBO script for IRR/DSCR accuracy before publishing,' you're right that this doesn't need a dedicated agent spun up each time. If the task is stateless (just: here's the script, check the math), the orchestrator handles it as a single LLM call with the right financial context baked into the system prompt. Fast, low overhead.

Where persistent agents start to earn their keep is when the task needs memory across runs, e.g. the system knowing your previous episode's model assumptions, your channel's notation style, or that you always use a 5-year hold period in your LBO frameworks. That's when a long-running agent with domain context actually saves time vs. re-prompting from scratch every time.
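A minimal sketch of that routing decision, assuming a hypothetical `needs_memory` flag on each task and an `llm` callable that takes a prompt string: stateless checks go out as one call, while recurring work reuses a long-running agent whose remembered facts (such as a 5-year hold period) are prepended to every prompt. The names are illustrative, not Mindra's API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class PersistentAgent:
    """Hypothetical long-running agent that carries facts from one run to the next."""
    system_prompt: str
    memory: Dict[str, str] = field(default_factory=dict)

    def remember(self, key: str, value: str) -> None:
        self.memory[key] = value

    def build_prompt(self, task_input: str) -> str:
        facts = "\n".join(f"- {k}: {v}" for k, v in sorted(self.memory.items()))
        return f"{self.system_prompt}\nKnown context:\n{facts}\nTask:\n{task_input}"


def route(task_input: str, needs_memory: bool, system_prompt: str,
          agents: Dict[str, PersistentAgent], agent_key: str,
          llm: Callable[[str], str]) -> str:
    if not needs_memory:
        # Stateless: a single call; all context lives in the system prompt.
        return llm(f"{system_prompt}\nTask:\n{task_input}")
    # Stateful: reuse (or create) the long-running agent so its memory accumulates.
    agent = agents.setdefault(agent_key, PersistentAgent(system_prompt))
    return llm(agent.build_prompt(task_input))
```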

On human governance: for content like yours where accuracy really matters (you're publishing financial models to an audience that will use them), the natural slot is a 'review gate' before publishing. The agent does the heavy lifting and flags anything uncertain; you make the final call.

With YouTube integration, briefs, thumbnails, and analytics handled at the platform level, that audit step is really the last meaningful human touchpoint before a video goes live.
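One way to make such a review gate hard rather than advisory is to have publishing refuse anything without a recorded human approval. A minimal Python sketch; `Draft`, `review_gate`, and `publish` are hypothetical names, and a real system would persist the approval instead of keeping it on an in-memory object.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Draft:
    script: str
    flags: List[str] = field(default_factory=list)  # items the agent was unsure about
    approved_by: Optional[str] = None               # set only by a human reviewer


def review_gate(draft: Draft, reviewer: str, approve: bool) -> Draft:
    """Record the human decision; the agent never sets approved_by itself."""
    if approve:
        draft.approved_by = reviewer
    return draft


def publish(draft: Draft) -> str:
    if draft.approved_by is None:
        raise PermissionError(f"blocked: awaiting review ({len(draft.flags)} flagged items)")
    return f"published (approved by {draft.approved_by})"
```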

The naming is spot on - "agent teams you can actually delegate to." When I was scaling from 15 to 120 engineers as CTO, the hardest part wasn't hiring - it was learning to delegate effectively without losing quality. If AI agents can genuinely own a workflow end-to-end with proper human oversight, that changes the game for lean teams trying to punch above their weight. Curious how the governance layer works in practice.

Mindra

@avrisimon Thank you for the insight! We actually put the governance layer outside of the LLM to make sure the decision-making process is deterministic.
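A deterministic governance check of this kind can be as simple as a policy function that inspects each action an agent proposes before it runs. The sketch is illustrative only: the tool names, the per-agent allowlist, the approval list, and the 500 USD spend cap are all assumptions, not Mindra's actual policy model.

```python
from dataclasses import dataclass
from typing import Dict, Set


@dataclass(frozen=True)
class ProposedAction:
    agent: str
    tool: str
    spend_usd: float = 0.0


# Hypothetical policy: plain data evaluated by ordinary code, never by the model.
ALLOWED_TOOLS: Dict[str, Set[str]] = {
    "ads_agent": {"meta_ads.update_budget", "slack.post"},
    "support_agent": {"helpdesk.reply"},
}
APPROVAL_REQUIRED = {"meta_ads.update_budget"}
SPEND_CAP_USD = 500.0


def decide(action: ProposedAction) -> str:
    """Return 'deny', 'needs_approval' or 'allow'; the same input always gets the same answer."""
    if action.tool not in ALLOWED_TOOLS.get(action.agent, set()):
        return "deny"
    if action.spend_usd > SPEND_CAP_USD:
        return "deny"
    if action.tool in APPROVAL_REQUIRED:
        return "needs_approval"
    return "allow"
```

Because the policy is plain data evaluated by ordinary code, a proposed action gets the same verdict every time, regardless of how the model arrived at proposing it.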

For the always-on 24/7 piece, how do you keep costs predictable? An agent team that re-plans and retries sounds great until I'm staring at an LLM bill that 10x'd because something kept failing overnight.

Mindra

@iamryan Great question, Ryan. That's a valid concern.

There are multiple guardrails we have implemented to prevent it from happening.

- Mindra is self-healing: it does not blindly repeat failing tool calls like static workflow builders do. It checks the error and the documentation, then tries different parameters or finds different tools to complete the task.

- It keeps you in the loop: if an action keeps failing despite all efforts and Mindra judges that action essential to completing the task, it reaches out to you and waits for your input.

- You can configure a maximum running time and a maximum number of tool failures per heartbeat of the agent, after which it terminates. If it wakes up at 2 am to start a task but hits a completely unexpected error and cannot complete it, you might want it to stop at a hard deadline of 1 hour or after 10 failed tool calls.

Mindra

@anusuya_bhuyan You’re totally right, “actually” has to earn its keep here.

The thing we learned is that delegation breaks when agents are treated like loose chat threads. For Mindra, delegation is more structured: the orchestrator breaks work into scoped tasks, creates or selects the right specialist agents, assigns only the tools they should use, tracks their progress, and then reconciles the results back into one answer.
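The loop described here (scope the work, pick a specialist, hand it only its tools, track status, reconcile) can be sketched in a few dozen lines of Python. This is a simplified, sequential illustration under assumed names (`SubTask`, `call_tool`, `orchestrate`); it is not Mindra's orchestrator, and `call_tool` returns a placeholder instead of calling a real integration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set


@dataclass
class SubTask:
    name: str
    specialist: str
    tools: Set[str]           # the only tools this sub-agent may call
    status: str = "pending"   # pending -> done | failed
    result: str = ""


def call_tool(task: SubTask, tool: str) -> str:
    """Tool gateway: refuses any tool outside the sub-task's scope."""
    if tool not in task.tools:
        raise PermissionError(f"{task.name} may not call {tool}")
    return f"{tool} ok"  # placeholder for the real integration call


def orchestrate(subtasks: List[SubTask],
                specialists: Dict[str, Callable[[SubTask], str]],
                reconcile: Callable[[List[SubTask]], str]) -> str:
    for task in subtasks:
        agent = specialists[task.specialist]  # pick the specialist for this scope
        try:
            task.result = agent(task)
            task.status = "done"
        except Exception as exc:              # record the failure; keep the other tasks going
            task.status, task.result = "failed", str(exc)
    return reconcile(subtasks)                # merge per-task results into one answer
```

A specialist that reaches for a tool outside its scope fails only its own sub-task with a `PermissionError`, and the reconcile step still sees the status of every sub-task.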

The hard part is not just “can an agent call a tool?” It’s: did it get the right tool, did auth work, did the tool result come back, did the sub-agent finish, and can the orchestrator recover when one part fails?

So we’re building Mindra around real execution state, tool-call visibility, retries, auth recovery, and agent accountability. That’s what makes multi-step work much more reliable than just asking one model to improvise through a long task.
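For the execution-state side, a minimal sketch might log every tool call as it happens and treat expired credentials as a recoverable case: refresh once, then retry. The `AuthExpired` exception, the `ExecutionLedger` class, and the two-attempt limit are hypothetical; a production system would also persist the ledger and distinguish more failure types.

```python
from dataclasses import dataclass, field
from typing import Callable, List


class AuthExpired(Exception):
    """Hypothetical error a tool wrapper raises when its credentials have expired."""


@dataclass
class CallRecord:
    tool: str
    attempt: int
    outcome: str


@dataclass
class ExecutionLedger:
    """Append-only record of every tool call, so each attempt is visible afterwards."""
    records: List[CallRecord] = field(default_factory=list)

    def call(self, tool: str, fn: Callable[[], str],
             refresh_auth: Callable[[], None], max_attempts: int = 2) -> str:
        for attempt in range(1, max_attempts + 1):
            try:
                result = fn()
            except AuthExpired:
                self.records.append(CallRecord(tool, attempt, "auth_expired"))
                if attempt < max_attempts:
                    refresh_auth()  # recover credentials, then retry
                continue
            except Exception as exc:
                self.records.append(CallRecord(tool, attempt, f"error: {exc}"))
                raise  # non-auth failures go back to the orchestrator
            self.records.append(CallRecord(tool, attempt, "ok"))
            return result
        raise RuntimeError(f"{tool}: auth not recovered after {max_attempts} attempts")
```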