Launching today

HallucinationBuster

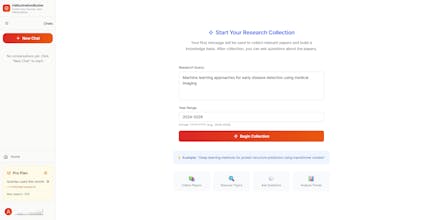

AI research tool where YOU choose which publishers to trust

7 followers

ChatGPT fabricates 20-56% of academic citations. HallucinationBuster takes a different approach: it searches real academic databases, lets you pick which publishers and years to include, then processes ONLY your approved papers. AI-powered topic clustering, relevance scoring, and RAG chat, all grounded in verified sources with clickable DOIs. You control the sources. The AI never sees a paper you didn't approve. Free tier available, no credit card needed.

Hey Product Hunt Family!

I'm Priyabrata, and I built HallucinationBuster because I got burned by fake citations during my research work.

I was using ChatGPT to help with literature reviews and kept finding references that looked perfect: correct formatting, plausible author names, real-sounding journal titles. The only problem was that the papers didn't exist. When I checked the DOIs, they went nowhere.

This isn't a rare problem. A 2025 study from Deakin University found that 56% of ChatGPT's academic citations contain errors or are completely fabricated. Another study found a 91% hallucination rate for Google Gemini on research queries. Even Big Four consulting firms aren't immune. KPMG delivered a government report on research integrity to Australia's NHMRC that contained a fabricated citation. Similarly, Deloitte was caught using AI in a $290,000 report meant to help the Australian government crack down on welfare fraud, after a researcher flagged hallucinations that included non-existent references and a fake court quote.

So I built something different. HallucinationBuster doesn't ask AI to remember papers. It:

→ Searches real academic databases with 200M+ papers

→ Collects 1,000+ papers on your topic

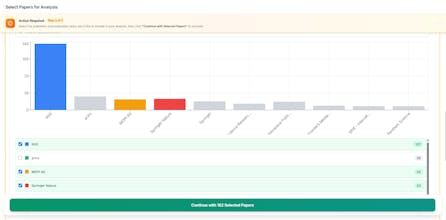

→ Lets you filter by publisher (IEEE, Springer, Nature, etc.) and year range

→ Only THEN does AI process your selected papers: clustering topics, scoring relevance, and powering a RAG chat

The key difference: you control the source universe before AI touches anything. No tool I've found (Elicit, Consensus, Perplexity) gives you this level of pre-processing source control.
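To make the "filter first, AI second" idea concrete, here is a minimal Python sketch of the gating step. This is not HallucinationBuster's actual code: the `Paper` fields, the function names `approve_papers` and `build_ai_context`, and the sample DOIs are all hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Paper:
    # Hypothetical record shape; the real tool's schema is not public.
    title: str
    publisher: str
    year: int
    doi: str


def approve_papers(candidates, publishers, year_from, year_to):
    """Keep only papers from user-approved publishers within the year range."""
    allowed = {name.lower() for name in publishers}
    return [
        paper
        for paper in candidates
        if paper.publisher.lower() in allowed
        and year_from <= paper.year <= year_to
    ]


def build_ai_context(approved):
    """Stand-in for the AI step: it receives approved papers and nothing else."""
    return [f"{p.title} (https://doi.org/{p.doi})" for p in approved]


# Placeholder search results; the DOIs below are made up for the example.
candidates = [
    Paper("Paper A", "IEEE", 2023, "10.0000/example-a"),
    Paper("Paper B", "Unknown Press", 2024, "10.0000/example-b"),
    Paper("Paper C", "Springer", 2015, "10.0000/example-c"),
]

approved = approve_papers(candidates, ["IEEE", "Springer"], 2020, 2025)
context = build_ai_context(approved)
```

In this sketch, "Paper B" is dropped because its publisher was not approved and "Paper C" is dropped because it falls outside the year range, so only "Paper A" ever reaches the AI step.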

It's free to try: 2 queries/month, 50 papers per query. No credit card needed.

I'd genuinely love your feedback. What would make this more useful? What's missing?

🔗 https://hallucinationbuster.com

Finally, a tool that offers source control! I have been looking for an alternative to Elicit’s automated pull for a while and HallucinationBuster hits the mark. What sparked the decision to give the user more oversight at the start of the funnel?

@loan_dinh Great question! Recently, during the literature review for my own research, I kept running into the same problem: AI tools would pull papers from everywhere without letting me control source quality. In academia, where a paper is published matters as much as what it says. Different fields trust different publishers, and I wanted that decision to stay with the researcher, not the algorithm. So I designed the pipeline to let users filter by publisher and year before AI processing begins. This way the AI only works with sources you've already vetted.

I work in legal research, and verifying citations is one of the most tedious aspects of preparing reports. The publisher-filtering feature is very useful, since different fields rely on different trusted publishers. Does your system also allow filtering by specific journals, or only by publisher?