Launching today

HeyNews

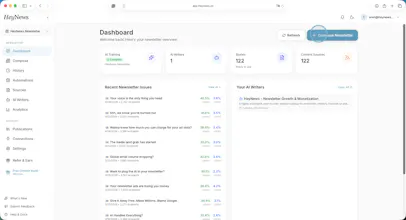

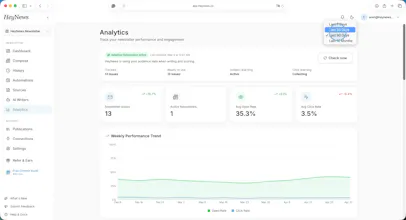

Your newsletter, your voice, ready to publish in 5 minutes

86 followers

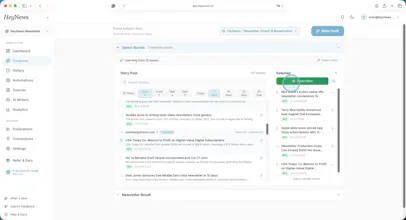

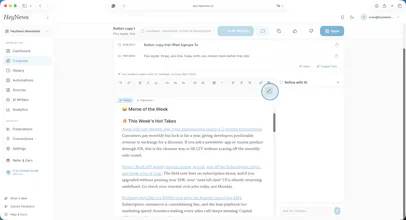

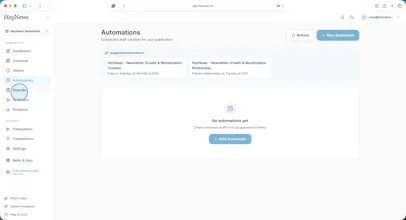

HeyNews learns from your newsletter archive, monitors your sources, and generates publish-ready drafts in your voice. Native Beehiiv & Kit; archive imports from Substack or any newsletter with a public archive. 14-day trial, plans from $99/mo. PH50 → 50% off 12 months.

HeyNews

Thrilled to see HeyNews live! To answer Cagri's question from a content marketing perspective: the most 'broken' part of current workflows is usually the friction between research and writing. You often lose your 'voice' while juggling 20 different tabs.

I’m particularly excited about how the platform avoids AI 'hallucinations' by sticking to trusted RSS and social feeds. It’s great to see a tool that keeps the editor in full control!

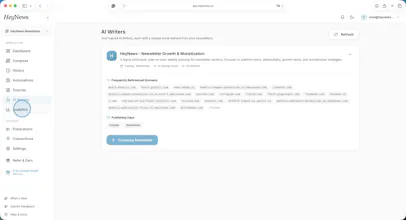

@cagrisarigoz Voice training actually solving the "sounds like AI" problem is the most interesting part here. Every other tool gets the curation right but fumbles the draft. Curious how it handles niche technical newsletters where the tone is very specific — does it pick up on jargon and formatting quirks too?

HeyNews

@vishal7017 Yes, both. The AI Writers are trained on whatever's in the archive, so jargon and formatting quirks come through with it. If your past issues use a specific term for something, always open with a one-line summary, or have a particular way of citing sources, the AI Writers learn that as part of the format. Nothing needs to be flagged or tagged; it just shows up in the training data.

Niche technical newsletters are actually one of the cases where the per-format AI Writer setup helps most, because the editorial taste (what counts as a relevant story for this audience) is usually more specific than for general-interest newsletters. The scoring side benefits more there than the voice side, in our experience.

The best way to see is to connect your newsletter and generate one draft. The voice match either reads as yours or it doesn't, and a draft is faster to judge than a description.

This is a good one. The voice training angle is what separates this from the generic tools. Training on actual past issues lets it pick up jargon, quirks, and sentence patterns. Looks like the right approach! To stress-test: how does it handle newsletters that deliberately vary their tone issue-to-issue? Does the model average out your style?

HeyNews

@artstavenka1 Real edge case. The per-format AI Writer setup handles part of it: if the variation is structural (Monday deep-dive vs Friday Q&A), each format gets its own Writer trained only on matching issues, and they stay separate. No averaging across them.

Where it actually struggles is intentional variation within the same format. Same newsletter, same template, but the operator deliberately shifts the tone of the issue. In that case, the AI Writer does converge on the central tendency. The workaround that works in practice is splitting systematic variation into separate formats so each gets its own AI Writer, but for mood-driven variation that isn't easily labeled, it stays a real limitation.

One related point worth flagging: what gets averaged is tone, not topic. Source scoring is a separate system, so the mix of sources brought into any specific draft still shifts the result.

That partly compensates for the averaging on the voice side, but it's a manual lever rather than something the model resolves on its own.

Curious about the voice training minimum. How many past issues does the model need before the output actually sounds like you and not generic AI? Most tools I've tested fall apart under ~20 examples.

@romain_delgado Our trials have shown that voice matching works even with 3 past issues, but for a sustainable and balanced outcome, we recommend using at least 5 issues.

HeyNews

@romain_delgado Great question. ~5 issues from a single format is the practical floor for us.

That's lower than the 20-example wall you've hit on other tools because of the architecture: we train a separate AI Writer per format rather than one Writer per publication, so each AI Writer only has to learn one cadence (Monday deep-dive vs Friday roundup, say) instead of disambiguating multiple cadences from one shared pool.

The reason most tools fall apart under 20 is usually that they're trying to learn the operator's voice across mixed-format examples. Five issues of "Monday deep-dive" together are more useful for that format than fifty issues of "everything you've ever shipped." The signal isn't diluted by formats with different rhythms.

More is better. There's a noticeable gain going from 5 to 10. But the marginal return flattens fast. Operators we've onboarded with 20-30 issues per format don't get a clearly better AI Writer than those onboarded with 6-8. What helps more after the floor is variety within the format (a mix of strong and average issues, not just the greatest hits), so the Writer sees what "normal for you" looks like, not just "best of you."

The catch: it's per format, not total. An operator with 100 issues across 5 wildly different formats is in worse shape on day one than an operator with 25 issues across a single format.
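To make the per-format arithmetic concrete, here is a minimal Python sketch. It is illustrative only and not HeyNews code: the archive shape, the `format` field, the format labels, and both function names are assumptions made up for this example. The only number taken from the thread is the ~5-issue practical floor.

```python
from collections import Counter

# Practical floor quoted in the reply above: ~5 issues from a single format.
PRACTICAL_FLOOR = 5


def issues_per_format(archive):
    """Count archived issues per format label (hypothetical 'format' field)."""
    return Counter(issue["format"] for issue in archive)


def formats_below_floor(archive, floor=PRACTICAL_FLOOR):
    """Return the format labels that have fewer issues than the floor."""
    counts = issues_per_format(archive)
    return sorted(fmt for fmt, n in counts.items() if n < floor)


# Hypothetical operator A: 100 issues spread evenly across 5 formats.
formats = ["deep-dive", "roundup", "q-and-a", "interview", "links"]
mixed_archive = [{"format": formats[i % 5]} for i in range(100)]

# Hypothetical operator B: 25 issues, all in one format.
single_archive = [{"format": "deep-dive"} for _ in range(25)]

# What matters is the count inside each format, not the archive total.
mixed_counts = issues_per_format(mixed_archive)
single_counts = issues_per_format(single_archive)
```

In this made-up split, operator A has 20 issues available to each format-specific Writer while operator B has 25 for the single one, even though A's archive is four times larger in total. `formats_below_floor` would flag any format that has not yet reached the floor.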

The "learns from your archive" part is clever. I've seen so many newsletter tools that generate generic content — having it adapt to your voice is what makes it usable long-term.

I'm curious about the learning curve. How many past issues does it need before the suggestions start feeling like "you"? I run a bilingual blog (Spanish/English) for a YouTube creator tools platform and voice consistency across languages has been my biggest challenge.

@ytubviral Thanks! As my co-founder @cagrisarigoz mentioned in one of his previous replies, 5 issues per format is the minimum we need to make it sound like you. But more is definitely better, so we use as many issues as possible.

You have an interesting challenge on your hands. I think we can manage to ensure voice consistency across languages, but the problem is that nobody on our team speaks Spanish, so it would be really challenging for us to test cross-language voice fidelity in this case.

The "in my voice" vs. "more human" piece of the prompt is so key, especially as we see more and more second and third human AI layers like Sinceerly and others start to pop up. Now it just needs the originality that the author brings to the table to be fully autonomous.

@joe_setpoint Indeed! This was our starting point as well. The exact question was: should we create more "artificial" stuff when we can create unique content in the relevant author's tone and style? The answer was a resounding no, and here we are. 😌

Concentrating a full day's labor into about 5 minutes while keeping the operator's voice intact for that price is something! Reach out to mete '' at '' heybe . ai for partnership opportunities with a great product like HeyNews!