Bench for Claude Code

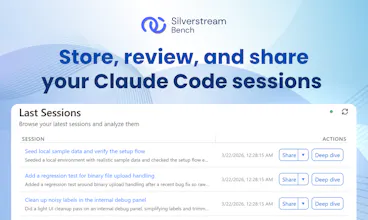

Store, review, and share your Claude Code sessions

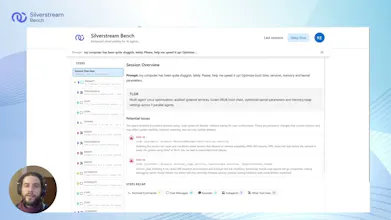

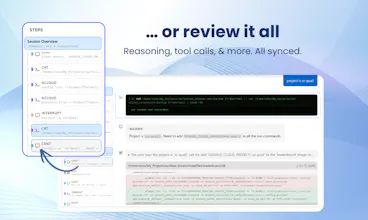

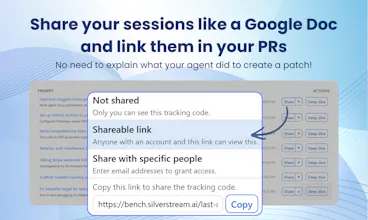

Claude Code just opened a PR. But do you really know what it did? With Bench, you can automatically store every session and easily find out what happened. Spot issues at a glance, dig into every tool call and file change, and share the full context with others through a single link, with no extra explanation needed. When things go right, embed the history in your PRs. When things go wrong, send the link to a colleague and ask for help. Free, no limits. Set it up on Mac and Linux with a single prompt.

Bench for Claude Code

Hey Product Hunt! 👋

I’m Manuel, co-founder of Silverstream AI. Since 2018, I’ve been working on AI agents across Google, Meta, and Mila. Now I’m building Bench for Claude Code with a small team.

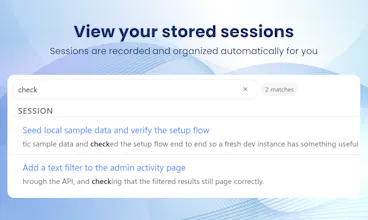

If you use Claude Code a lot and want to store, review, or share its sessions, this tool is for you. Once connected, Bench automatically records and organizes your sessions, letting you inspect and debug them on your own or share them with your team to improve your workflows.

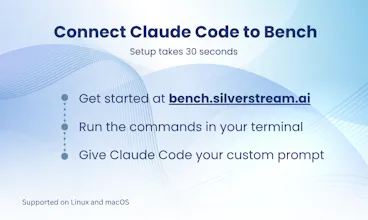

Getting started is simple:

• Go to bench.silverstream.ai and set it up in under a minute on Mac or Linux

• Keep using Claude Code as usual

• Open Bench when you need to understand or share a session

That’s it.

Bench is completely free. We built it for ourselves and now want as many developers as possible to try it and shape it with us.

We’ll be here all day reading and replying to feedback (without using Claude 😂). Would love to hear what you think!

Btw, support for more agents is coming soon, so stay tuned!

@manuel_del_verme Many congratulations on the launch, Manuel and team! :)

This brings much-needed visibility into Claude Code sessions, especially for debugging and collaboration in async teams. Do you also plan to add deeper analytics or insights (like patterns across sessions or common failure points) to help developers improve their workflows over time?

Bench for Claude Code

@manuel_del_verme @rohanrecommends Hey! A warm thank you from the whole team! :)

We already provide a few basic but effective ways to speed up session analysis, namely key insights and recaps, and our next goal is to keep iterating on them so you can analyze your sessions more easily and quickly. So yes, we are definitely going to push further in that direction.

Spotting cross-session patterns, however, is something we hadn't considered yet, and it's a really intriguing idea! We'll definitely look into it. After all, that's the goal of this launch: to get as many people as possible testing Bench and to find out how we can improve it! :)

Thank you!

@manuel_del_verme congrats on the launch. The subagent visibility part is what caught my attention. I run sessions that spawn 3-4 levels of subagents and half the time I don't even know one of them went off and rewrote a config file until something breaks later. Being able to trace back to which subagent did what, and why, would save me a lot of "git diff, wait what changed" moments. Does the timeline view let you jump straight to a specific subagent's actions, or do you still need to scroll through the whole session?

Bench for Claude Code

@manuel_del_verme @alan_silverstreams Hey! :) Thank you from the whole team! I totally share your frustration: subagent logs in particular are somewhat "hidden" from the Claude Code output, so it's really hard to understand what is going on there, except when Claude asks for a specific permission. The short answer is yes: that's exactly why we built a collapsible tree of steps, whose hierarchy exists precisely to separate the various subagent runs.

So, through Bench, you have a few options:

• Use the "recap" interface to find the exact subagent run you are interested in, click on it, and skim through what it did

• Use the tree navigator in the sidebar to identify who did what, and dig deeper only into the substeps you really care about

• Or, sometimes you don't really care who did what and would rather see a list of all the bash commands that ran, so you can review the full set of "side effects" and spot the most delicate ones before you even start scrolling through the steps list

Bench already supports all these flows quite intuitively (or at least, I hope so!), so I really encourage you to give it a try: if you don't want to share real data, you can just analyze a simple session where you explicitly ask Claude Code to spin up subagents, and see how easily you can pinpoint the details! :)

Please note, though, that the hierarchical tree visualization is currently a bit "limited" in that sense: we do sort everything chronologically, but subagents "talk" to each other and perform operations in parallel and out of order, which we cannot show accurately while grouping steps by the subagent that owns them. The tool-use recaps, however, are sorted chronologically, and we are working on a separate timeline view that shows what happened in strict chronological order as well, so we'll get there soon! Stay tuned :)

How deep does it go when tracking tool calls and file changes across a session?

Bench for Claude Code

@hamza_afzal_butt As deep as possible :) The whole goal of Bench is to trace as many details as possible about every action the agent performs, and then let you find the details you're looking for quickly and easily! The only limit is what Claude Code allows us to extract, which is quite a lot anyway! For tool calls, we extract all the details about the command used to launch the tool and the "origin" of that call, whether it's the conversation that led the agent there or a subagent run with a specific goal.

File changes work basically the same way: we show the delta, but also why and when the agent decided to apply that specific change.

Raycast

Why aren't your Claude Code sessions as easy and convenient to share as Google Docs?

Well now they can be, thanks to Silverstream's Bench!

This free tool gives you insight into your agent runs so you can see where things went off the rails, and then share a link with your colleagues so they can help you track down and fix the issue.

This is a simple, useful tool that you can start using now to get a handle on your agents' performance.

Bababot

I’m curious how detailed the tracking is. If I can really see every tool call and file change clearly, I can imagine using this for debugging more than anything else.

Bench for Claude Code

@aarav_pittman It's as detailed as Claude Code allows, which is quite a lot :) Of course we capture everything about tool calls and file changes, but also about subagent runs and the steps that are sometimes hidden from Claude's terminal output. And yes, debugging was our first reason to build Bench: we needed a development tool to fine-tune the prompts for our automated tasks and make them more reliable.

Once the tool was there, we realized it had lots of other uses: storing the whole conversation that led to a feature being developed a certain way, and sharing it with colleagues, turned out to be very useful too. So we had to pick which aspect to focus on most for this launch, but yeah, debugging is definitely another great way to use Bench! :D

Congrats on the launch!

Quick question, any plans to add Windows support? Would love to use this but currently stuck on Windows 🙂

Bench for Claude Code

@julia_zakharova2 Hey Julia! Windows support is in our top-3 feature list, so it's coming soon! You can subscribe for updates on bench.silverstream.ai to get notified when it lands :)

Premarket Bell

How granular is the session tracking? Can you trace decisions step-by-step, or is it more of a high-level overview?

Bench for Claude Code

@daniel_henry4 We have both! And, personally, that's what I love about Bench. You can quickly get an overview of what the agent did and which tools it used during the session. Then you can also open a single step and see what happened there and what Claude saw. What are you looking for in your use case?

Bench for Claude Code

@daniel_henry4 The goal of the tool is to give you every specific detail of the whole process: you can follow all the actions, subagent calls, and decisions taken during a session, so we try to store data in as much detail as possible.

Of course, this quickly becomes a lot to manage, especially in longer sessions: imagine having to troubleshoot a session of 200 steps or more! For this reason we also provide a set of tools that let you skim through the steps and highlight the ones you really care about. Some are very simple, such as grouping steps by action type, while others are more refined, such as flagging potentially concerning commands with warnings. This is also the area we'll focus on most going forward: providing as much detail as possible while keeping session analysis as quick as possible!