Parallax by Gradient

Host LLMs across devices sharing GPU to make your AI go brrr

827 followers

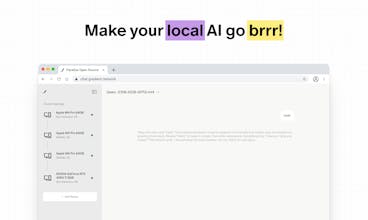

Your local AI just leveled up to multiplayer. Parallax is the easiest way to build your own AI cluster to run the best large language models across devices, no matter their specs or location.

GraphBit

Really love what you’re building here. Parallax tackles distributed inference beautifully. We’re working on GraphBit, which focuses on the orchestration layer: making AI agents run reliably and concurrently once those models are deployed. Feels like what you’re building (how models run) and what we’re solving (how intelligence executes) could complement each other perfectly. Would love to explore a possible collaboration! My email: musa.molla@graphbit.ai

Parallax by Gradient

@musa_molla Thanks for your kind words! Maybe we’ll have a chance to collaborate in the future.

YouMind

Really exciting idea: turning idle devices into a distributed inference cluster feels practical and privacy-friendly.

Quick question: how do you handle latency and bandwidth variability across WAN peers to keep inference smooth for real-time apps? Would love clarity on any built-in QoS or fallback strategies.

Looks pretty great! I wonder if it's possible to add more models, as it seems like there are only two available right now.

Parallax by Gradient

@aemondsensei We have many available models if you host your own AI cluster! The two you see are part of a frontend chatbot interface that we built to showcase how Parallax works. You should definitely try hosting your own cluster with Parallax!

Parallax makes it easy to build your own AI cluster and run top-tier LLMs across any device, regardless of specs or location. Scalable intelligence, now in your hands.

Is there a cost to self-host this and use the models?

Parallax by Gradient

@mike_rod1 Completely free!

Is there any latency overhead when coordinating inference across heterogeneous hardware?

Parallax by Gradient

@damon98 It depends on your internet connection; there may be some latency overhead. You could also connect your devices physically (e.g., Macs over Thunderbolt), which also works with Parallax!

RewriteBar

This is cool! Is it also possible to connect the GPU to the public network?