Ollama Explorer

Find the right local AI model for your hardware instantly

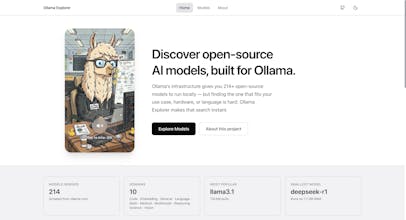

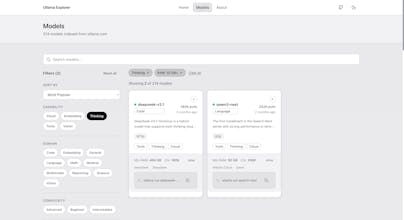

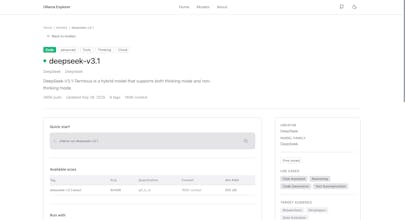

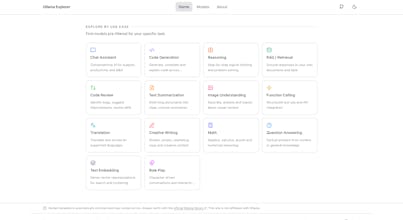

Ollama has 200+ open-source AI models — but finding the right one is painful. Ollama Explorer fixes that. Filter by use case (coding, reasoning, RAG, vision), domain, RAM requirement, parameter size, and context length. Each model shows hardware needs, pull counts, and recommended tasks. No signup. No ads. Just a fast, open-source tool to find the model that fits your machine.
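To make the filtering idea concrete, here is a minimal sketch of how a catalog could be narrowed by RAM, use case, context length, and parameter size. It is not taken from the Ollama Explorer codebase: the `ModelEntry` fields, the `find_models` helper, and the three catalog entries are all hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEntry:
    # All fields are hypothetical; the real Ollama Explorer schema may differ.
    name: str
    params_b: float            # parameter count, in billions
    min_ram_gb: float          # approximate RAM needed to run the model
    context_length: int        # maximum context window, in tokens
    use_cases: frozenset       # e.g. {"coding", "rag"}


# Placeholder entries for illustration only, not real models or measurements.
CATALOG = [
    ModelEntry("example-coder-7b", 7, 8, 32_768, frozenset({"coding"})),
    ModelEntry("example-vision-11b", 11, 16, 131_072, frozenset({"vision", "reasoning"})),
    ModelEntry("example-tiny-1b", 1, 2, 8_192, frozenset({"rag", "coding"})),
]


def find_models(catalog, max_ram_gb=None, use_case=None,
                min_context=None, max_params_b=None):
    """Return entries matching every filter that was supplied, largest first."""
    matches = [
        m for m in catalog
        if (max_ram_gb is None or m.min_ram_gb <= max_ram_gb)
        and (use_case is None or use_case in m.use_cases)
        and (min_context is None or m.context_length >= min_context)
        and (max_params_b is None or m.params_b <= max_params_b)
    ]
    # Sort so the biggest model that still fits the constraints comes first.
    return sorted(matches, key=lambda m: m.params_b, reverse=True)


if __name__ == "__main__":
    # Which coding models fit on a machine with 8 GB of RAM?
    for model in find_models(CATALOG, max_ram_gb=8, use_case="coding"):
        print(model.name)
```

Leaving a filter as `None` skips it, so the same function covers both a single-filter search and a combined one. Sorting by parameter count is just one plausible default; pull counts or context length would work equally well as a sort key.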

Hey Product Hunt! 👋

I built Ollama Explorer because I kept wasting time trying to figure out which Ollama model would actually run on my machine — and which one was best for what I needed.

Ollama's library page is great, but it's just a list. No filtering by RAM, no use-case discovery, no way to compare models side by side.

So I built the browser I wished existed. 214+ models, filterable by RAM, domain, context length, parameter size, and use case. It's fully open source: github.com/serkan-uslu/ollama-explorer

Would love your feedback — especially if you're an Ollama user. What filters or features would make this more useful for you?

Oh, and when you first land on the site — Olli will greet you. He's a llama with opinions about your model choices. Don't say I didn't warn you. 🦙

I'm just making my first foray into local models and Ollama. This should be a big help in figuring out which is the biggest and best model I can use!