Launched this week

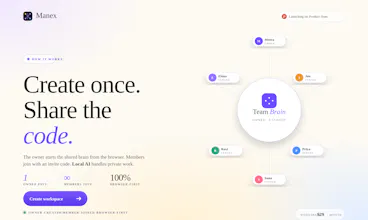

Manex

Preserve useful answers, corrections, and context as memory

126 followers

Manex is a private AI memory for documents and team knowledge. Upload files, ask grounded questions, and preserve useful answers, corrections, and context as memory. It runs locally where supported, keeps data private by default, and lets teams create a shared brain without per-seat pricing.

This nails a real pain point. When I was CTO scaling from 15 to 120 engineers, the biggest knowledge drain wasn't people leaving - it was context dying between conversations. Someone would figure out why a system was designed a certain way, explain it in Slack, and three months later the next person would make the same mistake because that context was buried. The private-by-default approach is smart too. Most teams I've worked with won't adopt knowledge tools if there's any friction around what gets shared externally vs kept internal. How does the correction preservation work in practice - can you override an earlier answer if circumstances change?

Manex

@avrisimon Absolutely, that “context dying between conversations” is exactly the problem we’re trying to solve.

In Manex, we treat a correction differently from a normal answer. A normal answer is just a response grounded in the available documents. A correction is treated as a higher-signal memory because it usually represents domain judgment: “this interpretation is wrong,” “use the newer policy,” “this client exception matters,” or “that old decision no longer applies.”

In practice, yes, you can override earlier context. The goal is not to pretend memory is permanent truth. Teams change their minds, policies get superseded, and project assumptions expire. So the memory layer needs to preserve the history while making the latest accepted correction the one that influences future answers.

That is the workflow we’re building toward: documents provide evidence, conversations create interpretations, and corrections keep the team’s understanding current.
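To make the override behavior concrete, here is a minimal sketch of a memory store in which a correction supersedes earlier memories on the same topic while the full history stays available. This is not Manex's implementation; the `Memory` and `MemoryStore` names, the `topic` key, and the `superseded_by` field are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Memory:
    topic: str
    text: str
    kind: str  # "answer" or "correction"
    superseded_by: Optional[int] = None  # index of the entry that replaced this one


@dataclass
class MemoryStore:
    """Hypothetical store: nothing is deleted, entries are only marked superseded."""
    entries: list[Memory] = field(default_factory=list)

    def add_answer(self, topic: str, text: str) -> int:
        self.entries.append(Memory(topic, text, "answer"))
        return len(self.entries) - 1

    def add_correction(self, topic: str, text: str) -> int:
        new_index = len(self.entries)
        # Mark every still-active memory on this topic as superseded, but keep it.
        for entry in self.entries:
            if entry.topic == topic and entry.superseded_by is None:
                entry.superseded_by = new_index
        self.entries.append(Memory(topic, text, "correction"))
        return new_index

    def current(self, topic: str) -> Optional[Memory]:
        # Prefer the latest active correction; fall back to the latest active answer.
        active = [e for e in self.entries
                  if e.topic == topic and e.superseded_by is None]
        corrections = [e for e in active if e.kind == "correction"]
        pool = corrections or active
        return pool[-1] if pool else None

    def history(self, topic: str) -> list[Memory]:
        return [e for e in self.entries if e.topic == topic]


store = MemoryStore()
store.add_answer("refund-policy", "Refunds are allowed within 30 days.")
store.add_correction("refund-policy", "Use the newer policy: refunds within 14 days.")
store.add_correction("refund-policy", "Enterprise clients keep the 30-day window.")

print(store.current("refund-policy").text)   # latest accepted correction
print(len(store.history("refund-policy")))   # all three entries are still stored
```

The design choice illustrated here is that an override never erases anything: older entries only gain a pointer to whatever replaced them, so the latest correction drives future answers while the history stays auditable.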

The 'preserve corrections as memory' angle is the part most knowledge tools miss — the value isn't the original answer, it's the corrected one after a domain expert pushed back. I run into this constantly when teaching financial modeling (I have an Excel for Financial Modelling course on Udemy: https://www.udemy.com/course/exc...), where 80% of the value of a senior modeler's review is in the corrections, not the original draft. Most courses and team wikis throw that layer away. Curious whether Manex distinguishes between an answer and a correction in its memory layer, or treats them as equally weighted snippets?

Manex

@samir_asadov Yes, exactly. That distinction is one of the main things we’re trying to preserve. In Manex, the original answer and the correction are not meant to be treated as equal raw snippets. The correction becomes a stronger memory signal because it represents expert judgment applied to the source material. The document gives the base evidence, the AI answer gives an interpretation, and the correction captures the domain-specific rule or decision that should influence future answers. We are still refining the weighting and propagation, but the product direction is very much correction-aware memory rather than just storing chat history.
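One way to picture "not equal raw snippets" is a ranking step that multiplies each candidate's relevance by a weight that depends on its kind. The sketch below is only an illustration under assumed numbers: the reply says the weighting is still being refined, so `KIND_WEIGHT`, `Candidate`, and `rank` are hypothetical names and values, not Manex's actual scheme.

```python
from dataclasses import dataclass

# Hypothetical weights. The thread only says a correction is a "stronger memory signal".
KIND_WEIGHT = {"document": 1.0, "answer": 0.8, "correction": 1.5}


@dataclass(frozen=True)
class Candidate:
    text: str
    kind: str          # "document", "answer", or "correction"
    similarity: float  # relevance to the query in [0, 1], from any retriever


def rank(candidates: list[Candidate]) -> list[Candidate]:
    """Order candidates by similarity scaled by the weight of their kind."""
    return sorted(
        candidates,
        key=lambda c: c.similarity * KIND_WEIGHT[c.kind],
        reverse=True,
    )


candidates = [
    Candidate("Policy doc: refunds within 30 days.", "document", 0.80),
    Candidate("AI answer: refunds are allowed within 30 days.", "answer", 0.85),
    Candidate("Correction: use the newer 14-day policy.", "correction", 0.60),
]
ranked = rank(candidates)
for c in ranked:
    print(c.kind, "-", c.text)
```

With these assumed weights, the correction ranks first even though its raw similarity is the lowest, which is the behavior the reply describes: expert judgment outweighs the original interpretation.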

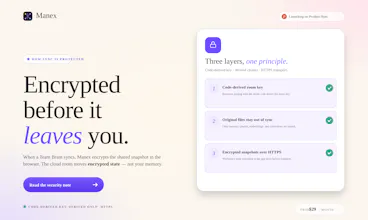

"Local / in-browser" is the part that matters most for files I can't upload to a third party. How big can the input get before the browser chokes — and is there a desktop or CLI version planned for the cases where the browser tab isn't the right surface?

Manex

@sounak_bhattacharya That is exactly the tradeoff. The browser is great for privacy and zero-install testing, but it does have practical limits. Right now Manex works best with small to medium document sets, and performance depends heavily on the device/browser because OCR, chunking, embeddings, and local inference are happening client-side. For larger document libraries, we are thinking in terms of progressive indexing, resumable ingestion, and more explicit “this is still processing” states rather than pretending the browser has infinite capacity. Having said that, I have tried ingesting textbooks, and it worked without breaking. You might see a "Browser busy, wait or kill" prompt once in a while. Thanks.

How large of a context can the service handle?

Manex

@natalia_iankovych Right now Manex is designed more around document libraries than one giant prompt. The documents are extracted, OCR’d if needed, chunked, embedded locally, and retrieved into context only when relevant. So the user can work across many documents without sending the whole library into the model at once.
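The chunk, embed, and retrieve steps described above can be sketched in a few lines. This is a toy version under stated assumptions: extraction and OCR are skipped, the "embedding" is a plain word-count vector standing in for a real local embedding model, and every function name (`chunk`, `embed`, `build_index`, `retrieve`) is hypothetical rather than part of Manex.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int = 40) -> list[str]:
    """Split text into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a stand-in for a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def build_index(documents: dict[str, str], size: int = 40) -> list[tuple[str, str, Counter]]:
    """Chunk every document and store (document name, chunk text, embedding)."""
    index = []
    for name, text in documents.items():
        for piece in chunk(text, size):
            index.append((name, piece, embed(piece)))
    return index


def retrieve(index: list[tuple[str, str, Counter]], query: str, k: int = 2) -> list[tuple[str, str]]:
    """Return the k chunks most similar to the query; only these reach the model."""
    q = embed(query)
    scored = sorted(index, key=lambda item: cosine(q, item[2]), reverse=True)
    return [(name, piece) for name, piece, _ in scored[:k]]


documents = {
    "handbook.txt": "Employees accrue vacation days monthly. Unused vacation days expire in March.",
    "security.txt": "Rotate API keys every ninety days. Store secrets in the vault, never in chat.",
}
index = build_index(documents, size=6)
top = retrieve(index, "when do vacation days expire", k=1)
print(top)
```

Only the top-ranked chunk is passed on as context, so the size of the library affects indexing time rather than prompt length.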

Does it work more as a personal RAG?

Manex

@nitin_k_shorey It started off as a RAG architecture, but we took it further and introduced a memory layer that supports short-term memory, long-term memory, and graph retrieval when serving answers. Thanks.