Launching today

Glass

Continuous Improvement for your AI Agent

64 followers

Glass turns AI anomalies into automated improvements through an infinite feedback loop that detects misbehaviors and feeds them into continuous evaluations to refine your agent.

Hey! Alex building Glass here.

I've built LLM solutions and AI agents for the last 3 years, and every single project, even across very different markets, had the same problem: crazy issues I'd never have imagined during development would show up in prod once real users got involved, so I was constantly going back and forth between monitoring and evals.

With the team at Glass, we're now fixing this problem. Glass catches your AI anomalies in production and automatically turns them into evaluations.

We also added Signals on platforms other than Slack, because we never understood why existing tools only support Slack.

Open to all feedback!

@alex_glass7 This resonates a lot.

One pattern that seems to be emerging with agent systems is that building the agent itself is often the easy part. The real difficulty starts once the agent is running in production and interacting with unpredictable users.

Traditional observability tells you what happened. But with agents the harder question becomes whether the behavior was actually correct.

Turning production anomalies directly into evaluations is interesting because it starts to close that loop automatically.

Curious how you think about Glass internally.

Do you see it mainly as an observability layer for agents, or closer to reliability infrastructure that continuously improves agent behavior over time?

@cauan_martins

Hey Cauan, thanks for the thoughtful question!

You nailed it — building the agent is the easy part. The real challenge starts when real users do things you never anticipated.

To answer directly: we see Glass as something that improves agent behavior right away, not just an observability layer you stare at.

The way we think about it: observability alone gives you dashboards and logs, but then what? You still need to manually figure out what went wrong, write new test cases, and hope you covered the edge case. That loop is slow and painful — I've lived it on every single AI project I've shipped over the last 3 years.

Glass closes that loop automatically. When we catch an anomaly in production, we don't just flag it — we turn it into an evaluation that feeds back into your development cycle. So your agent gets better from every weird interaction it has with real users, not just from the scenarios you imagined at your desk.

Think of it less as "monitoring that tells you something broke" and more as "a system that makes sure the same thing doesn't break twice." The observability piece is there, but it's a means to an end — the end being agents that continuously get more reliable.
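To make the "doesn't break twice" idea concrete, here is a minimal sketch of turning one flagged production interaction into a regression check. This is not Glass's API or schema: `Anomaly`, `EvalCase`, `anomaly_to_eval`, `run_evals`, and the refund example are all hypothetical names and data made up for illustration, and the substring check stands in for whatever grading a real evaluation would use.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Anomaly:
    """A flagged production interaction (hypothetical shape, not Glass's schema)."""
    user_input: str
    bad_output: str
    forbidden_phrase: str  # text whose presence in an output marks the failure


@dataclass
class EvalCase:
    """A regression check derived from one anomaly."""
    prompt: str
    must_not_contain: str


def anomaly_to_eval(anomaly: Anomaly) -> EvalCase:
    # Replay the same user input and require that the failure marker is gone.
    return EvalCase(prompt=anomaly.user_input,
                    must_not_contain=anomaly.forbidden_phrase)


def run_evals(agent: Callable[[str], str], cases: List[EvalCase]) -> List[bool]:
    # One boolean per case; True means the agent passed that case.
    return [
        case.must_not_contain.lower() not in agent(case.prompt).lower()
        for case in cases
    ]


# Illustrative data only.
anomaly = Anomaly(
    user_input="Can I get a refund after 90 days?",
    bad_output="Yes, refunds are always guaranteed.",
    forbidden_phrase="always guaranteed",
)
case = anomaly_to_eval(anomaly)


def old_agent(prompt: str) -> str:
    return "Yes, refunds are always guaranteed."


def fixed_agent(prompt: str) -> str:
    return "Refunds are available within 30 days of purchase."


print(run_evals(old_agent, [case]))    # [False]
print(run_evals(fixed_agent, [case]))  # [True]
```

The point of the sketch is only the shape of the loop: each anomaly becomes a permanent test case, so a fix is verified against the exact interaction that failed.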

Happy to chat more about it if you're curious!

Glass

Hey PH! 👋

Glass started from a frustration we kept running into while building AI agents. At first everything looked fine: we had logs, traces, dashboards, metrics. The system was “observable.”

But whenever something went wrong, we still had to manually dig through thousands of traces trying to understand things like:

Why did the agent hallucinate here?

Why is it so confidently wrong?

Is this a new issue or something that’s been happening for weeks?

Traditional observability tells you what happened. With AI systems, the real question is whether the behavior was correct. So we built Glass. Glass is the full flywheel for AI agents. Instead of just collecting traces, it analyzes them and helps you understand where and why your agent failed.

With Glass you can:

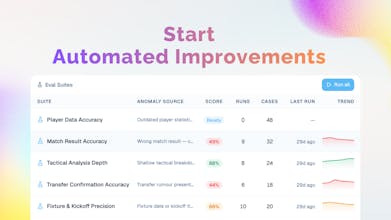

Have all your anomalies detected in real time: behavioral drifts, hallucinations, loops, jailbreaks, etc.

Get insights on how your users are actually interacting with your agents

Receive daily digests to help you iterate and fix fast!

Turn all this knowledge into autonomous evaluations to constantly refine your product

The reality of AI today is that building the agent is the easy part. The hard part is making sure it behaves correctly in production.

Glass is built to help with exactly that.

Excited to get your feedback!!

It's finally out!

You can use Glass to fix your AI agents and ensure seamless, high-quality client interactions.