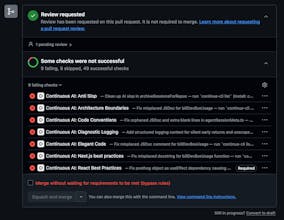

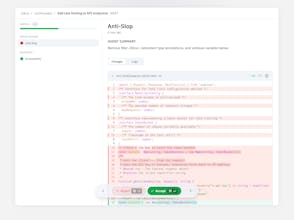

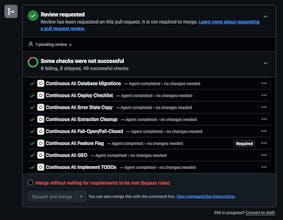

AI agents write most of your code now. But who's checking it? Continue is quality control for your software factory: source-controlled AI checks that run on every GitHub pull request. Each check is a markdown file in your repo that runs as a full AI agent, flags only what you tell it to catch, and suggests one-click fixes. Your standards, version-controlled and enforced automatically. No vendor black box. Just consistent, reliable quality at whatever speed your team ships.