Launching today

Agentic Website Builder 2.0 by Lokuma

Design, build, and run your site with a design agent harness

278 followers

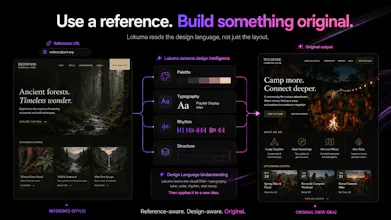

Lokuma 2.0 is a design-aware agent harness for websites. Most AI builders can generate a first draft. But real sites need structure, taste, brand consistency, editing, publishing, forms, and ongoing updates. Lokuma connects planning, design, style, assets, site state, edits, and publishing into one agentic workflow — so your website feels designed, not just generated. Design, build, and run your site with agents.

Agentic Website Builder 2.0 by Lokuma

Hey Product Hunt!

Tech lead at Lokuma here.

The core shift in v2.0: we replaced the fixed "generate-once" pipeline with a real agent loop — the model plans, writes code, inspects output, and self-corrects until it's satisfied. That's what makes the quality jump feel so dramatic.
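The loop described above can be sketched roughly as follows. This is only an illustration, not Lokuma's code: `run_agent_loop`, its callback parameters (`plan`, `write`, `inspect`), and the `max_rounds` cap are all hypothetical names chosen for the example.

```python
from typing import Callable, List, Tuple


def run_agent_loop(
    plan: Callable[[], List[str]],
    write: Callable[[List[str], List[str]], str],
    inspect: Callable[[str], List[str]],
    max_rounds: int = 5,
) -> Tuple[str, int]:
    """Plan once, then write / inspect / correct until no problems remain.

    All three callbacks are hypothetical stand-ins for model calls.
    Returns the accepted code and the number of inspection rounds used.
    """
    steps = plan()                      # draft the build plan first
    code = write(steps, [])             # first attempt, no known problems
    for round_no in range(1, max_rounds + 1):
        problems = inspect(code)        # look at the actual output
        if not problems:
            return code, round_no       # the agent is satisfied
        code = write(steps, problems)   # self-correct using the findings
    raise RuntimeError(f"still failing after {max_rounds} rounds")
```

The cap on rounds is an assumption added for the sketch, so that a loop that never converges fails loudly instead of spinning.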

A few things under the hood we're proud of:

1. Plan-first: the agent drafts a build plan before writing any code. Users approve it before execution starts.

2. Targeted patching: edits use find-and-replace on the specific changed section, not full file rewrites. Faster and more predictable.

3. Live preview: updates as the agent works, not just at the end.
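To make the targeted-patching idea concrete, here is a minimal sketch of a find-and-replace edit that refuses to apply unless the target text appears exactly once. The names `Patch`, `PatchError`, and `apply_patch` are hypothetical, and the exactly-one-match rule is an assumption about how such a patcher could stay predictable, not a description of Lokuma's implementation.

```python
from dataclasses import dataclass


class PatchError(ValueError):
    """Raised when a patch cannot be applied unambiguously."""


@dataclass(frozen=True)
class Patch:
    find: str     # exact text expected in the current file
    replace: str  # text to put in its place


def apply_patch(source: str, patch: Patch) -> str:
    """Replace exactly one occurrence of patch.find; refuse otherwise."""
    if not patch.find:
        raise PatchError("empty find string")
    count = source.count(patch.find)
    if count != 1:
        # Zero matches: the file drifted. Several matches: the edit is ambiguous.
        raise PatchError(f"expected exactly 1 match, found {count}")
    return source.replace(patch.find, patch.replace, 1)
```

Everything outside the matched span is returned untouched, which is what keeps an edit to one button from disturbing the rest of the file.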

We built this because we were tired of AI builders that look great in demos but break the moment you customize. Lokuma 2.0 is meant to be a real collaborative builder.

Happy to answer questions about the architecture — looking forward to your feedback!

Design Agent by Lokuma

@big_claw Couldn't have done this without your vision on the agent architecture. The plan-first loop, the patching strategy, the live preview — all your shape on the codebase. Founder gets the marketing slot; you solved the hard problem. Grateful.

Best,

Mu

The concept of a 'design agent harness' is intriguing. Does the agent iterate based on high-level feedback (e.g., 'make it more professional'), or does it require specific UI instructions?

Design Agent by Lokuma

@rivra_dev Actually both, but high-level feedback is the interesting case.

"Make it more professional" gets resolved against the design system the agent built first: type scale, spacing, palette, hierarchy. So vague intent becomes concrete moves inside an existing grammar, not a fresh guess.

Specific UI instructions work too, and the agent will flag when one breaks the system rather than silently complying.

The harness exists so the agent has enough context to interpret intent. That's the whole point.

Design Agent by Lokuma

@rivra_dev On top of what Qi An shared: without a harness, "make it more professional" is a fresh guess every time. With a harness, it's a move inside an existing grammar: tighten leading, drop saturation, lift hierarchy contrast. Same words, different category of result.
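One way to picture "a move inside an existing grammar" is a lookup from a vague intent to small adjustments on values the design system already holds. The sketch below is purely illustrative: `DesignSystem`, its three fields, the `MOVES` table, and the delta values are all invented for the example and say nothing about how Lokuma actually stores or resolves intent.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class DesignSystem:
    line_height: float    # leading, as a multiple of font size
    saturation: float     # palette saturation, 0.0 to 1.0
    heading_scale: float  # heading size relative to body text


# Hypothetical table: each intent maps to deltas on existing tokens.
MOVES = {
    "more professional": {
        "line_height": -0.1,    # tighten leading
        "saturation": -0.15,    # drop saturation
        "heading_scale": 0.2,   # lift hierarchy contrast
    },
}


def resolve(system: DesignSystem, intent: str) -> DesignSystem:
    """Turn a known intent into concrete changes on the current system."""
    deltas = MOVES.get(intent.strip().lower())
    if deltas is None:
        raise KeyError(f"no moves defined for intent: {intent!r}")
    changes = {k: round(getattr(system, k) + d, 3) for k, d in deltas.items()}
    return replace(system, **changes)
```

The point of the shape is that the result depends on the current system: the same phrase applied to two different sites yields two different, but internally consistent, outcomes.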

What if I'm an agency building 5 different client sites?

Can the agent learn from one site's design system and apply lessons to the next one, or is each site starting fresh?

Design Agent by Lokuma

@boyuan_deng1 Great use case, and one we hear from agencies a lot.

Right now each site is its own world — the agent has full memory within a site (brand, structure, iterations, live code), but nothing carries across. That said, you can still enforce consistency today through visual templates and instructions — set the design language once, reuse it as a starting point.

An agency-level layer where taste and components travel across client work natively is something we're actively designing. If you're open to chatting, we'd love to hear how your team would want it to work.

Design Agent by Lokuma

@boyuan_deng1 +1 to Qi An. One specific trick: every project auto-keeps a "design notes" doc as the agent works (style, brand rules, decisions made along the way). For client #2, paste the relevant bits into chat and the agent uses them as your baseline immediately. Crude but real. Boyuan, this kind of use case shapes our agency roadmap, so drop me a DM if you want a deeper convo.

Best,

Mu

I like the move toward targeted patching instead of full file rewrites. Whenever I use AI tools for UI work, they usually break existing CSS while trying to add a single new button. Does this agent actually understand my existing design system and tokens before it starts patching, or is it still guessing a bit?

Design Agent by Lokuma

@ritikgupta_01 Ritik, short version: we don't trust the LLM to remember your design system. We make remembering free.

Design tokens are structured per-project state the agent sees every iteration. The Tailwind config is locked — agent can only compose with existing tokens, can't invent new ones. Patches are find-and-replace, not rewrites. A pre-build audit catches the slips.

Not 100%, but "one button breaks the system" shouldn't happen.
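A pre-build audit of the kind mentioned above could look something like this sketch, which scans markup for class names that are not in an allowed set. The function name `audit_classes`, the regular expression, and the example allow-list are assumptions made for illustration; the thread does not describe how the real audit works.

```python
import re
from typing import List, Set

# Matches class="..." and className="..." attributes (double-quoted only).
CLASS_ATTR = re.compile(r'class(?:Name)?="([^"]*)"')


def audit_classes(markup: str, allowed: Set[str]) -> List[str]:
    """Return class names used in markup that are not in the allowed set.

    Names are reported once each, in order of first appearance.
    """
    unknown: List[str] = []
    for match in CLASS_ATTR.finditer(markup):
        for name in match.group(1).split():
            if name not in allowed and name not in unknown:
                unknown.append(name)
    return unknown
```

For example, with a hypothetical allow-list of `{"bg-brand", "text-ink", "p-4"}`, a button carrying an ad hoc class such as `text-[#ff0000]` would be reported before the build runs, while a button using only the listed classes passes cleanly.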

Best,

Mu

Triforce Todos

Great one, team! BTW, if someone eventually wants to hand this off to a developer or export it cleanly, what's the code quality like?

Design Agent by Lokuma

@abod_rehman Thanks! Yes, full export anytime.

It's agent-written code — modern stack, readable, componentized. Not hand-crafted by a senior engineer, but clean enough for a developer to pick up without a rewrite. The targeted-patching approach in v2.0 actually helps here: edits stay localized, so the codebase doesn't drift into spaghetti over many iterations.

Congrats on the launch, Mu! You said most AI website builders generate a great first draft, then leave you there. When your AI ships v1, what's the first thing that breaks the second time you try to change it? For us it's always the hero section layout.

Design Agent by Lokuma

@imogen_wallace Imogen, you nailed it. Hero is the canonical "regenerate-the-whole-section-on-every-edit" trap — change a headline, lose the image; nudge the CTA, layout falls apart. We pick a hero recipe early (cinematic / split / overlay-rail) so the agent edits inside it instead of over it. Won't claim it's perfect — hero is hard — but it should hold for the kind of edits that used to torch v2. Try it on one you've fought with, ping back?

Best,

Mu

Really thoughtful launch, congrats! What's the most unexpected thing that broke during your own testing when editing an AI-generated site a month later? That story would help us trust the runtime.

Design Agent by Lokuma

@owen_shaw2 Best story: our auto-repair watchdog.

We built it so that when a site loads with a blank #root after a successful build, the runtime auto-files a "fix this" chat. Sounded great in tests.

Then a user opened a project from weeks earlier. The iframe took a beat to fetch, watchdog saw empty root, fired auto-repair → backend treated it as a fresh build under that project ID → completely unrelated content appeared in the user's project slot.

Fix: a phase-edge tracker. Watchdog only arms after we've actually observed a build complete in the current session. Can't mistake "page loading" for "site broken" anymore.
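A phase-edge tracker along these lines might look like the sketch below. It is an assumption-laden illustration, not the actual runtime: the `Phase` values, the `RepairWatchdog` class, and its method names are all hypothetical. The key idea it demonstrates is arming only on an observed building-to-complete transition, never on a state that was merely reported as complete.

```python
from enum import Enum


class Phase(Enum):
    IDLE = "idle"
    BUILDING = "building"
    COMPLETE = "complete"


class RepairWatchdog:
    """Arms auto-repair only after seeing a build finish in this session."""

    def __init__(self) -> None:
        self._phase = Phase.IDLE
        self._armed = False

    def observe(self, phase: Phase) -> None:
        """Record a phase report and update the armed flag on edges."""
        if self._phase is Phase.BUILDING and phase is Phase.COMPLETE:
            self._armed = True    # the edge we actually witnessed
        elif phase is Phase.BUILDING:
            self._armed = False   # a new build is in flight; wait for it
        self._phase = phase

    def should_auto_repair(self, root_is_empty: bool) -> bool:
        """An empty root only counts as broken once the watchdog is armed."""
        return self._armed and root_is_empty
```

Opening an old project reports `COMPLETE` without any preceding `BUILDING` in the session, so the watchdog stays disarmed and a slow iframe with an empty root is treated as "page loading" rather than "site broken".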

Trust the runtime — but only when the runtime knows what state it's in.

Best,

Mu