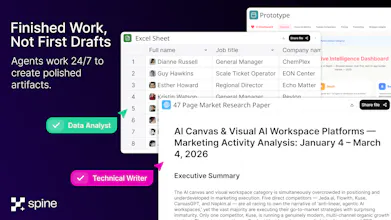

I've been an early tester and user. I really like how it helps me see iterations on an output (e.g., image generation using nano banana), so I can go back, branch from an earlier version, add more metadata or context to a branch, etc. When I come back the next day, it's all there for me.