mvntSTUDIO

Dance generation AI for every song!

95 followers

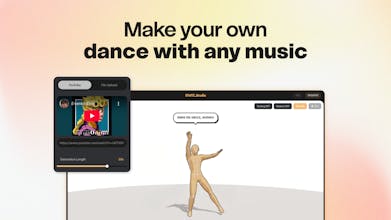

Drop your banger, get the choreo. The vibe dancing era is here! mvntSTUDIO is a dance generation playground that turns any song into production-ready dance animation. From TikTok challenges to K-pop choreographies, visualize your imagination with dance. Built by an Epic MegaGrant recipient team.

mvntSTUDIO

Hi Product Hunt! I'm Joon from MVNT.

We believe everyone deserves dance - so we are building the world's best dance gen model! While the tech is a long-term journey, we just couldn't wait for user validation and feedback 😛

So we built a simple, fast, fun web-based playground where anyone can play around with dance with ease! Here's what's inside v0.1:

- Music to Dance: paste a YouTube link or upload a file to get a dance in under 3 minutes. (Powered by our diffusion-based model mvnt-m4)

- Image to Dance: upload any character image and bring them to life dancing. Powered by Tripo AI's v3 model.

- Screen Recording: export your dance as video. Dance along to it, or use it as motion reference for tools like Kling AI or Seedance.

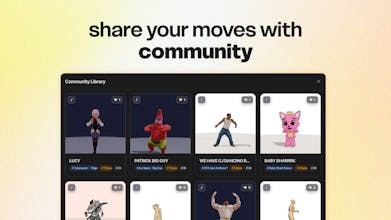

- Community Gallery: the most fun part. See what other creators are generating in real time.

We've long been a research-focused team and are just getting started with product. We are still working on better dance quality, faster generation, MP4 downloads, and finger & facial motion.

Come join our playground, and thanks for being with us from day one!

Discord: https://discord.gg/hpWFbjb6

LinkedIn: https://www.linkedin.com/in/jooooooon-jung/

@joooooooonjung How intuitive is the interface for non-dancers? Can users customize styles? Like hip-hop, ballet, contemporary, or is it fully automatic?

mvntSTUDIO

@kimberly_ross Since dance is itself an intuitive language, we prioritized an easy generation UX above all. We settled on 'music-to-dance' as the most intuitive approach, because music is the fundamental layer that dance usually sits on top of, and there are certainly music-dance pairs that match people's expectations.

There are different generative approaches we can take for each dance genre. For example, contemporary and breaking tend to be less reliant on music, while their spatio-temporal nature makes it hard to connect the moves in between. TikTok-style dances turned out to be the most modular and impactful (fun), so they became our initial public release.

We also have a rich dataset (mocap / labels) of hip-hop, ballet, contemporary, and other dance genres, and we are still developing the model design for each genre. On the gen-UX side, we are also considering options like 'text prompt for styling' or 'pose-to-dance'.

mvntSTUDIO

Thanks Shreya! Our tech stack is quite complex, so I'd separate it into the model side (3D motion generation) and the product side (3D web app). Which part are you more interested in?