Launching today

CodeScene: CodeHealth MCP Server

Keep AI-generated code healthy and maintainable

95 followers

CodeHealth MCP Server ensures agents and AI coding assistants write maintainable, production-ready code without introducing technical debt. Using deterministic CodeHealth feedback, it guides agents to spot risks, improve unhealthy code, and refactor toward clear quality targets. Run it locally and keep full control of your workflow while making legacy systems more AI-ready. The result is more reliable AI-generated code, safer refactoring, and greater trust in real engineering workflows.

CodeScene: CodeHealth MCP Server

Hey Product Hunt 👋

I’m Adam Tornhill, a software developer for over 30 years.

I’ve spent the past decades watching teams plan to fix technical debt... and then not do it.

Now we’ve added AI to the mix, which is fantastic at writing code fast. Unfortunately, it’s just as good at scaling your technical debt if you let it.

This is where it gets interesting: AI agents depend on code health even more than we do.

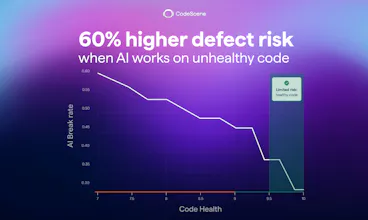

Sceptical? Here's what the research shows:

AI increases defect risk by more than 60% when working in unhealthy code

At low code health, AI wastes 35–50% more tokens

Most codebases aren’t even close to AI-ready

AI is an accelerator. It amplifies both good and bad in your codebase. So AI doesn’t make technical debt less important. It makes it critical.

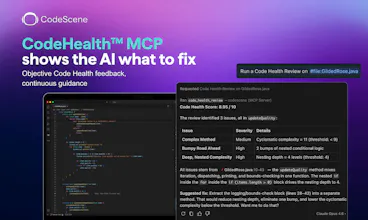

That’s why we built the CodeHealth MCP. It plugs code health directly into your workflow so your AI can:

Auto-review AI-generated code before it becomes a problem

Safeguard code health so it stays maintainable

Help uplift unhealthy code to make it AI-ready

Generating code fast is easy.

Healthy systems at AI speed are the real challenge.

👉 Try it for free. Your code will notice: https://codescene.com/product/code-health-mcp

CodeScene: CodeHealth MCP Server

@adam_tornhill_cs Really resonates. MCP flips this from insight → action.

Instead of just knowing where technical debt is, teams can now operationalize it in real-time workflows, prioritizing hotspots, guiding AI agents, and preventing bad code from scaling.

AI doesn’t just need code. It needs context. That’s where MCP becomes a force multiplier.

CodeScene: CodeHealth MCP Server

@matti_hanell Yes, I think that's the key: Code Health provides objective signals about maintainability and risk. The MCP exposes those signals as actionable tools, turning abstract engineering principles into executable guidance that agents can follow consistently.

@adam_tornhill_cs Instructing an agent is hard enough; trying to do it in a messy codebase is impossible. CodeHealth MCP feels like 'cleaning up the room' before you ask a guest to come over. Makes the agent way more effective. Congrats on the ship!

This hits a nerve. When I was CTO scaling an engineering team from 15 to 120 people, code review was already our biggest bottleneck - senior engineers spending 30-40% of their time reviewing junior code. Now multiply that by AI-generated PRs that look clean on the surface but silently introduce coupling and complexity. The fact that CodeHealth MCP runs deterministic checks locally is the right call - you need something that catches structural issues before they compound, not after three sprints of building on top of them. Curious how the feedback loop works in practice: when an agent gets a CodeHealth warning, does it typically self-correct in one pass or does it tend to need multiple iterations to converge on healthy code?

CodeScene: CodeHealth MCP Server

@avrisimon You can instruct the AI to self-correct by adding instructions to your generic `AGENTS.md` or `CLAUDE.md` file (depending on the agent), which the agent reads as a sort of global context. We have an example `AGENTS.md` file in our repository here if you want to take a look: https://github.com/codescene-oss/codescene-mcp-server/blob/main/AGENTS.md.

The number of iterations needed to reach healthy code depends on a few factors, so it's hard to give a concrete number. How bad is the code? The worse the code is, the harder it will be for AI to one-shot the solution. How good is the AI model you use? The better the model, the better it can understand the instructions given by the CodeHealth MCP. In general though, with the latest Opus models from Claude and with code health even as low as 2 out of 10, I've personally seen it get to 10 out of 10 in just 2 iterations.
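
To make the loop concrete, here is a minimal sketch of the "score, improve, re-check" cycle described above. It is not the CodeHealth MCP API: `refactor_until_healthy`, `toy_score`, and `toy_improve` are hypothetical names, and the toy scoring rule is an arbitrary stand-in for a deterministic health check and an agent's refactoring step.

```python
from typing import Callable, Tuple


def refactor_until_healthy(
    code: str,
    score: Callable[[str], float],
    improve: Callable[[str], str],
    target: float = 10.0,
    max_iterations: int = 5,
) -> Tuple[str, float, int]:
    """Request improvements until the score hits the target or the budget runs out."""
    current_score = score(code)
    iterations = 0
    while current_score < target and iterations < max_iterations:
        candidate = improve(code)
        candidate_score = score(candidate)
        iterations += 1
        if candidate_score <= current_score:
            break  # no progress: keep the last good version instead of looping
        code, current_score = candidate, candidate_score
    return code, current_score, iterations


# Hypothetical stand-ins (assumptions, not real tools):
def toy_score(code: str) -> float:
    """Deterministic toy metric: each 'SMELL' marker costs 4 points."""
    return max(1.0, 10.0 - 4.0 * code.count("SMELL"))


def toy_improve(code: str) -> str:
    """Toy 'agent' that removes one smell per pass."""
    return code.replace("SMELL", "", 1)


final_code, final_score, passes = refactor_until_healthy(
    "SMELL SMELL body", toy_score, toy_improve
)
```

The design point is that the score comes from a deterministic function, so the stopping condition does not depend on how the prompt is phrased; a change that fails to raise the score is rejected rather than accepted.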

The MCP is also great at safeguarding already healthy code so that AI can't start introducing subtle defects or code smells into your code. This is important because healthy code requires far fewer tokens to understand, and you need to spend no tokens at all on refactoring, saving you money.

Does that answer your question?

The speed of generating code with Claude Code or Cursor is incredible but the "did I just create six months of tech debt in 20 minutes" anxiety is real. Having an opinionated quality gate that doesn't change its mind based on how you phrase the prompt is exactly what you need when the code itself is generated by a probabilistic system. Does it catch structural issues too, like functions that are doing too many things or classes that have grown beyond a reasonable scope? Those are the kinds of problems that AI agents love to create - technically correct code that's architecturally messy.

CodeScene: CodeHealth MCP Server

@ben_gend Yes, those are first class citizens in the Code Health score. Functions doing too many things are caught as Brain Methods, a dedicated metric for complex functions that centralize too much behavior. Classes that have grown beyond reasonable scope show up as Brain Classes (large modules with too many responsibilities) or Low Cohesion, which specifically measures whether a class has multiple unrelated responsibilities breaking the Single Responsibility Principle.

There's also Bumpy Road, which catches functions with multiple dispersed chunks of logic that should have been extracted into their own functions.
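
As an illustration of the Bumpy Road idea, here is a small hand-written sketch: one function with several separate chunks of nested conditional logic, followed by the same behavior with each chunk extracted. All function names and the order data shape are made up for the example, and this is not how CodeScene detects the smell.

```python
def summarize_orders_bumpy(orders):
    """One function with three separate 'bumps' of nested conditional logic."""
    total = 0.0
    for order in orders:  # bump 1: total of paid orders
        if order["status"] == "paid":
            if order["amount"] > 0:
                total += order["amount"]
    flagged = []
    for order in orders:  # bump 2: unusually large paid orders
        if order["status"] == "paid":
            if order["amount"] > 1000:
                flagged.append(order["id"])
    refunds = 0
    for order in orders:  # bump 3: refund count
        if order["status"] == "refunded":
            if order["amount"] > 0:
                refunds += 1
    return {"total": total, "flagged": flagged, "refunds": refunds}


# The same behavior, with each bump extracted into its own named function.
def paid_total(orders):
    return sum(
        (o["amount"] for o in orders if o["status"] == "paid" and o["amount"] > 0),
        0.0,
    )


def large_paid_order_ids(orders, threshold=1000):
    return [o["id"] for o in orders if o["status"] == "paid" and o["amount"] > threshold]


def refund_count(orders):
    return sum(1 for o in orders if o["status"] == "refunded" and o["amount"] > 0)


def summarize_orders(orders):
    return {
        "total": paid_total(orders),
        "flagged": large_paid_order_ids(orders),
        "refunds": refund_count(orders),
    }
```

Each extracted function can be read and tested on its own, which is the kind of structural change this smell points toward.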

You can read more about our Code Health metric here: https://codescene.io/docs/guides/technical/code-health.html#code-health-identifies-factors-known-to-impact-maintenance-costs-and-delivery-risks

Deterministic is doing a lot of work here and in the best way possible. In a world of AI-generated everything, having a non-LLM signal for code quality feels underrated. What does the scoring model actually look at — cyclomatic complexity, coupling, something proprietary?

CodeScene: CodeHealth MCP Server

@tadej_kosovel Deterministic is the only way in the world of non-deterministic AI, I think.

The scoring model looks at many things: module smells, function smells, and implementation smells. Cyclomatic complexity and coupling are indeed part of it, but there's a whole lot more going on, and we keep improving the metric as we go along. You can read more specific info on the CodeHealth metric here: https://codescene.io/docs/guides/technical/code-health.html#code-health-identifies-factors-known-to-impact-maintenance-costs-and-delivery-risks.

Does that help answer your question?

I use AI assisted code a lot now. Actually AI writes most of my code now. One thing has become very clear: AI is great at producing a lot of code. But it amplifies the code quality of what is already in the code base. Bad code gets worse. Good code can stay good, but it is very much the responsibility of the developer to keep it good.

The combination of the CodeScene extension (free) and the CodeScene MCP makes this so much easier. The extension will surface potential problems instantly and show you code smells you probably want to address. The CodeScene MCP allows the coding agent to be aware of problems and get more details and context on how to fix them.

I love the fact that the agent can end each session by asking the CodeScene MCP for a code review to see where it didn't really clear the bar, and automatically correct itself.

I also use the MCP server to ask about code that I think might be too complex, or where I sense something is wrong but can't really put it into words. The MCP is so good at evaluating code quality and giving suggestions for improvements.

The more you work with AI assisted coding, the more important this product becomes. I highly recommend it and it is always the first thing that goes into custom instructions for the AI when I start working on a project.

CodeScene: CodeHealth MCP Server

@johan_nordberg Thanks a lot for your feedback!

I like that. It's a really important aspect of going agentic. Our research finds that AI requires even better code quality than humans, not less. The CodeHealth MCP allows us to pull that risk forward, and strategically refactor code to make it AI-ready.

Hi PH! I'm Adna, Developer Advocate at CodeScene.

I tested Claude, Copilot, and Cursor on the same legacy file and ended up with the same result: all three passed tests and all three made the code worse - and it happened silently, with no signal telling them they had.

The problem isn't the model. It's that agents have no idea which parts of a codebase are already load-bearing and fragile. They write confidently into broken areas because nothing stops them.

With the MCP Server in the loop: same file, same task, 4.82 → 9.1. Iteratively. The agent verified the delta after each step before moving on. That behavioral shift, knowing where not to be reckless, is what actually changed. Server runs locally, is model-agnostic, and finally, no code leaves your machine.

Happy to answer anything - especially if you've hit this problem yourself: how are you currently catching structural degradation in agent-assisted workflows?

DiffSense

Cool! Is it like SonarQube but as an MCP?

CodeScene: CodeHealth MCP Server

@conduit_design It's CodeScene's analysis tools as an MCP, which works in the same space as SonarQube. It can help you do code health reviews, uplift unhealthy code, and safeguard AI-generated code. Is there a specific use case you're interested in?

CodeScene: CodeHealth MCP Server

@conduit_design Thanks a lot, André!

CodeScene's MCP is based on the Code Health metric. It's the only validated code-level metric with a proven impact in terms of faster (shorter lead times) and better (fewer defects) delivery.

Compared to linting aggregators like SonarQube, Code Health works at a higher level. Think of linters as line-by-line commenting, whereas Code Health checks the design and structure of the code to guide agents.

Does that help explain the difference?

DiffSense

@adam_tornhill_cs SonarQube is not a linter. It's a static code analysis platform that scans source code across 35+ languages to detect bugs, vulnerabilities, code smells, duplication, coverage gaps, and technical debt. My question is: how is the Code Health metric different? I'm very into this right now, so I'm genuinely interested in finding out. Thanks.

CodeScene: CodeHealth MCP Server

@adam_tornhill_cs @conduit_design We have a very in-depth explanation of our CodeHealth metric available here: https://codescene.io/docs/guides/technical/code-health.html#code-health-identifies-factors-known-to-impact-maintenance-costs-and-delivery-risks. There's a lot of overlap between what CodeScene does and what SonarQube does, but our analysis is validated by academic research, viewable here: https://codescene.com/hubfs/web_docs/Business-impact-of-code-quality.pdf. We've also written more about how we fare against SonarQube here: https://codescene.com/blog/6x-improvement-over-sonarqube.

Does this clear up the similarities and differences between the two?

DiffSense

@adam_tornhill_cs @askonmm That article doesn't read well. It bashes SonarQube, the industry standard, without proof. It does not go into detail on how CodeScene is better. What particular thing makes it better? Show benchmarks. Show examples. For instance, do a case study on a popular code repo and a head-to-head comparison with SonarQube. I'm all for trying something better than SonarQube, but prove it. Don't just say it, you know what I mean? The proof is in the pudding, as they say. Also, some more detail on how CodeScene does things would help. Is it all AI? Or are there heuristics, or some exotic engines that run this? If it's AI, then it's only as good as the guardrails it uses. Some insight into these things would be great, bring a lot of credibility, and lower friction to adoption. Full disclosure: I run SonarQube on local runners with lots of customizations added on top, and it's fantastic. I'm also a big fan of Codebeat.io, but they kind of dropped off a while ago. Anyway, great space! This is the new battlefield. When AI writes all our code, the output is only as good as whatever keeps it in line. # my 2 cents