Launched this week

AgentReady

Cut your AI token costs by 40-60% with one API call

127 followers

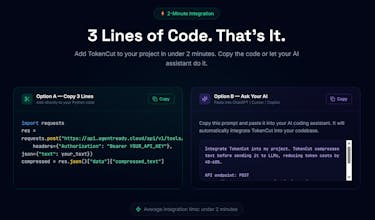

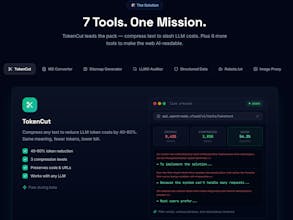

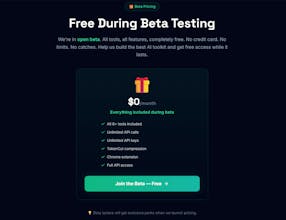

AgentReady is an API toolkit that makes the web readable for AI agents. Our flagship tool TokenCut compresses text before it hits GPT-4, Claude, or any LLM — same meaning, fewer tokens, lower bill. Plus 6 more tools: MD Converter, Sitemap Generator, LLMO Auditor, Structured Data, Robots.txt Analyzer, and Image Proxy. Free during beta. 3 lines of code to integrate.

Congrats on the launch

The idea of helping teams deploy AI agents faster is super relevant right now, especially with how many companies are experimenting but struggling with real implementation.

One quick conversion thought: a short visual demo showing an agent being created + deployed in real time could really help visitors grasp the speed and workflow immediately. That kind of clarity usually boosts trial signups a lot.

Excited to see how this evolves. Great timing for this product.

AgentReady

@growthfilmstudio Thank you so much for your feedback!

I will follow your suggestion and create a demo ASAP. 😄

AgentReady

@growthfilmstudio Following your comment, we created a docs page to help you integrate the tools into your SaaS. You can find it here: https://agentready.cloud/docs

We will create a video demo ASAP 😄

Thank you again!

@christian_b_1 Nice, that’ll definitely help clarify the product for new visitors.

If you want, I can also share a few ideas on what usually works best in SaaS demos so it converts well, not just explains the product.

AgentReady

@growthfilmstudio sure, can’t wait to hear your ideas :)

Thank you

@christian_b_1 Awesome

I checked your product and I already have a few ideas on how the value and workflow could be presented more clearly to new users so they understand it faster.

I’ll share a quick outline with you shortly.

AgentReady

Interesting product. So it basically acts as a layer between the tool and the LLM?

AgentReady

@douwe_tjerkstra Yes exactly :)

Your user writes text → you compress it via agentready.cloud → you send the compressed text to your LLM.

And in this simple way you save up to 90% of the API costs. :)
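For anyone who wants to picture the flow above in code, here is a minimal sketch. It assumes nothing about the real AgentReady API: `naive_compress` is a hypothetical stand-in that only collapses whitespace, and `echo_llm` is a fake model call, so you would swap both for your actual compression request and LLM client.

```python
from typing import Callable


def naive_compress(text: str) -> str:
    """Hypothetical stand-in compressor: collapses runs of whitespace.

    This is NOT AgentReady's algorithm, only a placeholder for the
    compression step so the pipeline can run end to end.
    """
    return " ".join(text.split())


def echo_llm(prompt: str) -> str:
    """Fake LLM call (assumption): reports the size of the prompt it got."""
    return f"received {len(prompt)} characters"


def ask_llm_with_compression(
    user_text: str,
    compress: Callable[[str], str],
    call_llm: Callable[[str], str],
) -> str:
    """User text -> compression layer -> LLM, as described in the reply above."""
    compressed = compress(user_text)
    return call_llm(compressed)


if __name__ == "__main__":
    print(ask_llm_with_compression("hello    world\n\n", naive_compress, echo_llm))
```

Passing the compressor and the model call in as functions keeps the layer easy to replace or switch off without touching the rest of the app.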

Very cool! Can you see what was trimmed from the prompt, for visibility?

AgentReady

@daniele_packard Sure!

You can sign up for free and paste your text into the TokenCut page to see what was trimmed, along with the cost and the exact number of tokens you saved 😄

AgentReady

@daniele_packard Here you can see a screenshot from the web app, where you can test the compression level.

(If you want, you can sign up and use it for free to try it with your own text 😄)

Example result with the Italian homepage of Wikipedia:

Hi @christian_b_1, congratulations on the launch! It cuts the load of input tokens. Are you thinking of somehow compressing the output tokens as well in the near future?

AgentReady

@linapisom Yes, I'm thinking about that too, and about how to implement it well. Thank you for the suggestion! I will let you know when this feature is introduced 😄

@christian_b_1 Nice to hear that, Christian. All the best.

AgentReady

@linapisom You too, and thank you for your feedback! 😄

Token costs sneak up on you fast when you're running multi-step AI workflows. How does the compression actually work - is it semantic chunking, or something closer to selective context pruning? I've been trying to trim context windows manually in a few apps and it's messy to maintain.

AgentReady

@mykola_kondratiuk It's an algorithm that cuts all the unnecessary stuff from the prompt. On the homepage you can see what it cuts, and in the TokenCut dashboard you can try it and see directly what it's cutting in the Diff tab :)

Let me know if you want to learn more about it. I'm here to help 😄
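If you would like to run a similar "what got cut" check locally on any original/compressed pair, a rough sketch with Python's standard `difflib` could look like this. The function name `removed_words` is made up for illustration, and this is only a word-level comparison, not how the Diff tab itself is implemented.

```python
import difflib
from typing import List


def removed_words(original: str, compressed: str) -> List[str]:
    """Return the words present in `original` that did not survive in `compressed`.

    Illustrative helper (assumption): compares whitespace-separated words
    with difflib.SequenceMatcher and collects deleted or replaced spans.
    """
    orig_words = original.split()
    comp_words = compressed.split()
    matcher = difflib.SequenceMatcher(a=orig_words, b=comp_words, autojunk=False)

    removed: List[str] = []
    for tag, i1, i2, _j1, _j2 in matcher.get_opcodes():
        # "delete" and "replace" both mean original words were dropped.
        if tag in ("delete", "replace"):
            removed.extend(orig_words[i1:i2])
    return removed


if __name__ == "__main__":
    before = "Please could you kindly summarize this article"
    after = "summarize this article"
    print(removed_words(before, after))
```

A word-level diff like this is handy for spot-checking that nothing important, such as a number or a negation, was dropped before the prompt goes to the model.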

AgentReady

If you need help or want to connect, you can also find me on X here:

https://x.com/christalingx