Launched this week

OpenHuman

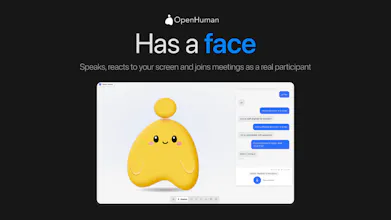

An open source AI harness built with the human in mind

1.4K followers


90% of people who try AI agents give up. Three reasons: memory that resets every session, your data sitting in someone else's cloud, and needing a terminal just to get started. Real blockers. OpenHuman fixes all of it. Local-first, privacy-first. It remembers everything about you and actually gets smarter the more you use it. Every feature lives in one simple interface. Fully open source. One-click setup. P.S. The product is in beta, so expect bugs, but we're building and shipping fast.

OpenHuman

Heya! I'm Steven, founder of TinyHumans.

A few months ago I tried to set up an open-source AI agent for my dad. Three hours later, after wrestling with API keys, YAML and a terminal he had never opened in his life, we both gave up.

That's when I realised that every powerful AI agent today is built for the 0.01% who can spin up their own runtime. The other 99.99% are watching the agent revolution from the sidelines.

So we built OpenHuman.

OpenHuman is a super-intelligent AI agent that anyone can use. Two-minute setup. No config files. A simple GUI you'd hand to your parents and they'd actually figure out. Connect Gmail, Slack, Telegram, Notion, and GitHub in one click and it just works.

A few things I'm proud of:

* It runs locally. Encrypted vault. We never sell your data.

* It never forgets. Real memory across sessions, not session-only.

* It's open source under a GNU license.

* It's free to start: no engineer, no GPU, no $6k setup bill.

Early signal has been wild: 8000+ GitHub stars, 5000+ users in the first 7 days, and 150% week-over-week growth.

Today, we're opening it up to all of you. Note that it is still in beta and you're super early, so you may find bugs. Feel free to report them to me over on the Discord.

I'll be in the comments all day. You can break it, roast it, tell me what's missing, ask anything. We ship fixes live.

Would love your feedback!

@enamakel @kunal_karani This seems like an interesting product. Looking forward to experimenting with it in my daily life!

OpenHuman

@kunal_karani @dipakgr Yeah, go for it. It should be super simple. Let me know what you think of it!

@enamakel Hi Steven, awesome product and congrats on the launch. Too lazy to check the GH repo right now, but which local SLM do you use to orchestrate and memorize before invoking LLMs?

OpenHuman

@zolani_matebese Hey Zolani, great question. It's a 1B Gemma 3 model from Google, which runs on most laptops. You can choose the local model you'd like to use on the settings page.

You can also select local LLMs for all the inference you want to do. It's possible to run OpenHuman completely local.

@enamakel Congrats Steven! This looks really good. I just wanted to know how you're handling long-term memory locally: vector DB, structured knowledge graph, or some hybrid approach? And do users have control over what gets stored/forgotten?

OpenHuman

@ferdi_sigona Hey Ferdi, thanks for the great question.

Short version: chunks carry temporal metadata plus a source weight, and at retrieval time the agent reasons over both rather than relying purely on similarity.

Recency is one signal but not the only one. So a confirmation from your finance team about a contract amount outweighs a casual Slack message from three weeks ago, even if the Slack message is more recent.

Source authority is computed per connector.
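A minimal Python sketch of how recency and per-connector source authority might be blended into one retrieval score. The connector names, the weights in `SOURCE_WEIGHT`, the 30-day half-life and the 50/50 blend are all assumptions for illustration; the reply says the agent *reasons* over both signals, so a fixed formula like this is only a stand-in for that reasoning.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical per-connector authority weights (not OpenHuman's real values).
SOURCE_WEIGHT = {"finance_email": 1.0, "notion": 0.7, "slack": 0.3}
DEFAULT_WEIGHT = 0.5


@dataclass
class Chunk:
    text: str
    source: str
    created_at: datetime


def score(chunk: Chunk, now: datetime, similarity: float, half_life_days: float = 30.0) -> float:
    """Blend semantic similarity with recency decay and source authority."""
    age_days = (now - chunk.created_at).total_seconds() / 86400
    recency = 0.5 ** (age_days / half_life_days)  # halves every half_life_days
    authority = SOURCE_WEIGHT.get(chunk.source, DEFAULT_WEIGHT)
    return similarity * (0.5 * recency + 0.5 * authority)


now = datetime(2025, 6, 1)
finance = Chunk("Contract amount confirmed at $50,000", "finance_email", now - timedelta(days=28))
slack = Chunk("pretty sure the contract was $40k?", "slack", now - timedelta(days=21))
```

With equal similarity, the older finance confirmation still outscores the more recent Slack message, which is the behavior described in the reply.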

We also track explicit revisions, where a later document or message overrides an earlier claim, so the canonical state is the latest non-contradicted assertion rather than just the latest chunk.
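One way to read "latest non-contradicted assertion" is sketched below in Python. The `Assertion` record, its `overrides` field and the convention that `value=None` marks a pure retraction are hypothetical; the point is that the newest chunk (here a retraction) is not automatically the canonical answer.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Assertion:
    id: str
    key: str                # what the claim is about, e.g. "acme.contract_amount"
    value: Optional[str]    # None marks a pure retraction ("ignore that figure")
    timestamp: int
    overrides: set[str] = field(default_factory=set)  # ids of earlier claims this one revises


def canonical_value(assertions: list[Assertion], key: str) -> Optional[str]:
    """Return the value of the latest assertion for `key` that nothing overrides."""
    relevant = [a for a in assertions if a.key == key]
    overridden = {target for a in relevant for target in a.overrides}
    candidates = [a for a in relevant if a.id not in overridden and a.value is not None]
    if not candidates:
        return None
    return max(candidates, key=lambda a: a.timestamp).value


history = [
    Assertion("a1", "acme.contract_amount", "$50,000", timestamp=1),
    Assertion("a2", "acme.contract_amount", "$55,000", timestamp=2),
    # A later message retracts a2 without stating a new amount.
    Assertion("a3", "acme.contract_amount", None, timestamp=3, overrides={"a2"}),
]
```

Here the latest chunk is the retraction `a3`, yet the canonical amount falls back to `$50,000`, because `a2` has been contradicted.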

Recall stays fast because we keep a rolling summary tree per entity that gets updated incrementally rather than recomputed. Long-horizon contradiction handling is one of the things we're actively improving.
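The incremental rolling summary can be sketched as a tree that, whenever a level fills up, folds that level into one summary node on the level above. Adding a chunk then triggers only a few `summarize` calls instead of a full recompute. `RollingSummaryTree`, the fan-out and the toy `summarize` callback are assumptions; in practice the callback would presumably be a (likely local) model call.

```python
from typing import Callable


class RollingSummaryTree:
    """Per-entity summary tree that is updated incrementally, never rebuilt.

    Level 0 holds raw chunks; whenever a level reaches `fanout` nodes,
    those nodes are folded into one summary node on the level above.
    """

    def __init__(self, summarize: Callable[[list[str]], str], fanout: int = 4) -> None:
        self.summarize = summarize
        self.fanout = fanout
        self.levels: list[list[str]] = [[]]

    def add(self, chunk: str) -> None:
        self.levels[0].append(chunk)
        level = 0
        # Amortized O(1) summarize calls per added chunk (for fanout >= 2).
        while len(self.levels[level]) == self.fanout:
            folded = self.summarize(self.levels[level])
            self.levels[level] = []
            if level + 1 == len(self.levels):
                self.levels.append([])
            self.levels[level + 1].append(folded)
            level += 1

    def view(self) -> list[str]:
        """Coarsest summaries first, then finer ones, then the newest raw chunks."""
        return [node for level in reversed(self.levels) for node in level]
```

For example, with `fanout=2` and a summarizer that just wraps its inputs in parentheses, adding `a`, `b`, `c` yields the view `["(a+b)", "c"]`: old material is summarized and recent material stays raw.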

OpenHuman

@ferdi_sigona So yes, chunks are stored by time and scored by interactions. But the interesting thing about memory is that it's a plug-and-play module, so you can literally choose any other memory system you want.
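Plug-and-play memory usually means the agent talks to an interface rather than to a concrete store. Below is a minimal Python sketch using `typing.Protocol`; the `MemoryBackend` methods, the toy `KeywordMemory` backend and the `Agent` class are hypothetical and are not OpenHuman's actual plugin API.

```python
from typing import Protocol


class MemoryBackend(Protocol):
    """Anything with these two methods can serve as the agent's memory."""

    def store(self, text: str) -> None: ...

    def recall(self, query: str, limit: int = 3) -> list[str]: ...


class KeywordMemory:
    """Toy backend: recalls stored notes that share a word with the query."""

    def __init__(self) -> None:
        self._notes: list[str] = []

    def store(self, text: str) -> None:
        self._notes.append(text)

    def recall(self, query: str, limit: int = 3) -> list[str]:
        words = set(query.lower().split())
        hits = [n for n in self._notes if words & set(n.lower().split())]
        return hits[-limit:]  # the most recent matches


class Agent:
    def __init__(self, memory: MemoryBackend) -> None:
        self.memory = memory  # swap in any other backend without touching the agent

    def remember(self, text: str) -> None:
        self.memory.store(text)

    def context_for(self, query: str) -> list[str]:
        return self.memory.recall(query)
```

Swapping memory systems then means passing a different object to `Agent(...)`; the agent code itself does not change.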

The default memory itself is pretty good, though.

The privacy angle is a big reason to go local-first, but the persistent memory is what actually makes it usable day to day. I am tired of re-explaining my tech stack and project goals every single time I open a new session.

Since you mentioned that it is in beta and remembers everything, I want to know how you handle context window limits or database bloat over time. Does it start getting sluggish once it knows too much about my work history, or is there some kind of automated cleanup?

OpenHuman

@ritikgupta_01 Great question! That re-explaining loop is exactly why we built the Memory Tree the way we did. We don't dump your entire work history into the prompt. The Memory Tree canonicalizes everything into chunks (3k tokens max), scores them by relevance, and folds them into summary trees: per-source, per-topic, and per-day. When the agent needs context, it retrieves the most relevant chunks and summaries, not a raw dump. TokenJuice compacts verbose tool output before it ever hits the model, so even sweeping months of email stays cheap.

As for sluggishness: the retrieval layer is local SQLite with indexed chunks, so the agent isn't scanning a massive log every time. It pulls what matters for the task at hand. So yes, it remembers everything, but it doesn't recall everything at once in the expensive way. It remembers like a human does: details for what matters, summaries for the rest.
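A rough Python sketch of the storage side described above: split text into chunks capped at 3k tokens, keep them in a local SQLite table, and index it so that "top chunks for this source" reads only the best rows instead of scanning a log. Counting tokens as whitespace-separated words, the table layout and the precomputed `score` column are assumptions, not the Memory Tree's real schema.

```python
import sqlite3

MAX_CHUNK_TOKENS = 3000  # the "3k tokens max" cap from the reply


def chunk_text(text: str, max_tokens: int = MAX_CHUNK_TOKENS) -> list[str]:
    """Split text into chunks of at most max_tokens whitespace-separated words."""
    words = text.split()
    return [" ".join(words[i:i + max_tokens]) for i in range(0, len(words), max_tokens)]


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    db = sqlite3.connect(path)
    db.execute(
        """CREATE TABLE IF NOT EXISTS chunks (
               id     INTEGER PRIMARY KEY,
               source TEXT NOT NULL,
               day    TEXT NOT NULL,
               score  REAL NOT NULL,
               body   TEXT NOT NULL)"""
    )
    # Lets per-source, best-first queries use the index instead of a full table scan.
    db.execute("CREATE INDEX IF NOT EXISTS idx_chunks_source_score ON chunks (source, score DESC)")
    return db


def ingest(db: sqlite3.Connection, source: str, day: str, text: str, score: float) -> None:
    rows = [(source, day, score, chunk) for chunk in chunk_text(text)]
    db.executemany("INSERT INTO chunks (source, day, score, body) VALUES (?, ?, ?, ?)", rows)
    db.commit()


def retrieve(db: sqlite3.Connection, source: str, limit: int = 5) -> list[str]:
    rows = db.execute(
        "SELECT body FROM chunks WHERE source = ? ORDER BY score DESC LIMIT ?", (source, limit)
    )
    return [body for (body,) in rows]
```

A real tokenizer would give different chunk boundaries than word counting, but the shape stays the same: bounded chunks in, a small ranked slice out.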

OpenHuman

@ritikgupta_01 Context window limits are handled like in any agent harness: it compresses the context when it hits 90% of the window. But it also does a ton of token compression and cost cutting so that everything fits smartly in the window.
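A minimal Python sketch of threshold-triggered compaction: once the history reaches 90% of the context window, older messages are replaced by a single summary and the most recent turns are kept verbatim. The whitespace token counter, `keep_recent` and the `summarize` callback are assumptions; the token-compression docs linked in the thread describe the real behavior.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Message:
    role: str
    text: str


def count_tokens(text: str) -> int:
    # Assumption: whitespace word count as a stand-in for a real tokenizer.
    return len(text.split())


def maybe_compact(
    history: list[Message],
    window: int,
    summarize: Callable[[list[Message]], str],
    threshold: float = 0.9,
    keep_recent: int = 2,
) -> list[Message]:
    """Below the threshold, return history unchanged; otherwise fold old turns into a summary."""
    total = sum(count_tokens(m.text) for m in history)
    if total < threshold * window or len(history) <= keep_recent:
        return history
    old, recent = history[:-keep_recent], history[-keep_recent:]
    summary = Message("system", "Summary of earlier conversation: " + summarize(old))
    return [summary] + recent
```

A single pass can still leave the history above the threshold if the kept turns are themselves huge; a fuller version would loop or also compress those turns.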

See this for more info: https://tinyhumans.gitbook.io/openhuman/features/token-compression

It also uses lower-end models for a lot of the simpler, lower-stakes work, such as summarization and cleanup. See this for more info: https://tinyhumans.gitbook.io/openhuman/features/model-routing In many cases you can run it completely locally using a local model.
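Model routing can be as simple as a lookup from task type to model tier. In this Python sketch the task names and the model identifiers `local-small` and `cloud-large` are placeholders, and the `local_only` flag stands in for the fully local setup mentioned above; the model-routing docs linked above describe the actual rules.

```python
from enum import Enum


class Task(Enum):
    SUMMARIZE = "summarize"
    CLEANUP = "cleanup"
    PLAN = "plan"
    CODE = "code"


# Hypothetical set of low-stakes tasks that a small model can handle.
LIGHT_TASKS = {Task.SUMMARIZE, Task.CLEANUP}


def pick_model(
    task: Task,
    local_only: bool = False,
    local_model: str = "local-small",   # placeholder name
    cloud_model: str = "cloud-large",   # placeholder name
) -> str:
    """Send light work (or everything, in local-only mode) to the small local model."""
    if local_only or task in LIGHT_TASKS:
        return local_model
    return cloud_model
```

Keeping the routing table explicit makes the cost trade-off easy to audit and to override per user.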

How did this not exist before?! Love the idea, want to give it a try even though I don't necessarily need an agent in my life.

Something I've seen that feels related: the concept of moving our personal data from the custody of vendors (social, doctors, marketers, employers etc.) back to us, the owners. E.g., a graph of all your data with granular sharing and privacy policies that you directly control. There's an obvious privacy aspect to this, but it's also natural to imagine how agents can thrive with (controlled) access to it, as an extension of the permanent memory you've built.

OpenHuman

@aleksandr_rakitin Right? That's the reaction we keep getting. And honestly, the "I don't necessarily need an agent" thing is fair. Most people don't need another chatbot. But here's the shift: once an agent actually remembers your world (your preferences, your projects, your people), you stop thinking of it as a tool you use. It becomes context you live inside. You realize how much mental overhead you were carrying just to keep all your apps and threads straight. That's why we built the Memory Tree into a local Obsidian vault with plain .md files. The graph of your data shouldn't live in Notion's cloud, or Google's servers, or Anthropic's memory. It should live on your machine, in formats you can read, edit, export, or delete. Granular control by default.
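Keeping memory as plain Markdown in a vault can be as simple as writing one `.md` file per topic. This Python sketch assumes a `memory/` folder, a title-based filename and a "Related" section of Obsidian-style `[[wikilinks]]`; the real vault layout OpenHuman uses may differ.

```python
import re
from pathlib import Path


def write_memory_note(vault: Path, title: str, body: str, links: list[str]) -> Path:
    """Write one human-readable Markdown note into the vault and return its path."""
    # Replace characters that are unsafe in filenames on common filesystems.
    safe_name = re.sub(r'[\\/:*?"<>|]', "-", title).strip()
    path = vault / "memory" / f"{safe_name}.md"
    path.parent.mkdir(parents=True, exist_ok=True)
    related = "\n".join(f"- [[{link}]]" for link in links)
    path.write_text(f"# {title}\n\n{body}\n\n## Related\n{related}\n", encoding="utf-8")
    return path
```

Because the note is ordinary Markdown, it can be read, edited, exported or deleted with any editor, not only through the agent.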

@heyitapoojai I'm sure I'll discover use cases as I go.

And, beyond the immediate needs of agents, can I have my medical records in my OWN space? So that instead of me begging my dentist to send me my X-rays, they land in my vault right away, and then I decide who to share them with and how.

OpenHuman

@aleksandr_rakitin Exactly. The use cases show up once the memory layer is actually yours. Medical records aren't automatic yet, though. Most dentists lock data in their EHR. You can request exports (DICOM, PDFs) and drop them in your vault, but no push-to-you standard exists today (surely someday). FHIR support in Apple Health is spotty, too. But yes, you can import records manually; once they're in your space, you control sharing, and your agent can actually read your history.

OpenHuman

@aleksandr_rakitin F***k yeah... I kid you not, in the next 24 hours a new release is coming out where you can use your own API keys and have full control over your data and costs.

This way you don't need to use our cloud if you're tech-savvy enough. Privacy is basically yours. We keep the cloud (if chosen) completely stateless.

OpenHuman

@hazal362526 Everything is open source so you can have a look at the source code.

You can optionally run everything locally, which means nothing ever even hits the cloud. You can find the documentation at https://tinyhumans.gitbook.io/openhuman and the source code at https://github.com/tinyhumansai/openhuman

Slazzer

What does OpenHuman feel like as a desktop app, specifically? Does it feel polished and intentional, like a native tool you’d keep open all day, or more like a very capable web app wearing a desktop costume and hoping nobody asks too many questions?

OpenHuman

@mahin_makkhy It's like having a Macintosh.

OpenClaw and other agent harnesses are super complex to use and work with.

This is supposed to be more of an easy, simple experience to get started with.

Can it use my skills and maybe commands? Tool calls? Is it good for coding?

OpenHuman

@robert_douglass Yep. 118+ integrations (Gmail, Slack, Notion, etc.), full tool calling with chaining plus retries, and a built-in code sandbox for writing, running, and debugging. It actually does stuff, not just chats about it. :)
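"Tool calling with chaining plus retries" can be sketched as feeding each tool's output into the next while retrying failed calls with exponential backoff. This Python sketch is illustrative only: `call_with_retries`, `run_chain`, the attempt count and the backoff schedule are assumptions, not OpenHuman's tool runner.

```python
import time
from typing import Any, Callable, Optional, Sequence

Tool = Callable[[Any], Any]


def call_with_retries(tool: Tool, arg: Any, attempts: int = 3, base_delay: float = 0.5) -> Any:
    """Call tool(arg), retrying with exponential backoff if it raises."""
    last_error: Optional[Exception] = None
    for attempt in range(attempts):
        try:
            return tool(arg)
        except Exception as exc:  # a real harness would retry only transient errors
            last_error = exc
            if attempt + 1 < attempts:
                time.sleep(base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ...
    raise RuntimeError(f"tool failed after {attempts} attempts") from last_error


def run_chain(tools: Sequence[Tool], initial: Any, base_delay: float = 0.5) -> Any:
    """Run tools in order, passing each tool's output to the next one."""
    value = initial
    for tool in tools:
        value = call_with_retries(tool, value, base_delay=base_delay)
    return value
```

Retrying per step rather than rerunning the whole chain means one flaky API call does not repeat work that already succeeded.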

OpenHuman

@robert_douglass Yes, tool calls, yes. It's not good for coding just YET. It'll get pretty good soon, as it's evolving and people are contributing.

That's the beauty of open source.