Launching today

Montage

The runtime framework for agentic user interfaces!

89 followers

The runtime framework for agentic user interfaces!

89 followers

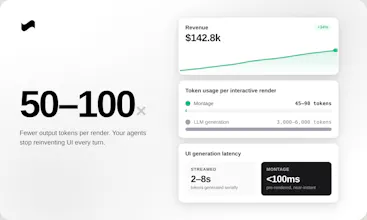

AI agents render UI slowly, expensively, and inconsistently, and a huge chunk of inference bills gets eaten by UI generation. Montage fixes that. Your agent emits a tiny intent schema; we compile it server-side into production components. 10x faster hydration, 50–100x fewer output tokens, model-agnostic, framework-agnostic, and themed to your brand. Don't let your agents reinvent UI every turn: ship them on Montage!

Asa.team

The token waste on UI generation is something I only started noticing once I was running agents at scale. You don't feel it in a single conversation, but when you're running 10+ parallel sessions, the inference bill tells a different story. The intent schema approach makes a lot of sense. What does the fallback look like when the schema doesn't match a component you have? Does the agent degrade gracefully, or does it just error?

@ng_junsheng Great question!

Short answer: it's not really possible. Once you wire your agent into Montage, it only ever picks from the 200+ components in our atlas. The intent schema is constrained to what's actually available, so the agent can't request something that doesn't exist.

That said, we still defend against edge cases:

1. Component-level fallback. Deprecated or missing components fall back to the closest semantic match: e.g., an unknown pricing-table renders as a data-table with its props mapped over.

2. Prop-level coercion. Malformed or partial props are filled with LLM-safe defaults. The render still ships, and you get telemetry so you can fix it later.

3. Last-resort text rendering. If nothing matches at all, or the service is somehow down, we fall back to clean text/markdown so the user still gets something coherent. Never a broken UI or a raw error.

Every fallback emits telemetry to our team, so we see the failure modes before you do and patch them upstream!

Philosophy: the agent should never be the thing that breaks the experience.
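To make the three tiers concrete, here is a minimal Python sketch of such a fallback chain. It is illustrative only and is not Montage's actual implementation: the ATLAS contents, the SEMANTIC_FALLBACKS alias map (a static stand-in for real semantic matching), the fallback_text field, and the emit telemetry callback are all hypothetical. Tier 3 is modeled only for the "nothing matches" case, not for the service being down.

```python
import copy
from dataclasses import dataclass
from typing import Any, Callable, Optional

# Hypothetical component atlas: component name -> props with safe default values.
ATLAS: dict[str, dict[str, Any]] = {
    "data-table": {"columns": [], "rows": []},
    "chart": {"kind": "bar", "series": []},
}

# Hypothetical "closest semantic match" table, simplified to a static alias map.
SEMANTIC_FALLBACKS: dict[str, str] = {"pricing-table": "data-table"}

# Hypothetical telemetry hook: (event name, details) -> None.
Telemetry = Callable[[str, dict[str, Any]], None]


@dataclass
class Render:
    component: Optional[str]  # None means the text fallback was used
    props: dict[str, Any]
    text: Optional[str] = None


def render_intent(intent: dict[str, Any], emit: Telemetry) -> Render:
    name = intent.get("component")
    raw_props = intent.get("props")
    if not isinstance(raw_props, dict):
        raw_props = {}

    # 1. Component-level fallback: swap an unknown name for a known neighbour.
    if name not in ATLAS:
        substitute = SEMANTIC_FALLBACKS.get(name)
        if substitute is None:
            # 3. Last-resort text rendering: nothing matched at all.
            emit("text_fallback", {"requested": name})
            return Render(None, {}, text=str(intent.get("fallback_text", "")))
        emit("component_fallback", {"requested": name, "used": substitute})
        name = substitute

    # 2. Prop-level coercion: keep props whose type matches the default;
    #    replace missing or mistyped ones with a fresh copy of the safe default.
    props: dict[str, Any] = {}
    coerced: list[str] = []
    for key, default in ATLAS[name].items():
        value = raw_props.get(key)
        if isinstance(value, type(default)):
            props[key] = value
        else:
            props[key] = copy.deepcopy(default)
            coerced.append(key)
    if coerced:
        emit("prop_coercion", {"component": name, "coerced": coerced})
    return Render(name, props)
```

For example, an intent for an unknown "pricing-table" with only rows supplied renders as a "data-table" with an empty default for columns, and emits two telemetry events (component_fallback, then prop_coercion). Every tier still returns something renderable, which is the point of the "never break the experience" philosophy.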

Sounds like you've felt this pain firsthand. We'd love to compare notes and learn more about your use case: aarav@usemontage.ai.

Hey Product Hunt 👋

@aboss123 and I have been heads-down on Montage for the past several months — it's the runtime framework for agentic UI!

Why we built it:

After talking to dozens of founders building customer-facing agents, the same pattern kept showing up.

• Their agents rendered UI slowly, expensively, and inconsistently — and a huge chunk of their inference bill was getting eaten by UI generation.

• Every chart, table, kanban, or form regenerated from scratch, token-by-token, every single turn.

• And the UI still looked broken to customers half of the time.

Montage fixes this end-to-end. Instead of generating UI token-by-token, your agent emits a tiny intent schema and we compile it server-side into production HTML/CSS/JS. Components are pre-rendered, themed to your brand, accessible, and responsive.
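As a rough illustration of the "intent in, components out" idea, here is a minimal Python sketch. It is not Montage's API: the THEME tokens, the data_table template, the TEMPLATES registry, and the intent_schema/compile_intent functions are all hypothetical. It shows two ideas from this thread: the agent is handed a JSON Schema whose component field is an enum of the available templates (so it can only request what exists), and the server turns the small JSON intent into themed, escaped HTML.

```python
import html
import json
from typing import Any, Callable

Theme = dict[str, str]
Template = Callable[[dict[str, Any], Theme], str]

# Hypothetical brand theme tokens.
THEME: Theme = {"accent": "#4f46e5", "font": "Inter, sans-serif"}


def data_table(props: dict[str, Any], theme: Theme) -> str:
    """Pre-built template: the agent supplies only columns and rows."""
    head = "".join(f"<th>{html.escape(str(c))}</th>" for c in props["columns"])
    body = "".join(
        "<tr>" + "".join(f"<td>{html.escape(str(v))}</td>" for v in row) + "</tr>"
        for row in props["rows"]
    )
    style = f"font-family:{theme['font']};border-top:2px solid {theme['accent']}"
    return (f'<table style="{style}"><thead><tr>{head}</tr></thead>'
            f"<tbody>{body}</tbody></table>")


# Hypothetical component registry: the only names an agent may request.
TEMPLATES: dict[str, Template] = {"data-table": data_table}


def intent_schema() -> dict[str, Any]:
    """JSON Schema given to the model so it can only pick registered components."""
    return {
        "type": "object",
        "properties": {
            "component": {"enum": sorted(TEMPLATES)},
            "props": {"type": "object"},
        },
        "required": ["component", "props"],
    }


def compile_intent(raw: str, theme: Theme = THEME) -> str:
    """Server-side step: parse the agent's small JSON intent into themed HTML."""
    intent = json.loads(raw)
    template = TEMPLATES.get(intent.get("component"))
    if template is None:
        raise ValueError(f"unknown component: {intent.get('component')!r}")
    return template(intent["props"], theme)
```

Usage: the model's entire output is a short string such as '{"component": "data-table", "props": {"columns": ["Plan", "Price"], "rows": [["Pro", "$20"]]}}', and compile_intent produces the full table markup on the server. The markup, styling, and escaping never pass through the model's output tokens, which is where the savings described above come from.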

What's in v1:

• 10x faster hydration vs streamed generation

• 50-100x fewer output tokens per render

• Model-agnostic — Claude, GPT, Gemini, Llama, custom fine-tunes

• Framework-agnostic — React, Vue, Svelte, vanilla, mobile

• Full theming so it looks like your product, not ours

• One-line install

Get started in minutes:

1. Create an API key at usemontage.ai/get-started

2. Read the docs at usemontage.ai/docs/overview (or hand the docs URL to your coding agent and let it set up Montage for you)

We're starting with agent-building companies because that's where the pain is sharpest, but the thesis is bigger: every AI output that reaches a human is a rendering problem. Montage is the layer underneath all of it!

If you're building customer-facing agents, we'd love your feedback, questions, and insights!

Email us at founders@usemontage.ai today and we'll offer extended hands-on integration support for your first agent setup!

You don't even mention that front-end work is a weak spot for AI to begin with, so you're really addressing three problems: doing front-end work with AI, making it faster, and making it cheaper. Kudos.

@robert_douglass Thanks! You nailed it — these are exactly the three problems we set out to solve:

1. Quality -> Pre-rendered components will always beat what an LLM streams from scratch — they're production-grade, designed for accessibility, and continuously refined.

2. Speed -> Hydrates 10x faster than streamed UI. Skeleton loads instantly, data fills in.

3. Cost -> Your model doesn't have to rebuild the UI from scratch every turn — that's where the 50–100x output token savings come from.

Most agent experiences today still feel like talking to a chatbot — flat, text-heavy, not really interactive. Closing that gap is the whole point of Montage!

Montage

Hey Product Hunt!

I am so excited for our launch today. We would love to hear your thoughts on Montage, what you would like to see, and whether it's the right fit for your company. For now, we are letting everyone use it for free while we learn your needs, but we will eventually move to a paid model for our services.

If you're a bit more curious about our story, you can read our article here, too: https://dev.to/aboss123/your-ai-agent-shouldnt-write-html-it-should-call-a-ui-runtime-3f96

It also explains a bit more, with examples of what Montage is capable of!