Launching today

HasData

Web scraping service for AI agents

355 followers

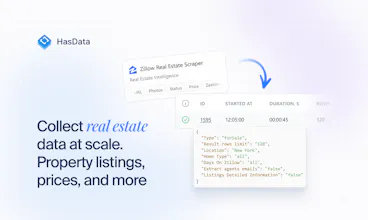

HasData is the managed web scraping service for data pipelines and AI agents. Send any URL, get clean JSON or Markdown back in one API call. We handle proxies, browser rendering, retries, and anti-bot. 50+ ready scrapers cover Google Search, Maps, News, Zillow, Indeed, and major e-commerce. AI extraction handles any other URL from a plain-text prompt. Use it from Claude, ChatGPT, or your own AI agent via MCP. CLI for everything else.

HasData

Hey Product Hunt 👋

Sergey here, co-founder at HasData.

HasData is the managed web data service for AI agents and pipelines. We handle the messy infrastructure like proxies and anti-bot. We also maintain dozens of ready APIs for Google, Maps, Zillow and e-commerce.

And one thing that sets us apart from every other API in this space: we only bill on success. Failed requests cost nothing. You pay for data that actually arrives, not for retries on a broken proxy.

Today we are launching our AI Agent, an MCP server, a CLI and Agent Skills for Claude Code and OpenClaw. You can now connect HasData straight to your AI stack.

The catalog is pretty powerful if you know exactly what you need. But it gets frustrating if you do not. Picking the right tool, learning the parameters and parsing the output takes time away from actual building.

The new AI Agent fixes that. Describe what you need. The Agent picks the matching API, runs the job, and returns a dataset. Enrich any row from the same chat with contacts, firmographics, or whatever's missing.

The MCP server, CLI, and Agent Skills give the same flow to anyone working from Claude, ChatGPT, the terminal, or Claude Code.

Two things worth knowing:

We're giving 10,000 free credits during launch week.

If you're a HasData user already, everything works on your existing account, catalog, and workspace. No separate plan, no migration.

It's live at app.hasdata.com/chat. I'm here all day, so is the team. Drop a comment, break it, tell us what's missing.

@ermakovich_sergey congrats on the launch! This is what the new agentic AI world needs!

HasData

@islam_midov Thanks Islam! Agents need clean data more than anything else right now, glad it resonates.

Product Hunt

HasData

Two different problems, two answers.

For the 40+ pre-built APIs (Amazon, Google, Booking, Zillow, etc.) we don't use AI extraction at all. They're maintained scrapers with fixed schemas. Synthetic tests run daily comparing live responses against the expected ones, so when a site changes its layout we see it the same day and fix it before customers notice. Schema stays stable for you across releases. This is where most production AI features actually run, since high-value targets are concentrated on a small set of sites.
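To make the daily synthetic test idea concrete, here is a minimal sketch of a schema drift check. It is not HasData's test suite: the `EXPECTED_SCHEMA` fields and the `schema_drift` helper are hypothetical names chosen for illustration.

```python
from typing import Any

# Hypothetical expected schema for one scraper: field name -> Python type.
EXPECTED_SCHEMA: dict[str, type] = {"title": str, "price": float, "url": str}


def schema_drift(response: dict[str, Any], expected: dict[str, type]) -> list[str]:
    """Return a list of problems; an empty list means the response matches."""
    problems: list[str] = []
    # Every expected field must be present with the expected type.
    for field, expected_type in expected.items():
        if field not in response:
            problems.append(f"missing field: {field}")
        elif not isinstance(response[field], expected_type):
            problems.append(
                f"wrong type for {field}: {type(response[field]).__name__}"
            )
    # Fields that are not in the schema are reported as well.
    for field in response:
        if field not in expected:
            problems.append(f"unexpected field: {field}")
    return problems


if __name__ == "__main__":
    live = {"title": "Acme Widget", "price": "19.99"}  # made-up live response
    for problem in schema_drift(live, EXPECTED_SCHEMA):
        print(problem)
```

A non-empty result on a previously passing target is the kind of signal that would flag a layout change the same day.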

For genuinely long-tail URLs via the Web Scraping API, you pass an extraction schema and we run AI extraction against it. The schema constrains what comes back, so out-of-schema fields don't leak in. For ambiguous pages the AI Agent can cross-check against a secondary source.

Hallucinations are still a risk on sparse pages. The schema constraint and the cross-check handle most of it; for high-stakes use cases I'd still recommend wrapping with your own validation.
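For the "wrap with your own validation" advice, one possible client-side check is to flag extracted values that do not appear verbatim in the page text. This is a rough sketch under that assumption only; `unsupported_fields` is a hypothetical helper, and values the model legitimately normalizes (dates, currencies) would need looser matching.

```python
def unsupported_fields(record: dict[str, str], page_text: str) -> list[str]:
    """Return names of fields whose value is empty or not found in the page text."""
    # Collapse whitespace and lowercase so layout differences do not matter.
    haystack = " ".join(page_text.split()).lower()
    flagged: list[str] = []
    for name, value in record.items():
        needle = " ".join(str(value).split()).lower()
        if not needle or needle not in haystack:
            flagged.append(name)
    return flagged


if __name__ == "__main__":
    page = "Acme Widget\n  Price: $19.99"
    extracted = {"name": "Acme Widget", "price": "$19.99", "sku": "AW-100"}
    print(unsupported_fields(extracted, page))
```

Fields that come back flagged can be dropped, re-requested, or sent to manual review, depending on how high the stakes are.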

The success-only billing is the headline for me, it's one of those things that sounds obvious in hindsight but almost nobody does it. Scraping infrastructure is inherently flaky, so charging for failures has always felt like passing your infrastructure debt onto the customer.

Quick question though: how do you define "success" when the request technically completes but returns stale or incomplete data? That edge case is where I'd expect the billing model to get complicated.

Also curious whether the MCP server handles rate limiting and retry logic transparently, or does the developer still have to think about that?

Rooting for this one — clean problem, clean solution.

HasData

@saumya_jainn we run synthetic tests daily across all our APIs to catch regressions. They compare real responses against the expected ones, so if something breaks, our team is on it. If we don't catch it, we refund the credits for those failed requests.

The MCP server has built-in rate limiting and retry. The developer (or the LLM) just makes a request and gets back JSON.
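For readers wondering what built-in retry generally looks like, here is a generic sketch of retry with exponential backoff. It is not the MCP server's actual implementation; `with_retry` and its parameters are hypothetical.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retry(
    call: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run call(), retrying on any exception with exponentially growing delays."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return call()
        except Exception:
            # Out of attempts: surface the last error to the caller.
            if attempt == attempts - 1:
                raise
            # Wait base_delay, then 2x, 4x, ... before the next try.
            sleep(base_delay * 2 ** attempt)
    raise AssertionError("unreachable")
```

When the server does this internally, the caller only ever sees the final result or the final error.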

HasData

@saumya_jainn Success for us is a 200 from the target after our anti-bot layer clears. Blocks, captchas, timeouts are not billable. The stale/incomplete case you raised is harder - if the upstream returned a degraded but technically-200 page, we currently bill, since from our side the request succeeded. Content-level validation is on the roadmap as an opt-in layer.
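The billing rule described above can be written down as a small predicate. This is only a sketch of the stated rule, not HasData's billing code; the `Outcome` fields are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Outcome:
    status_code: Optional[int]  # None models a timeout (no response at all)
    blocked: bool = False       # anti-bot block detected
    captcha: bool = False       # captcha page served instead of content


def is_billable(outcome: Outcome) -> bool:
    """Billable only when the target returned 200 and nothing was blocked."""
    return (
        outcome.status_code == 200
        and not outcome.blocked
        and not outcome.captcha
    )
```

Note that a degraded but technically-200 page still passes this predicate, which matches the current behavior described in the reply.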

Overall dope features, but I have one question as well. When extracting webpage data with Firecrawl, I've seen that the extracted text contains all the advertisement links too. This sort of corrupts the data, which then needs cleaning before it's fed to an LLM. So does HasData remove those links in some way?

HasData

@abhishekr_ai we have a flag for that. Default behavior keeps the page as-is, but you can toggle it to strip ads and nav clutter before the response goes back. Some users actually want the ad data, so we left it configurable rather than forcing one mode.

Congrats on launch, guys! The scraper catalog covers the obvious targets, but what happens when someone needs a niche source not in the library? Does the agent fall back to AI extraction on raw html, or wait for the team to build a dedicated scraper?

HasData

@julia_peremitina good catch, we've been iterating on this workflow a lot lately. Basically, we set up our Agent so that it has to pick one of the existing sources first, for better search quality. If there's no relevant source, it can always decide to use basic search and general web scraping tools to build its reply. It works well for now, and we'll be adding more sources and improving our web scraping capabilities in the future!
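The "catalog first, general scraping as fallback" order can be sketched as a simple routing function. The real Agent decides from a natural-language request; matching on the URL's domain, the `CATALOG` entries, and the tool names below are all assumptions made for illustration.

```python
from urllib.parse import urlparse

# Hypothetical mapping from site domain to a dedicated scraper name.
CATALOG = {
    "zillow.com": "zillow",
    "indeed.com": "indeed",
}

FALLBACK_TOOL = "generic_web_scraping"  # hypothetical name for the general tool


def pick_tool(url: str) -> str:
    """Prefer a dedicated scraper for known domains, else use the fallback."""
    host = (urlparse(url).hostname or "").lower()
    for domain, scraper in CATALOG.items():
        # Match the domain itself or any of its subdomains.
        if host == domain or host.endswith("." + domain):
            return scraper
    return FALLBACK_TOOL
```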

HasData

@julia_peremitina Thank you for the question! For niche sources you do not need a dedicated scraper at all. Our Web Scraping API has a special parameter where you just describe the fields you want (name, type, optional description) and our LLM returns clean JSON. No CSS selectors, no waiting on us.

And extraction happens on our side, so your agent gets structured JSON instead of raw HTML. Your LLM tokens stay low.
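As an illustration of describing fields by name, type, and optional description, here is a sketch that builds such a field list as JSON. The `Field` class, the key names, and the type strings are assumptions; the actual parameter name and format are defined in the HasData docs.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class Field:
    name: str
    type: str                          # e.g. "string" or "number" (assumed values)
    description: Optional[str] = None  # optional hint for the extractor


def build_field_spec(fields: list[Field]) -> str:
    """Serialize field descriptions to JSON, omitting unset descriptions."""
    spec = [
        {key: value for key, value in asdict(field).items() if value is not None}
        for field in fields
    ]
    return json.dumps(spec)


if __name__ == "__main__":
    print(build_field_spec([
        Field("title", "string", "Product title"),
        Field("price", "number"),
    ]))
```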

Hey guys, congrats on the launch! I've recently seen Firecrawl doing the same (I guess?) AI scraping agent. What's the difference between them and you, if any?

HasData

@gregory_yashkin Hey man, cheers, appreciate your kind words and support! That matters a lot to us 🫶

Yeah, we do love the guys from Firecrawl, I see them doing some amazing things lately. I believe that healthy competition helps everyone, especially the end users. I could say we have a somewhat different approach to UI/UX, and we also separate no-code and API scrapers for different user segments. Don't know, will let PH users decide today :)

Most scraping services charge you whether the data comes back clean or not, and you end up paying for broken responses. How does the OpenClaw skill integration work in practice, though? Does the agent automatically pick which scraper to use, or do you still have to configure each one manually?

HasData

@tina_chhabra hey, that's right: HasData only charges for successful API calls. You don't pay for failed executions and don't receive broken responses.

Speaking of AI-related features, we built them in such a way that any agent can pick and configure the right scrapers itself. So far it works well, I think :)

HasData

@tina_chhabra Thanks Tina, glad the billing model landed.

As for OpenClaw, the Skill is fully automatic. You describe what you need in plain English ("get me top reviews for this restaurant"), and the Skill picks the right scraper from our catalog, runs it, and returns structured data.