KushoAI transforms your inputs into a comprehensive, ready-to-run test suite. Test both web interfaces and backend APIs in minutes with our AI agents.

This is the 6th launch from KushoAI.

APIEval-20

Launching today

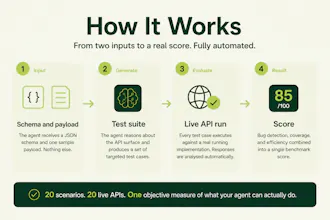

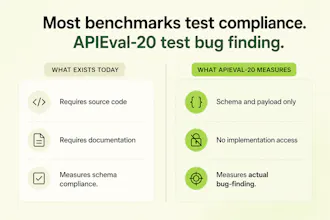

APIEval-20 is a black-box benchmark for API testing agents. Each agent gets only a JSON schema and one sample payload, then generates a test suite. We run those tests against live reference APIs with planted bugs and score bug detection, API coverage, and efficiency. Unlike LLM-as-judge evals, scoring is fully objective: a bug is either caught or it isn’t. Tasks span auth, errors, pagination, schemas, and multi-step flows. Open on Hugging Face.

Free


KushoAI

Do you publish per-bug breakdowns so people can see exactly what types of failures each agent misses?

KushoAI

@karimbenkeroum Karim, yes, that is part of the plan for the leaderboard.

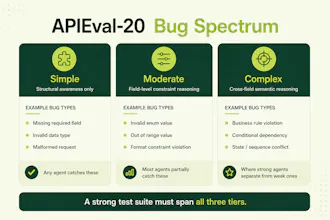

We want the breakdown to go beyond one aggregate score and show which types of failures each agent catches or misses, across auth, schema constraints, pagination, error handling, field relationships, and multi-step flows.

That is where the benchmark becomes more useful, because two agents can have similar overall scores but fail in very different ways.
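To make that point concrete, here is a minimal sketch of a per-class breakdown. The result format (a list of `(bug_class, caught)` pairs), the function names, and the sample data are all hypothetical; they are not APIEval-20's actual output format or real leaderboard results.

```python
from collections import defaultdict

def overall_score(results):
    """Fraction of planted bugs caught, ignoring bug class."""
    return sum(1 for _, caught in results if caught) / len(results)

def breakdown_by_class(results):
    """Catch rate per bug class, as {bug_class: fraction_caught}."""
    totals = defaultdict(lambda: [0, 0])  # bug_class -> [caught, total]
    for bug_class, caught in results:
        totals[bug_class][1] += 1
        if caught:
            totals[bug_class][0] += 1
    return {cls: caught / total for cls, (caught, total) in totals.items()}

# Hypothetical results for two agents (illustrative only, not benchmark data).
agent_a = [("auth", True), ("auth", True), ("pagination", False), ("pagination", False)]
agent_b = [("auth", False), ("auth", True), ("pagination", True), ("pagination", False)]

print(overall_score(agent_a), breakdown_by_class(agent_a))
print(overall_score(agent_b), breakdown_by_class(agent_b))
```

In this made-up data both agents catch half of the bugs overall, but agent A catches every auth bug and no pagination bug, while agent B catches half of each.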

Socrati

Really like the black-box setup. Feels much closer to how teams actually test APIs than benchmarks that assume source code access. Curious how you’re thinking about the planted bugs: do auth, pagination, schema issues, multi-step flows, etc. all count the same, or are you planning to weight them by severity/commonness?

KushoAI

@davidsolsonap David, great question.

For v1, we are keeping the core score simple and objective: did the agent catch the planted bug or not. That makes the benchmark easier to reproduce and harder to game.

But we don’t think all failures are equal in practice. An auth bypass, a broken multi-step flow, and a minor schema edge case should not carry the same business impact.

So the plan for the leaderboard/report is to show both:

An unweighted objective score for comparability

A breakdown by bug class, and potentially severity/commonness as a second lens

I think the breakdown matters as much as the overall score. Two agents can look close on aggregate but be very different in where they fail.
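As a rough illustration of the two lenses, the sketch below computes an unweighted score and a severity-weighted one over the same results. The severity weights, class names, and sample results are assumptions made up for this example; nothing here reflects weights we have actually chosen.

```python
# Hypothetical severity weights per bug class (assumed for illustration).
SEVERITY = {"auth": 3.0, "multi_step": 2.0, "schema": 1.0}

def unweighted_score(results):
    """Every planted bug counts the same: caught / total."""
    return sum(1 for _, caught in results if caught) / len(results)

def weighted_score(results, weights):
    """Each planted bug counts in proportion to its class weight."""
    total = sum(weights[bug_class] for bug_class, _ in results)
    caught = sum(weights[bug_class] for bug_class, ok in results if ok)
    return caught / total

# Illustrative results: the agent misses the auth bug but catches the other two.
results = [("auth", False), ("multi_step", True), ("schema", True)]

print(unweighted_score(results))          # 2 of 3 bugs caught
print(weighted_score(results, SEVERITY))  # 3.0 of 6.0 weight caught
```

With these assumed weights, missing the single auth bug costs a third of the unweighted score but half of the weighted one, which is the kind of gap a second lens would surface.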

KushoAI

@lakshminath_dondeti Lakshminath, I agree. LLM-as-judge is useful when the output needs semantic evaluation, like judging reasoning quality, intent coverage, or whether a generated explanation is useful.

For API testing, we tried to keep the core scoring executable wherever possible. If the generated test catches the planted bug, it scores. If it does not, it does not. That removes a lot of ambiguity.

We don’t have a classifier for choosing eval type yet, but the rough rule we use is:

If the outcome can be executed or verified deterministically, avoid LLM-as-judge.

If the outcome needs human-like interpretation, use LLM-as-judge carefully with rubrics and calibration.