Sup AI

AI ensemble that scored #1 on Humanity's Last Exam

143 followers

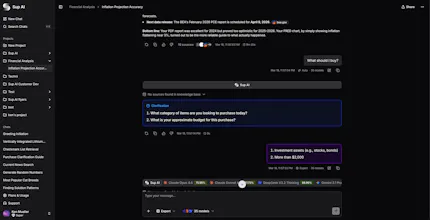

Every LLM hallucinates. They just don't hallucinate the same things. Sup AI runs multiple LLMs (out of 339) in parallel, then synthesizes answers by measuring confidence on every segment. High entropy = likely hallucination, downweighted. Low entropy = likely accurate, amplified. Result: 52.15% on Humanity's Last Exam, 7.41 points ahead of any individual model. $10 starter credit. Card verified. No auto-charge.

Sup AI

Hey Product Hunt. I'm Ken, a 20-year-old Stanford CS student. I built Sup AI.

I started working on this because no single AI model is right all the time, but their errors don’t strongly correlate. In other words, models often make unique mistakes relative to other models. So I run multiple models in parallel and synthesize the outputs by weighting segments based on confidence. Low entropy in the output token probability distributions correlates with accuracy. High entropy is often where hallucinations begin.
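To make the weighting idea concrete, here is a minimal sketch, not Sup's actual implementation. It assumes each model returns a candidate answer for a segment along with per-token entropies, and that a weight of `exp(-mean entropy)` is a reasonable stand-in for confidence. The `Candidate` type, the model labels, and the `confidence_weight` and `pick_segment` helpers are all hypothetical names made up for this illustration.

```python
import math
from dataclasses import dataclass

@dataclass
class Candidate:
    model: str             # hypothetical model label
    text: str              # the model's answer for one segment
    token_entropies: list  # per-token entropy in nats (assumed available)

def confidence_weight(candidate, temperature=1.0):
    """Map mean token entropy to a weight in (0, 1]; lower entropy gives a higher weight."""
    mean_entropy = sum(candidate.token_entropies) / len(candidate.token_entropies)
    return math.exp(-mean_entropy / temperature)

def pick_segment(candidates):
    """Sum the weights of candidates that agree on the text; return the best-supported text."""
    support = {}
    for c in candidates:
        support[c.text] = support.get(c.text, 0.0) + confidence_weight(c)
    return max(support, key=support.get)

candidates = [
    Candidate("model_a", "Paris", [0.02, 0.05]),  # low entropy, confident
    Candidate("model_b", "Lyon", [1.9, 2.3]),     # high entropy, downweighted
    Candidate("model_c", "Paris", [0.4, 0.3]),
]
print(pick_segment(candidates))  # prints "Paris"
```

In this toy case the high-entropy "Lyon" answer contributes a weight of roughly 0.12, while the two "Paris" answers together contribute about 1.67, so the confident, agreeing answer wins.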

My dad Scott (AI Research Scientist at TRI, PhD from UCLA) is my research partner on this. He sends me papers at all hours, we argue about whether they actually apply and what modifications make sense, and then I build and test things. The entropy-weighting approach came out of one of those conversations.

In our eval on Humanity's Last Exam, Sup scored 52.15%. The best individual model in the same evaluation run got 44.74%. The relative gap is statistically significant (p < 0.001).

Methodology, eval code, data, and raw results:

https://sup.ai/research/hle-white-paper-jan-9-2026

https://github.com/supaihq/hle

Limitations:

We evaluated 1,369 of the 2,500 HLE questions (details in the above links)

Not all APIs expose token logprobs; we use several methods to estimate confidence when they don't

We tried offering free access and it got abused so badly it nearly killed us. Right now the sustainable option is a $10 starter credit with card verification (no auto-charge). If you don't want to sign up, drop a prompt in the comments and I'll run it myself and post the result.

Try it at https://sup.ai. My dad (@scottam) is in the thread too. Would love blunt feedback, especially where this really works for you and where it falls short. If Sup ends up being useful, we added a Product Hunt offer that expires in a week: 20% off your first month with code PRODUCTHUNT.

If you're unsure what I meant by entropy and output token probability distributions: whenever an LLM outputs a token, it's choosing that token out of all possible tokens. Every possible output token has a probability assigned by the model. These sum to 1, forming a probability distribution. APIs typically return these probabilities as logprobs (logarithms of the probabilities) because raw probabilities for rare tokens can be so small they underflow to zero in floating point, and because logprobs are the natural output of how models actually compute their distributions. We use these directly to calculate entropy. Entropy is a measure of uncertainty: it quantifies whether a token probability distribution is certain (one token has a 99.9% probability, and the rest share the leftover 0.1%) or uncertain (every token has roughly the same probability, so it's pretty much random which token is selected). Low entropy is the former case, and high entropy is the latter.
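The two cases above can be checked with a few lines of Python. This is a sketch of the standard Shannon entropy formula applied to natural-log probabilities, not Sup's production code. The `entropy_from_logprobs` helper is a hypothetical name, and the renormalization step is an assumption for the situation where an API returns only the top few tokens rather than the full distribution.

```python
import math

def entropy_from_logprobs(logprobs):
    """Shannon entropy (in nats) of a token distribution given as natural-log probabilities."""
    probs = [math.exp(lp) for lp in logprobs]
    total = sum(probs)
    # Assumption: renormalize, since a top-k list of logprobs may not sum to 1.
    probs = [p / total for p in probs]
    return -sum(p * math.log(p) for p in probs if p > 0.0)

# Certain: one token at 99.9%, three others sharing the leftover 0.1%.
confident = [math.log(0.999)] + [math.log(0.001 / 3)] * 3
# Uncertain: four tokens at 25% each.
uncertain = [math.log(0.25)] * 4

print(entropy_from_logprobs(confident))  # close to 0
print(entropy_from_logprobs(uncertain))  # log(4), the maximum for four tokens
```

Renormalizing over a truncated list ignores the probability mass of the tokens that were not returned, so it is only a rough estimate when that missing mass is large.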

There is interesting research on the correlation of entropy with accuracy and hallucinations:

https://www.nature.com/articles/s41586-024-07421-0

https://arxiv.org/abs/2405.19648

https://arxiv.org/abs/2509.04492 (when only a small number of probabilities are available, which is something we frequently deal with)

https://arxiv.org/abs/2603.18940

There's tons more, happy to chat about it if you're interested.

Looks interesting, especially when you're dealing with sensitive docs and information. The pain is real for me.

Quick tech question: do you use deterministic functions, like code execution, calculation, aggregation, SQL, etc, or is the output completely determined by the LLM consensus and confidence score?

I'm asking because for number-sensitive tasks, raw LLM output, even with consensus, usually does not match the reliability of deterministic tools. How do you solve this?

Sup AI

@nowaffl The synthesizer (consensus model) can use code execution or web search to verify the results of each individual model. I agree with you that deterministic tools are better, but if it were easy to check the accuracy of each AI with deterministic tools, we would not have hallucinations anymore! We have found, and research supports this, that the logprob scoring of the models is very highly correlated with hallucinations, and it works astonishingly well to weed them out.

The entropy-based confidence scoring is a very interesting part here, but how does it handle cases where multiple models are confidently wrong in the same direction? If 8 out of 10 models hallucinate the same plausible-sounding fact (low entropy, high consensus), does the system amplify that error rather than catch it?

Sup AI

@sounak_bhattacharya Good question. It is possible that most or all models are confidently wrong in the same way. And this will likely, though not always, mean that Sup will also be confidently wrong. However, this is rare. The reason an ensemble mechanism is so powerful is that mistakes and hallucinations don't correlate well among different models. So, Sup can hallucinate, but it is far less likely than hallucinations from individual models.
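A rough way to see why weakly correlated errors help is a textbook majority-vote calculation. This is an idealized illustration, not a description of how Sup combines answers: it assumes fully independent models, a binary right/wrong outcome, an odd number of models, and the same accuracy for each. Real models are partially correlated, so the actual gain is smaller. The `majority_vote_accuracy` function is a hypothetical helper for this example.

```python
from math import comb

def majority_vote_accuracy(n_models, p_correct):
    """P(majority of n independent models is right), for odd n and binary right/wrong."""
    need = n_models // 2 + 1  # smallest number of correct votes that forms a majority
    return sum(
        comb(n_models, k) * p_correct**k * (1 - p_correct) ** (n_models - k)
        for k in range(need, n_models + 1)
    )

print(round(majority_vote_accuracy(1, 0.6), 5))  # 0.6
print(round(majority_vote_accuracy(5, 0.6), 5))  # 0.68256
```

Under these assumptions, five models that are each right 60% of the time are right about 68% of the time as a majority. If all five always made the same mistakes, the vote would stay at 60%.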

The Token Expenditure might be really high here! How have you optimized Sup AI for that?

Sup AI

@nayan_surya98 Yes! I'm glad you asked. The key is that multiple models that are individually cheaper (and less capable), when run in an ensemble, will outperform a more expensive and more intelligent model running on its own. That, combined with our compaction algorithm, which is highly optimized for prompt caching, results in around a 1.25x increase in cost/speed at the high end. A lot of work has been put into driving that down!