World’s first comprehensive evaluation, observability and optimization platform to help enterprises achieve 99% accuracy in AI applications across software and hardware.

Future AGI Launches

Fix My Agent (FMA): See what breaks your AI agent and fix it automatically

Launched on January 21st, 2026

Simulate by Future AGI: The voice AI auto-testing loop to simulate, evaluate & ship

Launched on August 7th, 2025

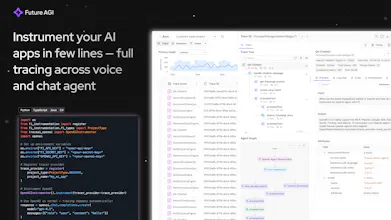

Future AGI: Automate error detection and ensure high accuracy of your AI

Launched on September 20th, 2024