DCompute

Serving GPU Compute, Not Highway Robbery

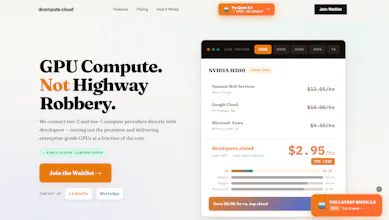

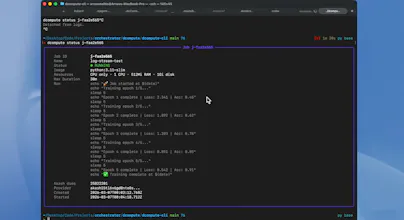

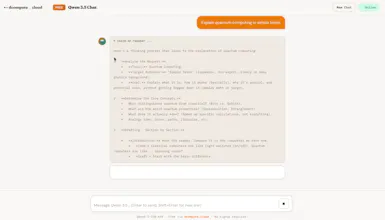

DCompute is a GPU cloud built by connecting tier-2/tier-3 datacenters with 99%+ reliability, eliminating overpricing and idle billing. Run H100s at ~$1.5/hr vs ~$12/hr on AWS, with true per-second pricing. Launch pre-configured GPU VMs, run multi-GPU jobs from the CLI (Docker images supported), and deploy models like Qwen, DeepSeek, and LLaMA across training, experimentation, and inference in one seamless workflow, all while paying only for the seconds of GPU time you use.

GPUs aren’t just expensive; they’re hard to get when you actually need them.

We kept seeing the same thing:

either you overpay on hyperscalers, or you struggle to find available compute.

And even when you get a machine, you’re paying for a lot of idle time.

That’s what we’re building DCompute to fix.

We connect to tier-2/3 datacenters to unlock more GPU supply at lower cost (with 99%+ reliability), so you can actually get access when you need it.

You can:

• launch pre-configured GPU VMs

• run multi-GPU jobs directly from CLI (Docker supported)

• deploy models like Qwen, DeepSeek, and LLaMA

All while paying only for the seconds your GPU actually runs. No hourly billing, no idle charges.
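To make the billing difference concrete, here is a rough sketch of the arithmetic in Python. It uses the approximate H100 rates quoted above (~$1.5/hr and ~$12/hr); the function names, the 100-minute job, and the assumption that an hourly-billed provider rounds usage up to whole hours are illustrative only, not a description of any provider's actual billing rules.

```python
import math

# Approximate H100 rates quoted in this post (USD per GPU-hour).
DCOMPUTE_H100_PER_HOUR = 1.5
AWS_H100_PER_HOUR = 12.0


def per_second_cost(runtime_seconds: float, hourly_rate: float) -> float:
    """Cost when billed only for the seconds the GPU actually runs."""
    return runtime_seconds * hourly_rate / 3600


def hourly_rounded_cost(runtime_seconds: float, hourly_rate: float) -> float:
    """Cost under an ASSUMED scheme that rounds usage up to whole hours."""
    return math.ceil(runtime_seconds / 3600) * hourly_rate


# Hypothetical job: 100 minutes of GPU time.
job_seconds = 100 * 60

print(f"Per-second at ~$1.5/hr: ${per_second_cost(job_seconds, DCOMPUTE_H100_PER_HOUR):.2f}")
print(f"Per-second at ~$12/hr:  ${per_second_cost(job_seconds, AWS_H100_PER_HOUR):.2f}")
print(f"Whole hours at ~$12/hr: ${hourly_rounded_cost(job_seconds, AWS_H100_PER_HOUR):.2f}")
```

Under these assumptions the gap comes from two places: the lower hourly rate, and not paying for the unused remainder of the last hour.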

If you're working on ML workloads, we're happy to help you try it. Just comment or DM.

Curious: what’s been more painful for you: cost, availability, or setup?

How does pricing compare to traditional cloud GPU providers for long-running training jobs? Interesting infrastructure play, congrats on the launch!

@mateuszjacni Pricing is almost 10x lower than the traditional players. Check the website for pricing and contact details.