Launched this week

YourMemory

AI that remembers and forgets like humans.

110 followers

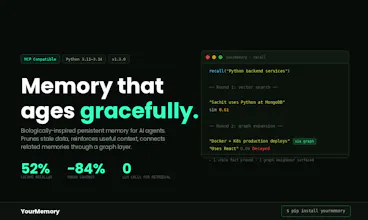

Most agents are either amnesiacs or "hoarders" that choke on stale context and break their own reasoning. YourMemory brings biological logic to the workflow: using the Ebbinghaus forgetting curve, it prunes the junk so only the important stuff sticks.

- 84% less token waste: leaner context, sharper reasoning.
- 52% Recall@5, benchmarked on LoCoMo.
- v1.3.0 Graph Engine: finds what you forgot to ask for.
- 100% local.

YourMemory

Hey everyone, I’m Sachit.

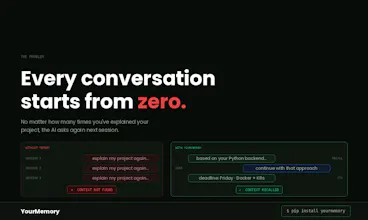

I built YourMemory because I hit a wall with my own coding workflow. My agents were brilliant, but their memory was a mess. They either forgot my architectural 'gotchas' by lunch, or they got so bogged down in stale bug fixes from last week that they started hallucinating.

I realized we don't need a digital filing cabinet for our agents; we need a filter.

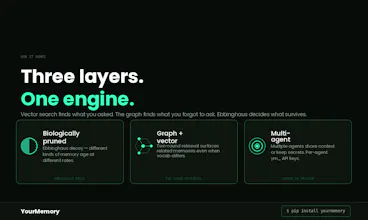

YourMemory treats context as a living thing. It uses 'biological decay' to let transient noise fade away while reinforcing the patterns and facts you actually use.
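As a rough illustration (not YourMemory's actual code), the Ebbinghaus curve is commonly modelled as exponential decay, R(t) = e^(-λt), where t is the time since a memory was last accessed and λ is its decay rate. The sketch below assumes hypothetical names (`Memory`, `retention`, `surviving`), days as the time unit, and an arbitrary prune threshold of 0.1.

```python
import math
from dataclasses import dataclass

# Hypothetical prune threshold, chosen for illustration only.
PRUNE_THRESHOLD = 0.1


@dataclass
class Memory:
    text: str
    decay_rate: float          # lambda, per day (assumed unit)
    days_since_access: float


def retention(m: Memory) -> float:
    """Ebbinghaus-style exponential forgetting curve: R = exp(-lambda * t)."""
    return math.exp(-m.decay_rate * m.days_since_access)


def surviving(memories: list[Memory]) -> list[Memory]:
    """Keep only memories whose retention is still at or above the threshold."""
    return [m for m in memories if retention(m) >= PRUNE_THRESHOLD]


if __name__ == "__main__":
    mems = [
        Memory("use DuckDB for storage", decay_rate=0.01, days_since_access=30),
        Memory("typo fix in README", decay_rate=0.2, days_since_access=30),
    ]
    for m in surviving(mems):
        print(m.text)
```

With the same 30-day gap, the slowly decaying memory keeps most of its strength while the fast-decaying one falls far below the threshold and is dropped.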

For the skeptics:

I’ve provided the full benchmarking scripts and the LoCoMo dataset on GitHub. We’re hitting 52% Recall@5, which nearly doubles the industry average. Why? Because our v1.3.0 Graph Engine doesn't just do keyword matching; it also pulls in related architectural 'neighbors' that standard vector search completely misses.
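A minimal sketch of graph-expanded retrieval, assuming the engine first takes the top-k memories by embedding similarity and then adds their one-hop graph neighbours. The function names, the toy two-dimensional embeddings and the memory IDs are all hypothetical; the real v1.3.0 Graph Engine may rank, weight or traverse differently.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def retrieve(
    query: list[float],
    embeddings: dict[str, list[float]],
    edges: dict[str, set[str]],
    k: int = 5,
) -> list[str]:
    """Vector top-k first, then expand each hit with its one-hop graph neighbours."""
    ranked = sorted(embeddings, key=lambda mid: cosine(query, embeddings[mid]), reverse=True)
    seeds = ranked[:k]
    results = list(seeds)
    for mid in seeds:
        # Sorted for deterministic output; neighbours already present are skipped.
        for nbr in sorted(edges.get(mid, set())):
            if nbr not in results:
                results.append(nbr)
    return results


embeddings = {
    "auth-bug": [1.0, 0.0],
    "token-refresh": [0.9, 0.1],
    "adr-session-design": [0.0, 1.0],
}
edges = {"auth-bug": {"adr-session-design"}, "adr-session-design": {"auth-bug"}}
print(retrieve([1.0, 0.0], embeddings, edges, k=1))
```

Here `adr-session-design` has zero similarity to the query, so plain top-1 vector search would never return it; the edge from `auth-bug` pulls it in.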

It’s 100% local-first (DuckDB) with zero infrastructure, and it’s finally stopped me from repeating myself to my terminal.

I’d love to hear how you’re all handling context amnesia right now. Tell me: what does your agent keep forgetting that drives you the most crazy?

Ebbinghaus decay plus a graph engine is a clever combination, but the edge case I keep running into is old architectural decisions that are still load-bearing. A design choice made six months ago that constrains current code is rarely in active use, so the forgetting curve would drop it, but the graph edges to it are exactly what new code needs. How does the engine decide when decay wins versus when the graph pulls something back into scope?

YourMemory

@myultidev Great observation. Two things protect load-bearing decisions:

First, chain-aware pruning: a memory is deleted only if all of its graph neighbours are also below the prune threshold. If your old architectural decision is linked to anything still active, the whole chain stays alive. A memory is as strong as the strongest node it's connected to.
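The chain-aware rule can be sketched as follows, assuming each memory has a current strength (for example its retention score) and an adjacency map of graph edges. `prune`, the memory IDs and the 0.1 threshold are hypothetical. This version checks direct neighbours only, matching the rule as stated; a transitive "whole chain" check would need a graph traversal instead.

```python
def prune(
    strength: dict[str, float],
    edges: dict[str, set[str]],
    threshold: float,
) -> set[str]:
    """Return IDs to delete: a memory goes only if it AND all its neighbours are below threshold."""
    to_delete = set()
    for mid, s in strength.items():
        neighbours = edges.get(mid, set())
        # Effective strength: the strongest of the node itself and its direct neighbours.
        effective = max([s] + [strength.get(n, 0.0) for n in neighbours])
        if effective < threshold:
            to_delete.add(mid)
    return to_delete


strength = {"adr-2023": 0.05, "api-handler": 0.8, "stale-log": 0.02}
edges = {"adr-2023": {"api-handler"}, "api-handler": {"adr-2023"}}
print(prune(strength, edges, threshold=0.1))
```

The weak `adr-2023` survives because its neighbour `api-handler` is strong, while the isolated `stale-log` is pruned. All decisions are made against the same snapshot of strengths, so the result does not depend on the order in which memories are visited.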

Second, for decisions stored in isolation (no graph edges), the importance score controls the decay rate directly. Higher importance means a slower effective decay rate λ, so the memory survives much longer without being accessed. Setting importance=0.9 on an architectural decision gives it a half-life of months, not weeks.
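To see how importance could turn into a half-life, recall that for R(t) = e^(-λt) the half-life is ln 2 / λ. The mapping from importance to λ below (a linear scale-down of a base rate that gives a one-week half-life at importance 0) is purely an assumption for illustration; YourMemory's actual formula isn't given here, and the names are hypothetical.

```python
import math

# Hypothetical base decay rate: a one-week half-life for a memory with importance 0.
BASE_DECAY_PER_DAY = math.log(2) / 7


def effective_decay(importance: float, base: float = BASE_DECAY_PER_DAY) -> float:
    """Assumed mapping: higher importance scales the decay rate down linearly."""
    if not 0.0 <= importance <= 1.0:
        raise ValueError("importance must be in [0, 1]")
    # Floor the factor so importance=1.0 still decays, just very slowly.
    return base * max(1.0 - importance, 0.01)


def half_life_days(decay: float) -> float:
    """Time for retention exp(-lambda * t) to fall to 0.5: t = ln 2 / lambda."""
    return math.log(2) / decay


for imp in (0.0, 0.5, 0.9):
    print(f"importance={imp}: half-life ~ {half_life_days(effective_decay(imp)):.0f} days")
```

Under these made-up numbers, importance 0.9 stretches a one-week half-life to about ten weeks, which is the "months, not weeks" behaviour described above.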

So the short answer: link your critical decisions to related memories (the graph protects them), and mark them high importance (decay protects them). The two layers work together!

Self-pruning memory is the right instinct: stale context is what makes most "personalized" AI apps lose their edge over time. I ran into this when building DishRoll (https://dishroll.netlify.app/), a weekly AI meal planner. Old preferences (the chicken recipe you loved three months ago that's now boring) kept contaminating suggestions until we added explicit decay and recency weighting. MCP-level memory hygiene is a much cleaner place to solve this than the app layer. What signals do you use to decide what gets pruned vs. kept?

YourMemory

Great insight, @samir_asadov. DishRoll is the perfect example: app-layer "clean-up" is a nightmare to maintain manually. Solving this at the MCP level is exactly why I built this.

To answer your question, we use a formula that balances retrieval frequency with an importance score to set the decay rate. The more a memory is used, the "stickier" it stays.
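One way to combine retrieval frequency and importance into a decay rate is sketched below. The formula (a base rate scaled down by importance and divided by 1 + recall count) and all names are hypothetical stand-ins, not the formula YourMemory uses; the point is only to show reinforcement, where each recall both slows decay and restarts the clock.

```python
import math
from dataclasses import dataclass

BASE_DECAY_PER_DAY = 0.1  # hypothetical base rate (importance 0, never recalled)


@dataclass
class Memory:
    importance: float            # 0..1, set when the memory is stored
    recall_count: int = 0        # how many times retrieval has returned it
    last_access_day: float = 0.0

    def decay_rate(self) -> float:
        """Assumed formula: importance slows decay, and each recall slows it further."""
        return BASE_DECAY_PER_DAY * (1.0 - 0.9 * self.importance) / (1 + self.recall_count)

    def retention(self, today: float) -> float:
        """Exponential forgetting curve measured from the last access."""
        return math.exp(-self.decay_rate() * (today - self.last_access_day))

    def recall(self, today: float) -> None:
        """Reinforce on use: bump the counter and restart the clock."""
        self.recall_count += 1
        self.last_access_day = today


used = Memory(importance=0.5)
ignored = Memory(importance=0.5)
for day in (1, 2, 3):
    used.recall(today=day)

# Day 33: `used` was last touched 30 days ago, `ignored` 33 days ago.
print(round(used.retention(33), 3), round(ignored.retention(33), 3))
```

Both memories start with the same importance, but the one that keeps getting retrieved ends up with a much lower decay rate, which is the "stickier" effect in miniature.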

The real safety net is the Graph Engine. If a specific memory starts to fade but is part of a strong "chain" of other important memories, we don't prune it. It’s about keeping the whole story intact, not just isolated facts.

Hope that answers it!

Cheers for the support.

Simple Utm

Using the Ebbinghaus curve for context decay is a genuinely clever framing. The biggest failure mode I have seen with agent memory is not forgetting too much but forgetting the wrong things. Architectural decisions that were made months ago and rarely referenced can still be load-bearing. Does the graph engine help protect those kinds of low-frequency but high-importance memories from decay?

YourMemory

@najmuzzaman Great question! The short answer is yes: the graph engine protects those critical links. A memory is only as strong as its chain, so if it’s connected to high-signal info that’s above the threshold, it won't get pruned. For those 'lone wolf' memories, we use an importance score to set a custom decay rate. So the high-stakes stuff stays sticky, while the noise fades away.