variA/Bly

Delivering production-grade prompt performance for AI Teams

3 followers

Without the right tooling, there is no way to measure AI drift. variA/Bly helps you evaluate and A/B/n test prompts scientifically, so you catch issues before users complain.

Differentiators:

- 41-dimensional evaluation: quality scored across multiple dimensions.

- Statistical A/B testing: confidence intervals, not gut feeling.

- AI-powered optimization: generates better prompts from data.

- Prompt Registry: version control and deployment.

Other tools wait for user complaints. variA/Bly measures continuously.

Hey Product Hunt!

I'm Amit from variA/Bly.

The problem

Teams shipping AI applications are flying blind. They're iterating on prompts through gut instinct, manual testing, and expensive trial-and-error.

It's hard to know:

Which prompt variant actually performs better (not just "feels" better)?

How to measure quality consistently and scientifically across dimensions such as safety, accuracy, and coherence?

Is that $0.03/call GPT-4-as-Judge evaluation worth it at scale? Just imagine the cost of running it for 100K events.

What to optimize next after you've shipped v1?
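To make the judge-cost question concrete, here is a quick back-of-the-envelope sketch using the $0.03/call figure above. The function name and the `calls_per_event` parameter are illustrative assumptions, not part of any product API.

```python
def judge_cost_usd(events: int, cents_per_call: int = 3, calls_per_event: int = 1) -> float:
    """Estimate LLM-as-Judge spend in dollars.

    Assumes a flat price per judge call (3 cents = $0.03, from the post)
    and a fixed number of judge calls per evaluated event (hypothetical).
    Working in integer cents avoids floating-point rounding surprises.
    """
    total_cents = events * calls_per_event * cents_per_call
    return total_cents / 100


# 100K events, one judge call each
print(judge_cost_usd(100_000))
```

Scoring each event on several dimensions with separate judge calls multiplies that number accordingly, for example `judge_cost_usd(100_000, calls_per_event=5)`.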

The result? Teams leave 20-40% performance on the table. Every. Single. Time.

Why we built Variably

We saw AI engineers spending weeks manually testing prompts, using expensive LLM-as-Judge evaluations ($10K-$30K/month at scale), and still shipping without confidence.

Even after deploying a "good" prompt, there was no systematic way to continuously improve or catch regressions before users did. Using LLM-as-Judge to find the best prompt is like judging a coin flip with another coin flip.

How Variably works

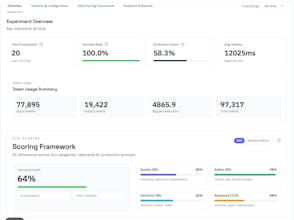

41-Dimensional Evaluation Framework that scores prompts across quality, safety, semantics, and advanced metrics - deterministically and 100x cheaper than LLM-as-Judge.

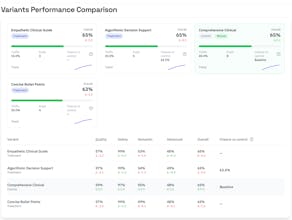

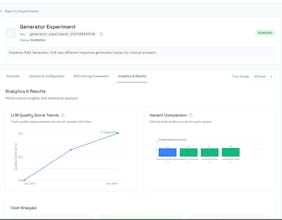

Statistical A/B Testing with Bayesian analysis, confidence intervals, and automatic winner detection (95% confidence, not vibes).
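For readers curious what "95% confidence, not vibes" can look like in practice, here is a minimal sketch of a Bayesian A/B comparison. It is an illustration only, not variA/Bly's actual implementation. It assumes each prompt response is scored as a simple pass/fail, uses a uniform Beta(1, 1) prior, and all function names are hypothetical.

```python
import random
from typing import Optional


def prob_b_beats_a(pass_a: int, total_a: int, pass_b: int, total_b: int,
                   draws: int = 20_000, seed: int = 0) -> float:
    """Monte Carlo estimate of P(pass rate of B > pass rate of A).

    Each variant's pass rate gets a Beta posterior:
    Beta(1 + passes, 1 + failures), i.e. a uniform prior updated with data.
    """
    rng = random.Random(seed)  # seeded so the estimate is reproducible
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(1 + pass_a, 1 + total_a - pass_a)
        rate_b = rng.betavariate(1 + pass_b, 1 + total_b - pass_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


def pick_winner(pass_a: int, total_a: int, pass_b: int, total_b: int,
                threshold: float = 0.95) -> Optional[str]:
    """Return "A" or "B" once one variant clears the threshold, else None."""
    p = prob_b_beats_a(pass_a, total_a, pass_b, total_b)
    if p >= threshold:
        return "B"
    if p <= 1 - threshold:
        return "A"
    return None  # not enough evidence yet, keep collecting data


# Variant A passed 400 of 1000 checks, variant B passed 500 of 1000
print(pick_winner(400, 1000, 500, 1000))
```

Returning `None` when neither variant clears the threshold is the point of the exercise: the test keeps running instead of declaring a winner on a hunch.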

AI-Powered Optimization that automatically generates better prompt variants using Bayesian optimization and genetic algorithms, guided by the 41-Dimensional Evaluation scores.

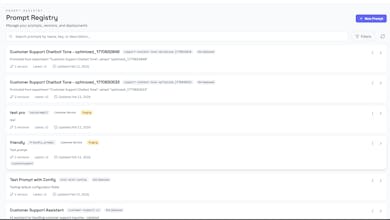

Prompt Registry with version control, environment deployments (dev/staging/prod), and one-click rollbacks.
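To illustrate the idea of versions, per-environment deployments, and rollbacks, here is a tiny in-memory sketch. The `PromptRegistry` class and its methods are hypothetical and written purely for illustration. They are not the product's API.

```python
from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Toy registry: append-only prompt versions plus a deploy history per environment."""
    versions: list = field(default_factory=list)   # prompt texts, version n at index n - 1
    history: dict = field(default_factory=dict)    # env name -> list of deployed version numbers

    def register(self, text: str) -> int:
        """Store a new prompt and return its 1-based version number."""
        self.versions.append(text)
        return len(self.versions)

    def deploy(self, env: str, version: int) -> None:
        """Mark a registered version as active in an environment."""
        if not 1 <= version <= len(self.versions):
            raise ValueError(f"unknown version {version}")
        self.history.setdefault(env, []).append(version)

    def rollback(self, env: str) -> int:
        """Revert an environment to its previously deployed version."""
        deployed = self.history.get(env, [])
        if len(deployed) < 2:
            raise RuntimeError(f"nothing to roll back to in {env!r}")
        deployed.pop()
        return deployed[-1]

    def active(self, env: str) -> str:
        """Return the prompt text currently active in an environment."""
        return self.versions[self.history[env][-1] - 1]


registry = PromptRegistry()
v1 = registry.register("Summarize the ticket in two sentences.")
v2 = registry.register("Summarize the ticket in two sentences. Cite the ticket ID.")
registry.deploy("prod", v1)
registry.deploy("prod", v2)
registry.rollback("prod")  # prod is back on v1
print(registry.active("prod"))
```

Keeping versions append-only means a rollback never loses a prompt. It only moves the environment's pointer back one step.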

Real-time Analytics showing exactly what's working, what's not, and why.

How we're different

Langfuse tells you what your LLM is doing (observability).

Braintrust lets you evaluate quality manually.

variA/Bly tells you how to make it better - and does it automatically.

We're the only platform combining comprehensive evaluation, statistical experimentation, and AI-powered optimization in one tool.

Typical outcomes

25%+ quality improvement through AI-powered optimization.

30-50% cost reduction via efficient evaluation.

Median of 7 days to find a statistically significant winner.

40% faster iteration with automated recommendations.

Launch day offer

Sign up today and get:

- Extended free tier: 500 evaluations/month (normally 50).

- Hands-on onboarding with our team.

- Early access to our upcoming agent evaluation features.

Just mention "Product Hunt" when you sign up or email us at info@variably.tech.

We'd love your feedback, tough questions, and wild use cases. We'll be here all day!

Happy Optimizing!