Loreguard

AI NPC engine that runs on the player's machine, no pay-per-token

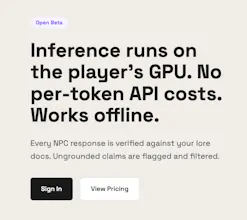

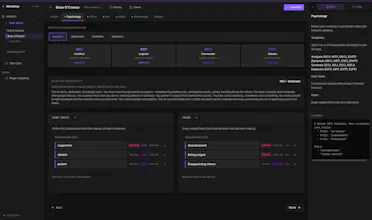

AI NPC engine that runs inference on the player's GPU.

- Every response is verified against your lore docs.
- No per-token APIs.
- Web console to build NPCs: personality, voice, knowledge base, trust gates, goals.
- Trust gates: NPCs reveal secrets based on the player's trust level, so social engineering becomes a game mechanic.
- Runs on small open-source models (Qwen 3.5 9B works well).
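One way to picture a trust gate is a per-player trust score that decides which secrets are even eligible to go into the NPC's prompt. This is a minimal sketch under assumed names (`Secret`, `NPC`, `min_trust`, `adjust_trust`, and a 0 to 100 trust scale are all hypothetical); it is not Loreguard's actual data model.

```python
from dataclasses import dataclass, field


@dataclass
class Secret:
    text: str
    min_trust: int  # hypothetical threshold on an assumed 0..100 trust scale


@dataclass
class NPC:
    name: str
    secrets: list = field(default_factory=list)  # list of Secret
    trust: dict = field(default_factory=dict)    # player_id -> trust level

    def adjust_trust(self, player_id: str, delta: int) -> int:
        """Apply a trust change for one player, clamped to 0..100."""
        level = max(0, min(100, self.trust.get(player_id, 0) + delta))
        self.trust[player_id] = level
        return level

    def unlocked_secrets(self, player_id: str) -> list:
        """Secrets this player's current trust level allows the NPC to talk about."""
        level = self.trust.get(player_id, 0)
        return [s.text for s in self.secrets if level >= s.min_trust]


if __name__ == "__main__":
    sysop = NPC("sysop", [Secret("The BBS runs on port 2323.", 30),
                          Secret("The root password is on a sticky note.", 80)])
    sysop.adjust_trust("player1", 40)
    print(sysop.unlocked_secrets("player1"))  # ['The BBS runs on port 2323.']
```

Filtering secrets before they reach the prompt means the model cannot leak a secret it was never given. How trust is earned (the `delta` passed to `adjust_trust`) is left to the game's own mechanics.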

I started building a game that simulates the internet of the 90s. One of the features I had in mind was that the internet should feel alive, the same way cities in the GTA games do, with NPCs driving cars through the streets, except that my game would be text-only, with no pathfinding or walking around.

Then a thought crossed my mind: what if the NPCs in games, whether allies or enemies, could also be powered by AI?

It wasn't clear to me exactly how I would turn this into a game mechanic, but I had to give it a try; the idea was too juicy not to attempt (and some of you may disagree here).

NPCs need a personality plus knowledge of the world or story they live in. When you roleplay with AI characters online, the boundaries are loose: they can fabricate whatever they want, because you cannot verify whether it is true (and verifying it isn't the point anyway). Games are different: you want NPCs grounded in the lore and staying in character.

I also didn't want players to pay for an additional subscription (to ChatGPT, for example) just to power the NPCs; that doesn't make sense. So the solution was to go local. Once you run LLM inference locally, things start to get hard. Right now, making local LLMs behave on consumer hardware feels like going back to the 80s or 90s, when you had a small memory pool to work with and did bit bashing to make everything fit.

So the personality side started as simply as a markdown prompt with the NPC's personality and some constrained knowledge, and the local side started with open-source models as-is. Of course, the NPCs started to drift away from their knowledge and character, no matter how many times you put "STAY IN CHARACTER" in all caps in the prompt. This wasn't the local model's fault; ChatGPT and the others would eventually fail too. I needed a more reliable way to avoid "hallucination".
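The post doesn't describe how responses are verified against the lore, but the general idea can be illustrated with a deliberately crude heuristic: flag capitalized, name-like words in a reply that never appear anywhere in the NPC's lore docs. Every function name and the example lore below are hypothetical, and this is a stand-in technique, not Loreguard's verifier.

```python
import re

# A token that looks like a proper noun: capital letter followed by letters/digits/_/-
ENTITY = re.compile(r"[A-Z][A-Za-z0-9_-]+")
PUNCT = ",;:.!?\"'()"


def words(text):
    """Split on whitespace and strip surrounding punctuation."""
    return [w.strip(PUNCT) for w in text.split()]


def lore_vocabulary(lore_docs):
    """Every word that appears anywhere in the NPC's lore documents."""
    vocab = set()
    for doc in lore_docs:
        vocab.update(words(doc))
    return vocab


def candidate_entities(response):
    """Capitalized words that are not the first word of a sentence."""
    found = set()
    for sentence in re.split(r"[.!?]\s+", response):
        for w in words(sentence)[1:]:  # skip the sentence-initial word
            if ENTITY.fullmatch(w):
                found.add(w)
    return found


def ungrounded_entities(response, lore_docs):
    """Name-like words the NPC used that never appear in its lore: likely fabrications."""
    return sorted(candidate_entities(response) - lore_vocabulary(lore_docs))


if __name__ == "__main__":
    lore = ["Kestrel runs the Nightowl BBS from a basement in Tacoma."]
    reply = "Sure, Kestrel can get you onto Nightowl. Ask Vortex about it."
    print(ungrounded_entities(reply, lore))  # ['Vortex']
```

This misses invented names at the start of a sentence and false claims that only reuse known names, so at best it is a cheap first filter in front of a stronger check.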

I discovered the hard way that this task is still hard for a small model that can run on a consumer machine, so a pipeline turned out to be useful: it breaks a complex problem that ChatGPT could answer in one shot into smaller problems the local model can focus its attention on. My pipeline needs a very specific input/output format to work properly, so I also learned how to do LoRA training and fine-tune models. I started training open-source models for my own pipeline's input/output format, and then fine-tuning them for a specific game's lore (my hacking simulation game): its characters, the way they speak, and the terms that exist in the game. This improved the results significantly.
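A rough sketch of the "smaller problems with a strict input/output format" idea: each stage asks the model for one narrow task, accepts only a JSON object with the expected keys, and retries otherwise. The stage split (intent, lore lookup, reply), the prompt wording, and the `model` callable are assumptions for illustration, not the author's actual pipeline.

```python
import json
from typing import Callable

# Hypothetical model interface: takes a prompt string, returns the raw generated text.
Model = Callable[[str], str]


def run_stage(model: Model, instruction: str, payload: dict,
              required_keys: set, retries: int = 2) -> dict:
    """Ask the model for one narrow task; accept only a JSON object with the expected keys."""
    prompt = f"{instruction}\nInput JSON: {json.dumps(payload)}\nReply with JSON only."
    for _ in range(retries + 1):
        raw = model(prompt)
        try:
            out = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed output: try again
        if isinstance(out, dict) and required_keys.issubset(out):
            return out
    raise ValueError(f"stage failed after {retries + 1} attempts: {instruction!r}")


def npc_reply(model: Model, player_msg: str, lore: list) -> str:
    # Stage 1: a small, focused classification task
    intent = run_stage(model, "Classify the player's intent.",
                       {"message": player_msg}, {"topic"})
    # Stage 2: select relevant lore lines in plain Python (no model call)
    topic = str(intent["topic"]).lower()
    facts = [line for line in lore if topic in line.lower()]
    # Stage 3: write the in-character reply from the selected facts only
    reply = run_stage(model, "Answer in character using only these facts.",
                      {"message": player_msg, "facts": facts}, {"reply"})
    return reply["reply"]


if __name__ == "__main__":
    def fake_model(prompt: str) -> str:
        # Stand-in for a local LLM that returns canned JSON per stage
        if prompt.startswith("Classify"):
            return '{"topic": "nightowl"}'
        return '{"reply": "Nightowl? Talk to Kestrel."}'

    lore = ["Kestrel runs the Nightowl BBS.", "Vortex trades warez on weekends."]
    print(npc_reply(fake_model, "How do I get on Nightowl?", lore))  # Nightowl? Talk to Kestrel.
```

A fixed, machine-checkable output shape per stage is also the kind of target a fine-tuned model can be trained to produce consistently, which fits the post's reason for moving to LoRA training.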

This whole venture became a product of its own, separate from my game. I romanticized it a bit, thinking that other indie devs might be interested in adding this capability to their games as a gameplay mechanic, and that I could turn it into a product. But first of all, it had to work for me. Yesterday I finally connected it to my game, and I'm enjoying the results so far, though I'm still fine-tuning it.