Launching today

HasData

Web scraping service for AI agents

587 followers

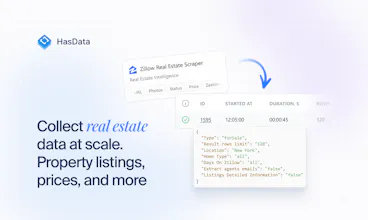

HasData is the managed web scraping service for data pipelines and AI agents. Send any URL, get clean JSON or Markdown back in one API call. We handle proxies, browser rendering, retries, and anti-bot. 50+ ready scrapers cover Google Search, Maps, News, Zillow, Indeed, and major e-commerce. AI extraction handles any other URL from a plain-text prompt. Use it from Claude, ChatGPT, or your own AI agent via MCP. CLI for everything else.

Most scraping services charge you whether the data comes back clean or not, and you end up paying for broken responses. How does the OpenClaw Skill integration work in practice, though? Does the agent automatically pick which scraper to use, or do you still have to configure each one manually?

HasData

@tina_chhabra hey, that's right - HasData only charges for successful API calls, so you don't pay for failed executions and don't receive broken responses.

Speaking of AI-related features - we built it in such a way that any agent can pick and configure the right scrapers itself. So far it works well, I think :)

HasData

@tina_chhabra Thanks Tina, glad the billing model landed.

As for OpenClaw, the Skill is fully automatic. You describe what you need in plain English ("get me top reviews for this restaurant"), and the Skill picks the right scraper from our catalog, runs it, and returns structured data.

Hey guys, congrats on the launch! I run an AI agency and we have quite a lot of projects where scraping is a big part of the stack - market research, price monitoring, lead enrichment, competitor tracking, AI agents with web data. So this looks super relevant for us. Scraping is always one of those parts that sounds easy in the beginning, but then you spend a lot of time on proxies, blocks, JS rendering and weird site structures. Just one question: how well does it work with websites that have heavy anti-bot protection or dynamic pages with a lot of JS rendering?

HasData

@konstantin_pinchukovskiy looks really relevant indeed. Anti-bot handling and JS rendering are all on our side, so you don't have to think about them. The request goes through our infrastructure with full browser rendering, and then you get clean data back. I'd say the fastest way to see how it works for your cases is to just try it: we give 10 000 free credits during launch week :)

HasData

@konstantin_pinchukovskiy Thanks Konstantin! Both are handled. Residential proxies, headless browsers, auto-retry, all under the hood on the ready scrapers. And we only bill on success, so failed retries cost you nothing.
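To make the "auto-retry, bill only on success" idea concrete, here is a minimal sketch of the logic from the caller's point of view. It is not HasData's implementation: `fetch_with_retry`, `max_attempts`, and `cost_per_success` are hypothetical names chosen for illustration, and the credit cost of a real request is an assumption.

```python
def fetch_with_retry(fetch, max_attempts=3, cost_per_success=1):
    """Call fetch() until it succeeds or the attempts run out.

    Returns (result, credits_charged). Failed attempts add nothing
    to the bill; only the one successful call is charged.
    All names here are hypothetical, for illustration only.
    """
    for _ in range(max_attempts):
        try:
            result = fetch()
        except Exception:  # a real client would catch narrower errors
            continue  # retry without charging anything
        return result, cost_per_success
    # Every attempt failed: no data, no charge.
    return None, 0
```

With a `fetch` callable that fails twice and then succeeds, the function returns the data and a charge of one credit; with one that always fails, it returns `(None, 0)`.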

Scraping for AI agents is a huge bottleneck right now. How does HasData handle complex anti-bot measures like Cloudflare or dynamic JS-heavy sites without constant manual tweaks?

HasData

@rivra_dev the whole point is that a request from us should be indistinguishable from one a real person makes in their browser. So we rotate proxies across several providers, we negotiate TLS the way an actual browser does (the cipher suites, the order, the extensions - all of it), and the servers our browsers run on are set up to look like real consumer hardware, not a datacenter VM.
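As a rough illustration of the proxy rotation part only (TLS negotiation and hardware setup are not covered), rotating across several providers can be sketched as a round-robin over provider pools. The provider names and proxy URLs below are made up, and nothing here reflects how HasData actually schedules its proxies.

```python
from itertools import cycle

# Hypothetical provider names and proxy URLs, for illustration only.
PROVIDERS = {
    "provider_a": ["http://a1.example:8080", "http://a2.example:8080"],
    "provider_b": ["http://b1.example:8080"],
}


def proxy_rotation(providers):
    """Yield (provider, proxy) pairs, switching provider on every request."""
    # Each provider gets its own endless cycle over its proxy list.
    pools = {name: cycle(proxies) for name, proxies in providers.items()}
    # Alternate between providers, taking the next proxy from each pool.
    for name in cycle(providers):
        yield name, next(pools[name])
```

Each call to `next()` on the generator gives the proxy to use for the next outgoing request, so consecutive requests never come from the same provider when more than one is configured.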

Hey guys, congrats on the launch! I've recently seen Firecrawl doing the same (I guess?) AI scraping agent - what's the difference between them and you, if any?

HasData

@gregory_yashkin Hey man, cheers, appreciate your kind words and support! That matters a lot to us 🫶

Yeah, we do love the guys from Firecrawl, I see them doing some amazing things lately. I believe that healthy competition helps everyone, especially the end users. I'd say we take a somewhat different approach to UI/UX, and we also separate no-code and API scrapers for different user segments - don't know, will let PH users decide today :)

Congrats on the launch! Used enough unstable scrapers to appreciate when someone takes this problem seriously.

Btw, is the TikTok scraper coming anytime soon?

HasData

@msvst hey man, appreciate your support today, means a lot! Yeah, we're now thinking of covering all the short-video platforms for scraping statistics, etc. It's definitely on our roadmap, and you just gave it an extra point!

Scaling scrapers for AI agents is usually a massive headache because of proxy rotation and constant site updates. Paying only for successful requests feels like a fair model. Do you provide a way to train the AI extraction for specific non-standard layouts, or is it purely automated? Also, what system is being used behind it?

HasData

@ritikgupta_01 Thanks Ritik! You guide it with AI Extract Rules, basically a schema you describe (fields, types, descriptions), and the LLM returns clean JSON. No selectors, no training.
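A minimal sketch of what such a schema (fields, types, descriptions) could look like, plus a basic check that a returned JSON record matches it. The rule format, the type names, and `check_record` are assumptions made for illustration; the thread does not show the actual AI Extract Rules syntax.

```python
# Hypothetical rule format: field name -> {"type": ..., "description": ...}.
# This is NOT the documented AI Extract Rules syntax, only an illustration.
RULES = {
    "title": {"type": "string", "description": "Product name as shown on the page"},
    "price": {"type": "number", "description": "Current price without currency symbol"},
    "in_stock": {"type": "boolean", "description": "Whether the item can be ordered now"},
}

# Map the schema's type names to Python types for a basic check.
PY_TYPES = {"string": str, "number": (int, float), "boolean": bool}


def check_record(record, rules):
    """Return a list of problems found in an extracted record (empty if none)."""
    problems = []
    for field, spec in rules.items():
        if field not in record:
            problems.append(f"missing field: {field}")
            continue
        value = record[field]
        # bool is a subclass of int in Python, so reject it for "number".
        if spec["type"] == "number" and isinstance(value, bool):
            problems.append(f"wrong type for {field}")
        elif not isinstance(value, PY_TYPES[spec["type"]]):
            problems.append(f"wrong type for {field}")
    return problems
```

A check like this is useful on the caller's side regardless of the service: the descriptions guide the extraction, while the types give you something to verify before the record enters a pipeline.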

Congratulations on the launch! Does the agent run on a schedule for recurring tasks, or only on demand?

HasData

@margarita_lubovskaya Thanks Margarita! Right now on-demand only. Scheduling is in the plans (pun not intended). Coming soon.