
What is Groq Chat?

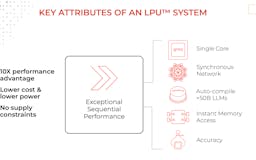

A new type of end-to-end processing unit system that provides the fastest inference for computationally intensive applications with a sequential component, such as AI language applications (LLMs).

Maker shoutouts


The Storipress workflow uses Groq to power our 'Grammarly for brand voice and compliance' feature, which detects text snippets that breach your style guide and suggests changes.

Combined with Llama 3, it allows us to offer the fastest top-performing LLM. Personally, my favorite LLM so far.

We integrated Groq into the pipeline that Touring uses to create the stories. The incredible speed that Groq offers makes it possible to provide users with a smoother UX and shorter waits.

Recent launches

Groq®

An LPU Inference Engine, with LPU standing for Language Processing Unit™, is a new type of end-to-end processing unit system that provides the fastest inference at ~500 tokens/second.

Groq Chat

This alpha demo lets you experience ultra-low latency performance using the foundational LLM, Llama 2 70B (created by Meta AI), running on the Groq LPU™ Inference Engine.