Launching today

Fluiq

LLM observability, evals & optimization in two lines of Python

3 followers

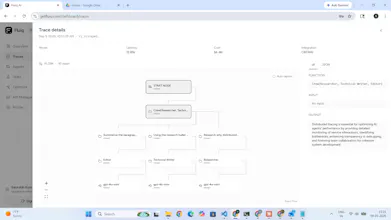

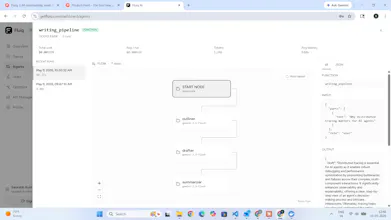

Most tools stop at dashboards. Fluiq doesn't.

1. Two lines of Python auto-instrument OpenAI, Claude, Gemini, LangChain, LangGraph, Google ADK, CrewAI, and MCP. No manual wrapping.
2. Evals block merges when the hallucination rate spikes.
3. Cross-pipeline benchmarking: see how your pipeline compares to similar ones across all Fluiq users.

Built by someone who felt this pain in production.
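To make the "evals block merges" idea concrete, here is a minimal sketch of a merge gate. It is not Fluiq's API: the function names, the result format (`{"hallucinated": bool}`), and the 5-point `max_increase` threshold are all assumptions chosen for illustration.

```python
def hallucination_rate(results):
    """Fraction of eval results flagged as hallucinated (hypothetical result format)."""
    if not results:
        return 0.0
    return sum(1 for r in results if r["hallucinated"]) / len(results)


def merge_allowed(baseline_rate, current_rate, max_increase=0.05):
    """Allow the merge only if the rate rose by at most max_increase (assumed threshold)."""
    return current_rate - baseline_rate <= max_increase


def ci_exit_code(baseline_results, current_results, max_increase=0.05):
    """Return 0 (pass) or 1 (fail), the convention CI systems use to block a merge."""
    ok = merge_allowed(
        hallucination_rate(baseline_results),
        hallucination_rate(current_results),
        max_increase,
    )
    return 0 if ok else 1


# Illustrative data: 1 of 20 answers hallucinated on main, 4 of 20 on the branch.
baseline = [{"hallucinated": False}] * 19 + [{"hallucinated": True}]
current = [{"hallucinated": False}] * 16 + [{"hallucinated": True}] * 4
exit_code = ci_exit_code(baseline, current)
```

A CI job would call something like `sys.exit(exit_code)` so that a non-zero status fails the check and blocks the merge.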

Hey Product Hunt! I'm Saurabh, solo founder of Fluiq.

I built this after a year of deploying production LLM agents at Tarifflo and constantly debugging cost spikes, hallucination regressions, and agent failures with tools that weren't built for this.

Fluiq is two lines of Python that automatically trace every OpenAI, Claude, Gemini, LangChain, LangGraph, Google ADK, and CrewAI call, with cost, latency, tokens, and RAGAS evals in one dashboard.
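For a rough picture of what gets recorded per call, here is a minimal sketch of a tracing wrapper. It is not Fluiq's implementation: `traced`, `TRACES`, `fake_llm_call`, the response shape, and the price are all hypothetical, and an auto-instrumenting library would apply a wrapper like this by patching the client for you rather than asking you to decorate functions.

```python
import time
from functools import wraps

TRACES = []  # in-memory stand-in for a tracing backend (assumption)


def traced(price_per_1k_tokens):
    """Record latency, token count, and estimated cost for each wrapped call."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            response = fn(*args, **kwargs)
            latency_s = time.perf_counter() - start
            # Assumes the response carries a usage block with a total token count.
            tokens = response["usage"]["total_tokens"]
            TRACES.append({
                "name": fn.__name__,
                "latency_s": latency_s,
                "tokens": tokens,
                "cost_usd": tokens / 1000 * price_per_1k_tokens,
            })
            return response
        return wrapper
    return decorator


@traced(price_per_1k_tokens=0.002)  # made-up price for illustration
def fake_llm_call(prompt):
    """Stand-in for a real model call; counts two tokens per word."""
    return {"text": prompt.upper(), "usage": {"total_tokens": len(prompt.split()) * 2}}


fake_llm_call("hello world")
```

After the call, `TRACES[-1]` holds one record with the function name, latency, token count, and estimated cost.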

Free tier, no credit card. Would love brutally honest feedback from anyone shipping LLMs in production.

Ask me anything!