ConvoProbe

Automated scenario testing for Dify chatbots

ConvoProbe lets you design multi-turn conversation scenarios and run them against your Dify chatbot automatically to measure response quality. Existing eval tools (LangSmith, Langfuse, Opik) work great for tracing and single-turn evaluation — but they don't support designing and executing multi-turn conversation scenarios end-to-end. ConvoProbe fills that gap.

Multi-turn evaluation is a huge gap in the current tooling. How do you measure "response quality" across turns: is it rule-based checks or LLM-as-judge? Awesome idea!

@mateuszjacni

Thanks! Great question — it's LLM-as-Judge, not rule-based.

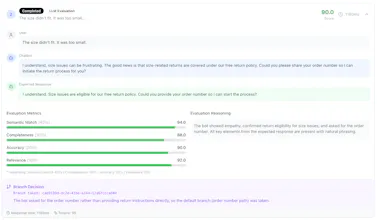

Each turn is scored on 4 criteria: semantic alignment, completeness, accuracy, and relevance. You write an "expected response" for each turn in the scenario, and the judge LLM compares the bot's actual response against it.
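To make the per-turn scoring concrete, here is a rough sketch of how it could be wired up. This is not ConvoProbe's actual code: the `Turn` class, the prompt wording, the 0 to 10 scale, the JSON reply format, and the `judge` callable are all assumptions made for illustration. The judge is passed in as a plain function so the example runs without any LLM provider.

```python
import json
from dataclasses import dataclass
from typing import Callable

# The four criteria named above; the snake_case keys are an assumption.
CRITERIA = ("semantic_alignment", "completeness", "accuracy", "relevance")


@dataclass
class Turn:
    """One scenario turn: what the simulated user says and what a good answer looks like."""
    user_message: str
    expected_response: str


def build_judge_prompt(turn: Turn, actual_response: str) -> str:
    """Build the text sent to the judge LLM (hypothetical wording)."""
    criteria = ", ".join(CRITERIA)
    return (
        "Compare the actual response with the expected response.\n"
        f"Score each criterion ({criteria}) from 0 to 10 and reply with JSON only.\n"
        f"User message: {turn.user_message}\n"
        f"Expected response: {turn.expected_response}\n"
        f"Actual response: {actual_response}\n"
    )


def score_turn(turn: Turn, actual_response: str,
               judge: Callable[[str], str]) -> dict[str, float]:
    """Ask the judge for scores and check that all four criteria came back."""
    parsed = json.loads(judge(build_judge_prompt(turn, actual_response)))
    missing = [c for c in CRITERIA if c not in parsed]
    if missing:
        raise ValueError(f"judge reply is missing criteria: {missing}")
    return {c: float(parsed[c]) for c in CRITERIA}


def fake_judge(prompt: str) -> str:
    """Stand-in for a real LLM call; always returns the same fixed scores."""
    return json.dumps({
        "semantic_alignment": 8,
        "completeness": 7,
        "accuracy": 9,
        "relevance": 8,
    })


turn = Turn("What plans do you offer?", "We offer Free and Pro plans.")
scores = score_turn(turn, "There are two plans: Free and Pro.", fake_judge)
```

In a real run, `fake_judge` would be replaced by a function that sends the prompt to whichever judge model you use and returns its text reply.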

I considered rule-based checks early on, but they're too brittle for natural language — the same correct answer can be phrased in many ways. LLM-as-Judge handles that well, especially when you give it clear evaluation criteria rather than exact string matching.

The branching logic also uses LLM evaluation — for example, "if the bot mentioned a specific product, ask a follow-up about pricing; otherwise, ask it to clarify." The LLM decides which branch to take at runtime based on what the bot actually said.
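The branching step can be sketched in the same spirit. Again, this is an illustration under assumptions, not the tool's real data model: `Branch`, `next_user_message`, and the `llm_decides` callable are hypothetical names, and the keyword stub only stands in for the LLM so the example runs offline.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Branch:
    """A natural-language condition plus the follow-up to send in each case."""
    condition: str
    if_true: str
    if_false: str


def next_user_message(branch: Branch, bot_response: str,
                      llm_decides: Callable[[str, str], bool]) -> str:
    """Pick the next simulated user message based on what the bot actually said."""
    if llm_decides(branch.condition, bot_response):
        return branch.if_true
    return branch.if_false


def keyword_stub(condition: str, bot_response: str) -> bool:
    """Offline stand-in for the LLM decision; a real run would ask a model
    whether bot_response satisfies condition."""
    return "widget pro" in bot_response.lower()


branch = Branch(
    condition="The bot mentioned a specific product",
    if_true="How much does it cost?",
    if_false="Could you clarify which product you mean?",
)

follow_up = next_user_message(branch, "I'd recommend the Widget Pro.", keyword_stub)
fallback = next_user_message(branch, "We have several options.", keyword_stub)
```

Keeping the condition as free text is what lets an LLM evaluate it at runtime; the product name "Widget Pro" is made up for the example.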