Launching today

ClearMesh

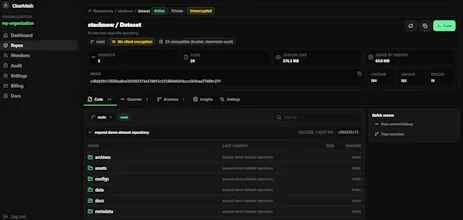

A Git-like platform for datasets, models, and binary folders

9 followers

ClearMesh brings Git-like version control to large files. It helps AI, VFX, research, data, and engineering teams commit, push, clone, sync, branch, and mount datasets, model files, media assets, CAD exports, and binary folders. Files are stored as chunks in S3/R2-compatible Vault storage, with optional client-side encryption. Unchanged chunks can be reused across versions, and repos can be mounted read-only so tools can stream files from a normal path. Would this fit your workflow?

Hey Product Hunt, I’m Ravik, the dev behind ClearMesh.

Source code has Git, but large files are still stuck with shared drives, zip files, Git LFS pain, full re-uploads, and messy S3 folders.

ClearMesh gives large binary folders a Git-like workflow.

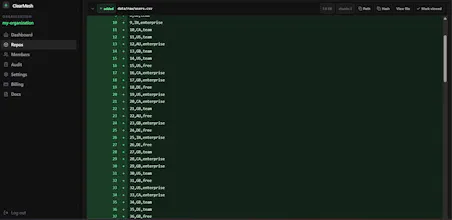

You can commit a dataset, model checkpoint, media folder, CAD export, research output, or any large project folder, push it as chunks to object storage, clone or sync it on another machine, browse it in a web UI, and mount it read-only when a tool needs a normal file path.

The practical win is that ClearMesh does not treat every version like a brand-new upload. Files are split into chunks, so when only part of a large folder changes, unchanged chunks can be reused instead of uploaded and stored again.
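To make the chunk reuse idea concrete, here is a minimal sketch in Python. It assumes fixed-size chunks identified by their SHA-256 hash. That is an illustrative assumption, not a description of ClearMesh's internal chunking scheme, and the names `chunk_ids` and `new_chunks` are hypothetical.

```python
import hashlib

# Assumption for illustration: tiny fixed-size chunks so the example is easy to follow.
CHUNK_SIZE = 4


def chunk_ids(data: bytes, chunk_size: int = CHUNK_SIZE) -> list[str]:
    """Split data into fixed-size chunks and return each chunk's SHA-256 hex digest."""
    return [
        hashlib.sha256(data[i:i + chunk_size]).hexdigest()
        for i in range(0, len(data), chunk_size)
    ]


def new_chunks(previous: bytes, current: bytes) -> list[str]:
    """Return the IDs of chunks in `current` that are not already stored for `previous`."""
    stored = set(chunk_ids(previous))
    return [cid for cid in chunk_ids(current) if cid not in stored]


v1 = b"AAAABBBBCCCCDDDD"
v2 = b"AAAABBBBXXXXDDDD"  # only the third chunk differs from v1

to_upload = new_chunks(v1, v2)
print(len(chunk_ids(v2)), "chunks total,", len(to_upload), "to upload")
```

In this toy case only one of the four chunks needs to be uploaded for the second version; the other three are already in storage under the same hash.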

Simple example:

Say your team has a 100 GB dataset.

Without a versioned chunk workflow, version 1, version 2, and version 3 may become three separate 100 GB folders in object storage. If only 5 GB changed each time, you are still storing and moving a lot of duplicate data.

With ClearMesh, unchanged chunks can be reused across versions, so the new version mostly stores the changed parts. When another machine syncs the repo, it can skip chunks it already has instead of pulling the same bytes again.
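As a rough back-of-the-envelope check of the example above, the sketch below compares the two approaches. It is idealized: it assumes the 5 GB of changes map cleanly onto changed chunks and ignores metadata overhead, so real numbers will differ. The function names are hypothetical.

```python
def full_copy_storage_gb(full_size_gb: float, versions: int) -> float:
    """Storage used when every version is kept as a separate full copy."""
    return full_size_gb * versions


def chunked_storage_gb(full_size_gb: float, changed_gb: float, versions: int) -> float:
    """Idealized storage when each new version only adds its changed chunks."""
    return full_size_gb + changed_gb * (versions - 1)


full_copies = full_copy_storage_gb(100, versions=3)
chunked = chunked_storage_gb(100, changed_gb=5, versions=3)

print(f"full copies: {full_copies} GB, chunked: {chunked} GB")
```

Under these assumptions, three versions take about 300 GB as full copies and about 110 GB with chunk reuse.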

The read-only mount helps when you do not need the whole repo immediately. Instead of downloading a full 100 GB folder before doing anything, you can mount the repo as a normal read-only directory. Your tools see regular files and paths, while ClearMesh fetches the underlying chunks only when those byte ranges are read.

If a script only reads configs, metadata, previews, or part of a large artifact, it does not need to pull everything first.

If a tool reads the whole file, ClearMesh will fetch all required chunks. It is not magic zero-download storage; it is on-demand access through a normal filesystem path.
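The on-demand behavior can be pictured as mapping each read to the chunks that cover it. The sketch below assumes fixed-size 4 MiB chunks and a hypothetical helper `chunks_for_read`; the actual chunk size and lookup logic in ClearMesh are not specified here.

```python
# Assumption for illustration: fixed 4 MiB chunks.
CHUNK_SIZE = 4 * 1024 * 1024


def chunks_for_read(offset: int, length: int, chunk_size: int = CHUNK_SIZE) -> range:
    """Return the indices of the chunks that cover bytes [offset, offset + length)."""
    if length <= 0:
        return range(0)
    first = offset // chunk_size
    last = (offset + length - 1) // chunk_size
    return range(first, last + 1)


# A small header read touches only the first chunk.
header = list(chunks_for_read(0, 1024))

# A read that straddles a chunk boundary touches two chunks.
boundary = list(chunks_for_read(CHUNK_SIZE - 10, 20))

# Reading a whole 400 MiB file touches every chunk.
whole_file = chunks_for_read(0, 100 * CHUNK_SIZE)

print(header, boundary, len(whole_file))
```

A small read pulls one or two chunks, while a full read of the file still needs all of them, which matches the "not magic zero-download storage" point above.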

For teams dealing with datasets, model artifacts, media, VFX assets, CAD exports, or research folders, this can mean:

• less duplicate storage across versions

• fewer full-folder re-uploads

• less repeated transfer and egress waste

• faster repeat syncs when most files are unchanged

• cleaner history for large artifacts

• normal S3/R2-compatible storage underneath, instead of a black box

I tested the mount with a real GGUF model file: the mounted file hash matched the original, random reads matched, boundary reads matched, and llama.cpp loaded it from the mounted path.

ClearMesh includes:

• Rust CLI

• chunked storage

• S3/R2-compatible Vault

• commits and branches

• clone and sync

• read-only mount

• optional client-side encryption

• web repo browser

I’m launching here because I want feedback from people who deal with large files every day.

Thanks for taking a look.