Launched this week

Cerememory

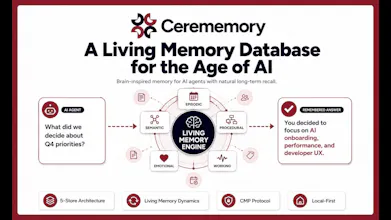

A Living Memory Database for the Age of AI

Brain-inspired memory architecture. Connect AI agents to lifelike memory shaped by the concepts of time and dreams, building natural long-term recall. Cerememory is a living memory database for the age of AI. Built in Rust, it provides persistent, brain-inspired memory that any LLM can use -- regardless of provider. Memories stored in Cerememory are not static rows in a table; they decay, evolve, form associations, and respond to emotion, just as biological memory does.

Hey there!

Today we're launching Cerememory, an open-source, brain-inspired memory database that gives AI systems persistent, evolving, lifelong memory.

I want to start with the question that drove this entire project, because I think it matters more than any feature list.

Long-term memory for AI agents is one of the most active problems in our field right now. Between vendor memory features, vector DBs paired with RAG, and sophisticated context engineering, there are already a lot of brilliant implementations out there.

But when we stepped back and looked at all of them, we kept arriving at the same question.

What is the ideal endpoint for AI memory? What are we actually trying to build toward?

The answer was sitting closer than we expected. It is the living memory system that every human being already runs.

Human memory is not just storage and retrieval.

Important experiences stay vivid, trivial ones fade. Similar memories blur into each other. Pulling on one thread reactivates a whole web of connected memories. A familiar smell can transport you back to childhood. While you sleep, the day gets reorganized and consolidated into long-term knowledge.

This is an extraordinarily sophisticated information processing system, polished by hundreds of thousands of years of evolution.

So the question Cerememory is built around is not "how do we give AI memory?"

It is "how much of this beautifully refined human memory architecture can we actually bring into the AI memory layer?" Implemented not as a data structure, but as a runtime.

◆ Three principles guide every design decision

Memory is dynamic, not static.

Important things get reinforced. Unused things fade. Recalled memories shift slightly each time. We implement decay, interference, reactivation, sleep-like consolidation, and integration as live runtime processes, not as schema fields.

Memory must carry not just content, but reason.

Every record has a structured meta-memory plane recording why it exists: intent, rationale, evidence, alternatives, decisions, and a typed context graph. You can search not just what an agent did, but why it decided to do it.
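
To make this concrete, here is a minimal Python sketch of a record that carries its own "why". The field names follow the list above, but the class names and the `search_by_rationale` helper are hypothetical illustrations, not Cerememory's actual schema or API, and the typed context graph is left out for brevity.

```python
from dataclasses import dataclass, field


@dataclass
class MetaMemory:
    """Hypothetical 'why' plane attached to a record (not the real schema)."""
    intent: str
    rationale: str
    evidence: list[str] = field(default_factory=list)
    alternatives: list[str] = field(default_factory=list)
    decision: str = ""


@dataclass
class MemoryRecord:
    content: str      # what the agent did or learned
    meta: MetaMemory  # why it did so


def search_by_rationale(records: list[MemoryRecord], term: str) -> list[MemoryRecord]:
    """Naive substring search over the 'why' fields instead of the content."""
    needle = term.lower()
    return [
        r for r in records
        if needle in r.meta.rationale.lower() or needle in r.meta.intent.lower()
    ]


records = [
    MemoryRecord(
        content="Switched the cache from Redis to an in-process LRU.",
        meta=MetaMemory(
            intent="reduce tail latency",
            rationale="network hop to the cache dominated p99",
            evidence=["p99 trace from load test"],
            alternatives=["keep Redis, add connection pooling"],
            decision="use in-process LRU",
        ),
    ),
    MemoryRecord(
        content="Pinned the tokenizer version.",
        meta=MetaMemory(intent="reproducible builds", rationale="upstream broke compatibility"),
    ),
]

# The query matches the reasoning, even though "p99" never appears in the content.
hits = search_by_rationale(records, "p99")
```

A real implementation would presumably index these fields rather than scan them; the point here is only that the reasoning is a first-class, searchable part of the record.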

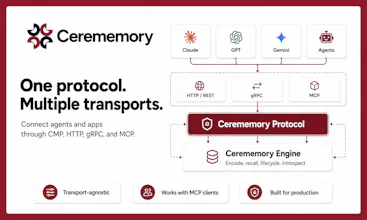

The memory layer must be independent of any one AI.

Through our own protocol, CMP, any model (Claude, GPT, Gemini, or whatever comes next) can read and write to the same memory layer. Data is local-first and fully exportable. Switch your AI or cancel a service, and the memory still belongs to you.

◆ Architecture: modeled directly on the brain

Episodic store (hippocampus): time-series events, what happened when and where.

Semantic store (neocortex): facts and concepts as a typed graph.

Procedural store (basal ganglia): habits, preferences, ways of doing things.

Emotional store (amygdala): affective metadata that modulates retention across all other stores.

Working store (prefrontal cortex): high-speed, capacity-limited, in-memory cache.

Alongside these five curated stores, a separate Raw Journal preserves the verbatim conversation, tool I/O, and scratchpad as a forensic plane.

Summaries flow into the five stores. Originals live in the journal. Backlinks let you jump from a summary back to the source in one hop.
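
As a rough illustration of the split between curated stores and the raw journal, the Python sketch below models a summary that keeps backlinks to its verbatim sources. The type names (`Store`, `JournalEntry`, `Summary`) and the `sources` helper are assumptions made for this example, not Cerememory's real data model.

```python
from dataclasses import dataclass
from enum import Enum


class Store(Enum):
    EPISODIC = "episodic"      # hippocampus
    SEMANTIC = "semantic"      # neocortex
    PROCEDURAL = "procedural"  # basal ganglia
    EMOTIONAL = "emotional"    # amygdala
    WORKING = "working"        # prefrontal cortex


@dataclass(frozen=True)
class JournalEntry:
    entry_id: str
    verbatim: str  # original text, kept untouched


@dataclass(frozen=True)
class Summary:
    store: Store
    text: str
    source_ids: tuple[str, ...]  # backlinks into the raw journal


journal = {
    "j1": JournalEntry("j1", "User: I moved to Osaka last spring."),
    "j2": JournalEntry("j2", "User: The commute is much shorter now."),
}

summary = Summary(Store.EPISODIC, "User relocated to Osaka; commute improved.", ("j1", "j2"))


def sources(summary: Summary, journal: dict[str, JournalEntry]) -> list[str]:
    """Follow backlinks from a summary to the verbatim originals in one hop."""
    return [journal[i].verbatim for i in summary.source_ids]


originals = sources(summary, journal)
```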

◆ The dynamics are where it stops feeling like a database

Memories decay along a modified power-law curve. Repeated recall stabilizes them. Strong emotional intensity slows the decay.
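
For intuition, here is one way such a curve could be sketched in Python. This is not Cerememory's actual formula: the functional form, the parameter names, and every constant below are assumptions chosen only to show recall and emotion slowing a power-law decay.

```python
def retention(age_hours: float, recalls: int = 0, emotion: float = 0.0,
              exponent: float = 0.5, scale_hours: float = 24.0) -> float:
    """Illustrative power-law forgetting curve; returns a fidelity in (0, 1].

    All parameter names and constants are assumptions for this sketch.
    """
    if age_hours < 0 or recalls < 0 or not 0.0 <= emotion <= 1.0:
        raise ValueError("invalid input")
    # Each recall and stronger emotion stretch the time scale, slowing decay.
    stability = scale_hours * (1 + recalls) * (1 + emotion)
    return (1 + age_hours / stability) ** -exponent


week = 24 * 7
plain = retention(week)                   # never recalled, emotionally neutral
rehearsed = retention(week, recalls=3)    # recalled three times
emotional = retention(week, emotion=0.9)  # emotionally intense
```

Under these assumed constants, a week-old memory that was rehearsed or emotionally charged keeps noticeably more fidelity than a neutral, untouched one.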

Similar memories accumulate interference noise over time, reproducing the blurring you experience yourself.

Recall fires spreading activation through related memories, partially restoring decayed ones.
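
Spreading activation is a well-known technique, and a minimal Python version looks something like the sketch below. The graph layout, the `boost` and `damping` values, and both function names are assumptions for illustration; the real engine may weight and propagate activation differently.

```python
from collections import deque


def spread_activation(graph, start, boost=0.5, damping=0.5, max_hops=2):
    """Breadth-first spreading activation over an association graph.

    graph maps a memory id to {neighbor_id: association_weight in [0, 1]}.
    Returns {memory_id: activation}; the recalled memory itself gets `boost`.
    """
    activation = {start: boost}
    queue = deque([(start, boost, 0)])
    while queue:
        node, energy, hops = queue.popleft()
        if hops == max_hops:
            continue
        for neighbor, weight in graph.get(node, {}).items():
            passed = energy * weight * damping
            # Keep only the strongest activation reaching each memory.
            if passed > activation.get(neighbor, 0.0):
                activation[neighbor] = passed
                queue.append((neighbor, passed, hops + 1))
    return activation


def reactivate(fidelity, activation):
    """Partially restore decayed memories, capping fidelity at 1.0."""
    return {m: min(1.0, f + activation.get(m, 0.0)) for m, f in fidelity.items()}


graph = {
    "beach_trip": {"sunscreen_smell": 0.8, "old_friend": 0.4},
    "sunscreen_smell": {"childhood_pool": 0.5},
}
fidelity = {"beach_trip": 0.6, "sunscreen_smell": 0.3, "old_friend": 0.2, "childhood_pool": 0.1}

activation = spread_activation(graph, "beach_trip")
restored = reactivate(fidelity, activation)
```

Recalling `beach_trip` lifts the memories linked to it, with the effect shrinking at each hop, so distant associations get only a small nudge.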

A "dream tick" lifecycle runs in the background (default every 24 hours), groups raw journal entries by topic, summarizes them into episodic memory, and conditionally promotes factual content into semantic memory. Just like sleep does for us.
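
The shape of one consolidation pass can be sketched in a few lines of Python. Everything here is a simplified stand-in: the `dream_tick` function, the entry fields, and the `is_factual` predicate are hypothetical, string joining takes the place of a real summarizer, and the 24-hour scheduling is left out.

```python
from collections import defaultdict


def dream_tick(journal_entries, is_factual):
    """One consolidation pass: group by topic, summarize, promote facts.

    journal_entries: list of dicts with 'id', 'topic', and 'text' keys.
    is_factual: caller-supplied predicate deciding semantic promotion.
    """
    by_topic = defaultdict(list)
    for entry in journal_entries:
        by_topic[entry["topic"]].append(entry)

    episodic, semantic = [], []
    for topic, entries in by_topic.items():
        # Stand-in for a real summarizer: simply join the texts.
        episodic.append({
            "topic": topic,
            "summary": " ".join(e["text"] for e in entries),
            "sources": [e["id"] for e in entries],  # backlinks to the journal
        })
        # Only entries that pass the predicate are promoted to semantic memory.
        for e in entries:
            if is_factual(e["text"]):
                semantic.append({"fact": e["text"], "source": e["id"]})
    return episodic, semantic


entries = [
    {"id": "j1", "topic": "travel", "text": "Booked flights to Sapporo for February."},
    {"id": "j2", "topic": "travel", "text": "Fact: the user prefers aisle seats."},
    {"id": "j3", "topic": "work", "text": "Debugged the deploy script for an hour."},
]

episodic, semantic = dream_tick(entries, is_factual=lambda text: text.startswith("Fact:"))
```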

Emotional modulation deserves a dedicated note. Every memory carries an 8-dimensional emotion vector based on Robert Plutchik's model (joy and sadness, trust and disgust, fear and anger, surprise and anticipation).

Emotionally intense memories decay more slowly and surface preferentially when the query carries similar emotion.
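
Here is a small Python sketch of how emotional similarity could tilt retrieval. The eight dimensions come from Plutchik's model as listed above; the cosine similarity, the 0.7 relevance weight, and the function names are assumptions for illustration rather than Cerememory's actual scoring.

```python
import math

PLUTCHIK = ("joy", "sadness", "trust", "disgust", "fear", "anger", "surprise", "anticipation")


def emotion_vector(**intensities):
    """Build an 8-dimensional vector; unspecified emotions default to 0.0."""
    unknown = set(intensities) - set(PLUTCHIK)
    if unknown:
        raise ValueError(f"unknown emotions: {sorted(unknown)}")
    return [float(intensities.get(name, 0.0)) for name in PLUTCHIK]


def cosine(a, b):
    """Cosine similarity; defined as 0.0 when either vector is all zeros."""
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 0.0 if norm == 0 else sum(x * y for x, y in zip(a, b)) / norm


def rank(memories, query_emotion, relevance_weight=0.7):
    """Blend content relevance with emotional similarity (weights are assumed)."""
    def score(m):
        similarity = cosine(m["emotion"], query_emotion)
        return relevance_weight * m["relevance"] + (1 - relevance_weight) * similarity
    return sorted(memories, key=score, reverse=True)


memories = [
    {"id": "routine_standup", "relevance": 0.6, "emotion": emotion_vector()},
    {"id": "launch_day", "relevance": 0.6, "emotion": emotion_vector(joy=0.9, anticipation=0.7)},
]

ranked = rank(memories, query_emotion=emotion_vector(joy=0.8))
```

With equal content relevance, the joyful memory outranks the neutral one when the query itself carries joy.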

The result: an agent on Cerememory does not "remember everything equally." It remembers the moments that mattered to you.

◆ Three technical points worth highlighting

Meta-memory plane.

Search reasoning, not just content. Track long-running decisions. Let agents explain themselves. Let multiple agents debate inside a shared rationale space.

Raw Journal with Human and Perfect modes.

Human mode returns realistic, fidelity-weighted, slightly noisy recall. Perfect mode returns the verbatim original. You get the natural "imperfect" feel of human memory and full forensic auditability inside the same system, because the raw journal is a separate plane by design.
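
The contrast between the two modes can be sketched in Python as follows. The `recall` function and the word-masking noise are assumed stand-ins: the post does not specify what noise model Human mode applies, so treat this only as an illustration of "same record, two read paths".

```python
import random


def recall(entry, mode="human", seed=None):
    """Return the verbatim text in 'perfect' mode, a degraded copy in 'human' mode.

    entry: dict with 'text' and 'fidelity' (0.0 to 1.0). Word masking is an
    assumed stand-in for whatever noise the real system applies.
    """
    if mode == "perfect":
        return entry["text"]
    if mode != "human":
        raise ValueError(f"unknown mode: {mode!r}")
    rng = random.Random(seed)
    words = entry["text"].split()
    # Each word survives with probability equal to the memory's fidelity.
    return " ".join(w if rng.random() < entry["fidelity"] else "[...]" for w in words)


entry = {"text": "The deploy failed at 3am because the token expired.", "fidelity": 0.6}

verbatim = recall(entry, mode="perfect")
fuzzy = recall(entry, mode="human", seed=42)
```

Because the journal entry itself is never modified, the degraded read and the verbatim read can coexist: the noise is applied at recall time, not at storage time.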

CMP protocol.

We treat HTTP, gRPC, and MCP as three transports for one underlying protocol. The semantics are identical regardless of how you connect. Claude Code, Codex, Gemini CLI, Cursor, Windsurf, or any other MCP client can plug in today.

Our position: the memory layer for the AI era should be defined by a protocol, not by an API.

◆ Why open source

Long-term memory for AI agents is still an open problem. There is no agreed best practice yet. New architectures and context designs ship every week.

We did not want to hoard a layer this fundamental inside one company.

Memory is the foundation of identity. Whatever shape it takes for AI, it is too important to be owned by any single vendor.

We are based in Japan, and from Tokyo we wanted to offer an honest attempt at the memory system that AI and humans will share in the next era.

Open source is our way of contributing to that. We built the first version. From here, we want the global community to build on it with us.

Built in Rust. MIT licensed. Production-shaped from day one (Bearer auth, optional ChaCha20-Poly1305 at-rest encryption, enforced gRPC TLS, Prometheus metrics, x-request-id correlation, tamper-evident JSONL audit log).

We would love your honest feedback, especially from anyone who has fought LLM amnesia in production, built agentic systems that need long-term context, or has strong opinions on what AI memory should look like five years from now.

The repo is open. PRs and Issues are welcome.

Thanks for taking the time to look. Let's build the memory layer for the AI era together.