TrueFoundry AI Gateway

Connect, observe & control LLMs, MCPs, Guardrails & Prompts

2.1K followers


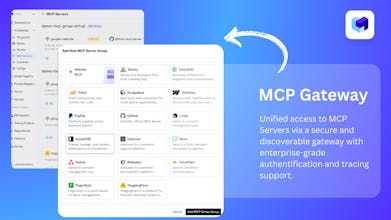

TrueFoundry’s AI Gateway is a production-ready control plane to experiment with, monitor, and govern your agents. Experiment by connecting all agent components (Models, MCPs, Guardrails, Prompts & Agents) in the playground. Maintain complete visibility over responses with traces and health metrics. Govern by setting rules and limits on request volumes, cost, response content (Guardrails), and more. Used in production for thousands of agents by multiple F100 companies!


TrueFoundry AI Gateway

Hey Product Hunt, Anuraag here, co-founder at TrueFoundry 👋

When we first thought about a “gateway”, we imagined a simple LLM routing layer in front of models. Pick a model, send traffic, switch if needed. Easy… or so we thought.

Once teams started putting agents and MCPs into production, we realised the hard part wasn’t just routing. It was:

Different MCP auth flows for every internal system.

Traces and logs that break once you chain models, tools, and agents.

Data residency and “this data must stay in this region” rules.

Security asking “who called what, when, with which payload?”

Product teams needing to swap models without rewriting everything.

So the “router” slowly turned into a proper control plane that sits between your apps, LLMs, and MCPs - making sure traffic is reliable, auditable, compliant, and still fast for developers to ship on.

Today, TrueFoundry’s AI Gateway sits at the center of production traffic across 10+ Fortune 500s, powering their internal copilots and agents while platform teams use it to keep costs, safety, and observability under control - rather than maintaining a pile of custom glue code.

🔗 Sign Up Link: Please try and give us feedback! 🙏

🎁 Launch perk: 3‑month free trial for the PH community

If you’re wrestling with MCP auth, logging, or data policies, drop your setup in the comments - curious to see how you are wiring your stack today!

@agutgutia Love it. Identity is the new control plane for agentic AI. This gateway glues all the components together seamlessly for enterprise use.

TrueFoundry AI Gateway

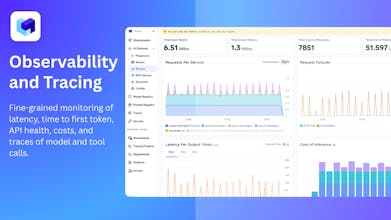

It seems useful from a security and monitoring perspective, and the detailed traces could help. I wonder what exactly gets traced here?

TrueFoundry AI Gateway

@shrey_shrivastava We provide very detailed traces covering LLM, MCP, and Guardrail invocations, among others.

TrueFoundry AI Gateway

@shrey_shrivastava Basically, AI Gateway is the entry point to all components (models, MCPs, Guardrails & Prompts), and all of those get traced here. Some examples:

If a request went to the LLM, ended up invoking an MCP server, and then triggered a guardrail, you will see all of them logged in the traces.

You get to see your prompts, completions, tool call results, latency, time to first token etc.

If one of your model fallback or rate-limiting rules got applied, that also gets captured in the traces and is flagged separately.

Besides these, you can also monitor aggregate metrics at the request level, MCP level, and guardrail level. There you can observe costs, error rates, and overall latency, and break these down by users, models, or any custom metadata.
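To make "break these down by users, models, or any custom metadata" concrete, here is a minimal sketch of that kind of roll-up over exported trace records. The record fields (`user`, `model`, `cost_usd`, `latency_ms`, `error`) and the `summarize` helper are assumptions made up for illustration, not the gateway's actual trace schema or API.

```python
from collections import defaultdict
from typing import Any, Dict, Iterable, Mapping


def summarize(records: Iterable[Mapping[str, Any]], key: str) -> Dict[str, Dict[str, float]]:
    """Group hypothetical trace records by `key` and compute per-group metrics."""
    totals = defaultdict(lambda: {"requests": 0, "errors": 0, "cost_usd": 0.0, "latency_ms": 0.0})
    for record in records:
        bucket = totals[str(record[key])]
        bucket["requests"] += 1
        bucket["errors"] += 1 if record["error"] else 0
        bucket["cost_usd"] += record["cost_usd"]
        bucket["latency_ms"] += record["latency_ms"]

    # Turn the running totals into rates and averages.
    return {
        group: {
            "requests": t["requests"],
            "error_rate": t["errors"] / t["requests"],
            "cost_usd": t["cost_usd"],
            "avg_latency_ms": t["latency_ms"] / t["requests"],
        }
        for group, t in totals.items()
    }


# Hypothetical records; field names are illustrative only.
records = [
    {"user": "alice", "model": "m1", "cost_usd": 0.5, "latency_ms": 100, "error": False},
    {"user": "alice", "model": "m2", "cost_usd": 0.25, "latency_ms": 300, "error": True},
    {"user": "bob", "model": "m1", "cost_usd": 0.25, "latency_ms": 200, "error": False},
]
by_user = summarize(records, "user")
by_model = summarize(records, "model")
```

The same function works for any metadata key present on every record, which is the point of slicing by custom metadata.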

Please let us know if there is something else you would want logged or traced that we are not covering here. One of our major goals for this launch is to collect feedback on the product! :)

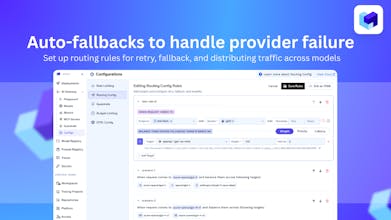

Really cool! Quick question — how does fallback actually work under the hood? Does the gateway retry with a secondary model automatically?

TrueFoundry AI Gateway

@bhavesh_patel1 You need to enable a routing config to use fallback. If a model fails with a non-retriable error (401, 403, 5xx), we fall back to the configured fallback models. If there is a spike in errors from a model, we mark that model unhealthy and put it in "cooldown" mode for 5 minutes.
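For readers who want a mental model of the behaviour described above, here is a rough sketch of fallback with a cooldown. It is not the gateway's implementation: `FallbackRouter`, `ModelError`, and the spike threshold of 3 errors are assumptions invented for illustration; only the status codes (401, 403, 5xx) and the 5-minute cooldown come from the comment.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

COOLDOWN_SECONDS = 5 * 60      # from the comment: unhealthy models cool down for 5 minutes
ERROR_SPIKE_THRESHOLD = 3      # assumption: how many errors count as a "spike"


class ModelError(Exception):
    """Hypothetical error carrying the HTTP status of a failed model call."""

    def __init__(self, status: int):
        super().__init__(f"model call failed with HTTP {status}")
        self.status = status


def triggers_fallback(status: int) -> bool:
    # Statuses named in the comment: 401, 403, and any 5xx.
    return status in (401, 403) or 500 <= status <= 599


@dataclass
class FallbackRouter:
    models: List[str]                         # primary first, then fallbacks in order
    call: Callable[[str, str], str]           # (model, prompt) -> completion
    clock: Callable[[], float] = time.monotonic
    _errors: Dict[str, int] = field(default_factory=dict, init=False)
    _cooldown_until: Dict[str, float] = field(default_factory=dict, init=False)

    def healthy(self, model: str) -> bool:
        return self.clock() >= self._cooldown_until.get(model, 0.0)

    def complete(self, prompt: str) -> str:
        last_error: Optional[ModelError] = None
        for model in self.models:
            if not self.healthy(model):
                continue  # skip models that are cooling down
            try:
                result = self.call(model, prompt)
            except ModelError as err:
                if not triggers_fallback(err.status):
                    raise  # other errors are surfaced to the caller
                last_error = err
                self._errors[model] = self._errors.get(model, 0) + 1
                if self._errors[model] >= ERROR_SPIKE_THRESHOLD:
                    # Mark the model unhealthy and start its cooldown window.
                    self._cooldown_until[model] = self.clock() + COOLDOWN_SECONDS
                    self._errors[model] = 0
                continue  # try the next model in the list
            self._errors[model] = 0
            return result
        raise RuntimeError("no healthy model could serve the request") from last_error
```

In this sketch a "spike" is a simple consecutive-error count; a real gateway would more likely use an error rate over a time window.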

Who is your main competitor and how does TrueFoundry AI Gateway differentiate itself from them?

TrueFoundry AI Gateway

@danagoston For competitors, we usually encounter incumbent API gateways or internal, in-house gateway solutions. They start out simple but quickly become complex to build and maintain - especially when it comes to data residency, advanced configurations, and overall time to market.

Reindeer

Great work on the launch!

Curious…how seamless is it to integrate existing internal copilots or agents without modifying their current workflows?

TrueFoundry AI Gateway

@_muzammil_kt The gateway integrates seamlessly with any copilot or agent built on OpenAI-compatible endpoints, without requiring changes to your existing workflows.
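As an illustration of what "OpenAI-compatible" means in practice, the sketch below builds a chat completion request where the only thing that changes between a provider and a gateway is the base URL and key. The gateway URL, key, and model name are placeholders, and `build_chat_request` is a hypothetical helper; check the gateway's own documentation for the real endpoint and auth details.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, user_message: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values: swap in your real gateway base URL, key, and model name.
request = build_chat_request(
    base_url="https://gateway.example.com/v1",
    api_key="test-key",
    model="example-model",
    user_message="Hello",
)
# urllib.request.urlopen(request) would send it; omitted here so the sketch runs offline.
```

Because the request shape is unchanged, an existing agent typically only needs its base URL and key reconfigured rather than its calling code rewritten.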

Ripplica

Congrats on the launch, this looks really well thought out!

I’m curious about your approach to regional data rules - can customers control where data at rest is stored so it complies with local regulations (EU, US-only, etc.)?

TrueFoundry AI Gateway

@dhruv_bhardwaj1 Totally! You can attach your own storage bucket in that particular region to ensure your data compliance needs are met.

TrueFoundry AI Gateway

@dhruv_bhardwaj1 Yes, you can configure the destination where your data at rest is stored. You can also run our Gateway on-prem, so that even your data in motion stays within your VPC and you are fully in control of which region the Gateway runs in.

I have used OpenRouter, LiteLLM, Vercel AI Gateway, how is it better than those? Just trying to understand, specifically from a developer perspective.

TrueFoundry AI Gateway

@iamshnik OpenRouter allows you to talk to different models without signing up with different providers. Vercel AI Gateway works similarly for models.

TrueFoundry and LiteLLM are AI gateways where users bring their own keys, and which can be self-hosted within the enterprise. We also provide complete observability by default, plus load-balancing and rate-limiting configs that can automatically switch your application over when a model provider is down. The gateway also adds a prompt management layer, wherein the gateway itself can substitute the prompt for you, keeping your client-side code very small. It also lets you talk to multiple MCP servers with a single token, so as a developer you don't need to worry about implementing token management.