Flux

Fix production bugs by replaying them locally

96 followers

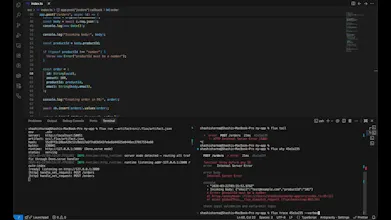

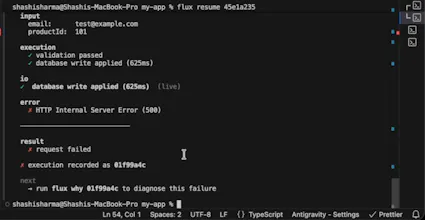

Flux records API executions so you can replay failures locally, fix them, and resume execution safely. Instead of guessing from logs, you get the exact request, inputs, and behavior. Same request. Same IO. Same outcome.

Flux

One thing that surprised me while building this:

The hardest part wasn’t capturing requests — it was making them replayable deterministically.

Especially when:

- external APIs change

- async workflows are involved

- retries behave differently

That’s where most debugging tools break.

Curious — for people working with APIs or AI pipelines:

What’s the hardest bug you’ve had to debug in production?

@shashisrun Had a webhook that started sending different payload shapes on weekends. The third party's A/B testing was hitting a different serializer, but only on Saturdays. Took two days of adding logs and waiting for the next Saturday to reproduce it. Staging never saw it because their test environment didn't have the same A/B config.

Being able to just replay the actual request would've cut that from days to minutes.

Flux

@alan_silverstreams that’s such a perfect example — the “only on Saturdays” bugs are the worst 😅

A/B configs + third-party behavior is exactly where things become impossible to reproduce reliably.

And yeah — that’s the core idea. Instead of adding more logs and waiting for it to happen again, just replay the exact request with the same context.

Curious — in cases like this, do you usually end up adding more observability, or building custom replay/debug tooling internally?

@shashisrun Usually more observability first: structured logs, plus request dumping for the weird ones. I've never really had a team with time to build proper replay tooling internally, so something off-the-shelf is appealing if it captures enough context.

@shashisrun How do you deal with non-deterministic bits like timestamps or external API flakiness during replay?

Flux

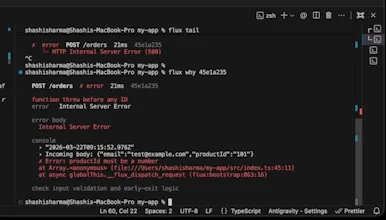

@swati_paliwal great question — this is actually the hardest part.

What I’ve been doing is separating deterministic vs non-deterministic parts of execution.

– For things like timestamps/randomness → they get recorded and replayed as-is

– For external APIs → responses are captured and stubbed during replay

– For retries/async flows → the sequence + timing is preserved from the original execution

So instead of trying to simulate behavior, you’re effectively “re-running” the same execution with controlled inputs.

Still evolving this, but that’s the general approach so far.
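The record-and-replay split described above can be sketched in Python. This is a minimal illustration under stated assumptions, not Flux's implementation: `Tape`, `capture`, `fetch_exchange_rate`, and `handle_request` are hypothetical names. Every non-deterministic read (the clock, a random ID, a third-party response) goes through the tape. Recording stores the values in call order, and replay hands back the same values in the same order without touching the real sources. Checking labels in order is one simple way to notice when a retry or async path runs in a different sequence than the original.

```python
import random
import time
from typing import Any, Callable, List, Optional, Tuple


class Tape:
    """Hypothetical record/replay tape for non-deterministic values.

    record mode: each capture() runs the real source and appends (label, value).
    replay mode: capture() returns the recorded values in order and never
    calls the real source; a mismatch in order or count is reported as divergence.
    """

    def __init__(self, mode: str, entries: Optional[List[Tuple[str, Any]]] = None) -> None:
        if mode not in ("record", "replay"):
            raise ValueError(f"unknown mode: {mode}")
        self.mode = mode
        self.entries: List[Tuple[str, Any]] = list(entries or [])
        self._cursor = 0

    def capture(self, label: str, source: Callable[[], Any]) -> Any:
        if self.mode == "record":
            value = source()
            self.entries.append((label, value))
            return value
        if self._cursor >= len(self.entries):
            raise RuntimeError(f"replay diverged: nothing recorded for {label!r}")
        recorded_label, value = self.entries[self._cursor]
        if recorded_label != label:
            raise RuntimeError(f"replay diverged: expected {recorded_label!r}, got {label!r}")
        self._cursor += 1
        return value


def fetch_exchange_rate() -> float:
    # Stand-in for a third-party API whose answer can change between runs.
    return round(random.uniform(0.9, 1.1), 4)


def handle_request(tape: Tape, amount: float) -> dict:
    now = tape.capture("clock", time.time)
    request_id = tape.capture("random", lambda: random.getrandbits(32))
    rate = tape.capture("http:exchange_rate", fetch_exchange_rate)
    return {"id": request_id, "at": now, "total": round(amount * rate, 2)}


if __name__ == "__main__":
    recording = Tape("record")
    original = handle_request(recording, 100.0)
    replayed = handle_request(Tape("replay", recording.entries), 100.0)
    assert replayed == original  # same inputs, same IO, same outcome
```

The replayed result matches the original even though the clock, the random generator, and the "API" would all produce different values on a fresh run.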

The resume-after-fix part is the piece I haven't seen before. Most replay tools let you reproduce the bug, but you still have to re-trigger the whole flow manually. How does resumption work in practice: does Flux hold state between the failure and the fix, or is it more like re-running from a checkpoint?

Flux

@mykola_kondratiuk great question — this is actually the core of Flux.

Flux doesn’t just replay from logs or checkpoints.

It records the exact execution state (inputs, external calls, and side effects), so when something fails, you can:

1. Replay the same execution locally

2. Apply a fix

3. Resume from the exact failure point

So you’re not re-running the whole flow — you’re continuing it from where it broke, with the fix applied.

That’s what lets you avoid retriggering things like payments, emails, or webhooks.

Happy to share a deeper breakdown if you’re curious — this is the part we’re most excited about.
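The replay, fix, resume cycle can be illustrated with a step journal. This Python sketch assumes the flow is split into named steps whose results are journaled as they complete; `run`, `StepFailed`, `charge_card`, and the receipt functions are hypothetical and not Flux's API. On resume, journaled steps reuse their recorded result instead of running again, so the card is charged only once even though the whole flow is invoked twice.

```python
from typing import Any, Callable, Dict, List, Tuple

# A named step that reads the shared context and returns a result.
Step = Tuple[str, Callable[[Dict[str, Any]], Any]]


class StepFailed(Exception):
    """Raised when a step fails; `step` names the failure point."""

    def __init__(self, step: str, cause: Exception) -> None:
        super().__init__(f"step {step!r} failed: {cause!r}")
        self.step = step


def run(steps: List[Step], ctx: Dict[str, Any], journal: Dict[str, Any]) -> Dict[str, Any]:
    """Run steps in order, skipping any step whose result is already journaled."""
    for name, fn in steps:
        if name in journal:
            ctx[name] = journal[name]  # completed before the failure: reuse, don't re-run
            continue
        try:
            result = fn(ctx)
        except Exception as exc:
            raise StepFailed(name, exc) from exc
        journal[name] = result  # a real system would persist this (assumption)
        ctx[name] = result
    return ctx


charges: List[str] = []


def charge_card(ctx: Dict[str, Any]) -> Dict[str, str]:
    charges.append(ctx["order_id"])  # side effect: must happen exactly once
    return {"charge_id": "ch_1"}


def receipt_buggy(ctx: Dict[str, Any]) -> str:
    return ctx["customer"]["email"]  # KeyError: the request had no customer


def receipt_fixed(ctx: Dict[str, Any]) -> str:
    return ctx.get("customer", {}).get("email", "no-email")


if __name__ == "__main__":
    journal: Dict[str, Any] = {}
    try:
        run([("charge", charge_card), ("receipt", receipt_buggy)], {"order_id": "o_42"}, journal)
    except StepFailed as err:
        print("failed at", err.step)  # failed at receipt
    # Apply the fix, then resume with the same journal.
    ctx = run([("charge", charge_card), ("receipt", receipt_fixed)], {"order_id": "o_42"}, journal)
    print(ctx["receipt"], charges)  # no-email ['o_42']
```

Keying the journal on step names assumes the fixed code keeps the same step structure; the thread doesn't say how Flux identifies the failure point.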

That makes sense: capturing the full execution state is what makes it actually deterministic vs best-effort replay. I can see that being really valuable for complex distributed calls where the nondeterminism is buried in dependencies.

Flux

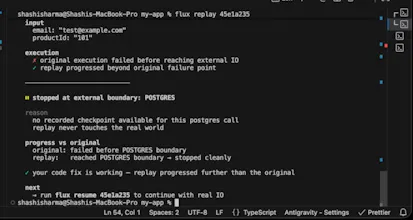

Yeah exactly @mykola_kondratiuk — the key is that it’s not “replay from logs”, it’s replay from a recorded execution boundary.

The tricky part (and what took most of the effort) was isolating side effects vs pure computation.

So during replay:

- external calls are served from recorded responses

- local logic runs normally (so your fix actually executes)

- and state transitions are preserved up to the failure point

For resume specifically — it’s closer to continuing from a checkpoint, but with one important difference:

the system knows which side effects have already happened, so it won’t re-trigger them.

That’s what makes it safe for things like payments/webhooks — you’re continuing the execution, not duplicating it.

Would be interesting to see how it behaves on something with a lot of downstream dependencies — that’s where it starts to shine.
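The split between side effects and local logic can be sketched as a boundary object. The `Boundary` class, its three modes, and the rule of identifying a call by its name and arguments are assumptions for illustration, not Flux's design. External calls go through `Boundary.call`, while the pricing logic runs for real in every mode, so the fix actually executes during replay. A resumed run serves the already-recorded `get_user` call from the recording and sends the webhook exactly once.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple


class Boundary:
    """Hypothetical boundary between local logic and external calls.

    record: Boundary()                      -> calls run for real and are recorded
    replay: Boundary(recorded, replay=True) -> recorded calls are served from the
            recording; unrecorded calls are suppressed (never executed)
    resume: Boundary(recorded)              -> recorded calls are served from the
            recording (not re-triggered); only new calls run for real
    """

    def __init__(self, recorded: Optional[Dict[str, Any]] = None, replay: bool = False) -> None:
        self.recorded: Dict[str, Any] = dict(recorded or {})
        self.replay = replay
        self.suppressed: List[str] = []

    def call(self, name: str, fn: Callable[..., Any], *args: Any) -> Any:
        # Assumption: a call is identified by its name plus its arguments.
        key = f"{name}:{args!r}"
        if key in self.recorded:
            return self.recorded[key]
        if self.replay:
            self.suppressed.append(key)  # past the recording: do not touch real services
            return None
        result = fn(*args)
        self.recorded[key] = result
        return result


real_calls: List[str] = []  # every call that actually left the process


def get_user(user_id: str) -> Dict[str, Any]:
    real_calls.append("get_user")  # stand-in for a third-party API
    return {"id": user_id, "seats": 0}


def post_webhook(url: str, price: float) -> int:
    real_calls.append("post_webhook")  # side effect that must not be duplicated
    return 200


def price_buggy(user: Dict[str, Any]) -> float:
    return 100 / user["seats"]  # ZeroDivisionError when seats == 0


def price_fixed(user: Dict[str, Any]) -> float:
    return 100 / max(user["seats"], 1)


def process(b: Boundary, user_id: str,
            pricing: Callable[[Dict[str, Any]], float]) -> Tuple[float, Optional[int]]:
    user = b.call("get_user", get_user, user_id)
    price = pricing(user)  # local logic always runs, so a fix takes effect
    status = b.call("post_webhook", post_webhook, "https://example.test/hook", price)
    return price, status


if __name__ == "__main__":
    original = Boundary()
    try:
        process(original, "u1", price_buggy)  # fails after get_user, before the webhook
    except ZeroDivisionError:
        pass
    replay = Boundary(original.recorded, replay=True)
    print(process(replay, "u1", price_fixed))  # (100.0, None): webhook suppressed
    resumed = Boundary(original.recorded)
    print(process(resumed, "u1", price_fixed))  # (100.0, 200)
    print(real_calls)  # ['get_user', 'post_webhook']
```

Suppressing unrecorded calls during replay is one possible policy; raising an error at the end of the recording would be another.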

That boundary definition is the hardest part to get right. Define it too wide and you capture too much state, too narrow and replay breaks on side effects you didn't account for. Sounds like you've found the right level of abstraction.

Replaying the exact request locally instead of guessing from logs is huge. I spend way too much time trying to reproduce stuff from production. And it's open source too, which is a plus.

Flux

@gzoo yeah exactly — that “guessing from logs” loop is what we’re trying to eliminate.

The goal with Flux is: take the exact request that failed, replay it locally with the same inputs and side effects, and then actually fix and resume it — instead of re-triggering everything from scratch.

Glad that part resonated 🙌 Curious: what kind of issues do you end up debugging most often?

Being able to replay the exact failing request with the same inputs and IO locally is huge. How does it handle replaying requests that involve third-party APIs that are rate-limited or have changed their response since the original failure?