PromptForge

Update AI prompts without redeploying your app

7 followers

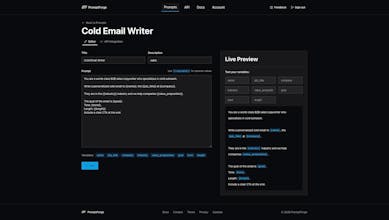

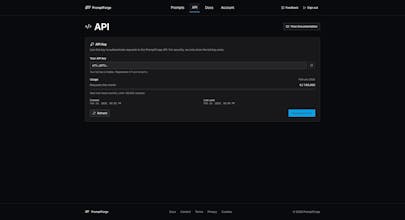

Stop hardcoding LLM prompts. PromptForge lets you write templates with {{variable}} syntax, version every edit automatically, and fetch prompts via REST API. No SDK needed. Pin to a specific version for production stability or always fetch the latest. Works with any LLM: OpenAI, Anthropic, Gemini, Llama, Mistral, and more. Plans from $9/mo. Every plan includes a 14-day free trial.
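To make the {{variable}} syntax concrete, here is a minimal local sketch of how such a template might be filled in. The `renderTemplate` helper is hypothetical and is not part of PromptForge. The handling of whitespace inside the braces and of unknown variables is also an assumption, since the listing does not specify either.

```typescript
// Hypothetical helper for illustration only; not PromptForge's implementation.
// Replaces each {{name}} placeholder with the matching entry in `variables`.
// Assumption: placeholders with no matching variable are left untouched.
function renderTemplate(template: string, variables: Record<string, string>): string {
  return template.replace(
    /\{\{\s*(\w+)\s*\}\}/g,
    (placeholder: string, name: string): string => {
      // Only use keys the caller actually supplied (skips inherited properties).
      if (!Object.prototype.hasOwnProperty.call(variables, name)) {
        return placeholder;
      }
      return String(variables[name]);
    }
  );
}

const rendered = renderTemplate("Translate {{text}} into {{language}}.", {
  text: "hello",
  language: "French",
});
console.log(rendered);
```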

ChatPal

Very cool idea. I struggle with this issue across a lot of the OpenAI API calls in my app (translation, judgements, etc.).

Does it work for a mobile app in React Native with a Vercel backend?

@daniele_packard Thanks!

Translation and judgement prompts are a great use case, especially since those tend to need constant fine-tuning once you see real user input.

And yes, it works with both. PromptForge is just a REST API, so anything that can make an HTTP request can fetch prompts. From your React Native app you could call it directly, or fetch prompts server-side in your Vercel backend and pass them to OpenAI from there. The second approach is usually better since it keeps your API key off the client.

The setup is the same either way: a single fetch call with your prompt ID and any variables you want to interpolate. The response comes back as JSON.
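As a rough sketch of what that single call could look like in TypeScript: the base URL, the path layout, the `Authorization` header, and the response fields below are all assumptions made for illustration, not PromptForge's documented API, so check the real docs for the actual endpoint and parameters.

```typescript
// All names here (BASE_URL, the path layout, PromptResponse) are hypothetical.
const BASE_URL = "https://api.promptforge.example/v1";

interface PromptResponse {
  prompt: string;  // assumed field: the prompt text with variables filled in
  version: number; // assumed field: the version that was served
}

// Minimal shape of fetch that this sketch relies on, so a fake can be injected.
type FetchLike = (
  url: string,
  init?: { headers?: Record<string, string> }
) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

// Builds the request URL. Omitting `version` asks for the latest prompt;
// passing it pins the request to one version (assumed path layout).
function buildPromptUrl(
  promptId: string,
  variables: Record<string, string>,
  version?: number
): string {
  const query = Object.keys(variables)
    .map((key) => `${encodeURIComponent(key)}=${encodeURIComponent(String(variables[key]))}`)
    .join("&");
  const path =
    version === undefined
      ? `/prompts/${encodeURIComponent(promptId)}`
      : `/prompts/${encodeURIComponent(promptId)}/versions/${version}`;
  return `${BASE_URL}${path}${query ? `?${query}` : ""}`;
}

// Fetches a prompt and parses the JSON body. `fetchImpl` is passed in
// explicitly so the same code runs in React Native, on Vercel, or in tests.
async function fetchPrompt(
  promptId: string,
  variables: Record<string, string>,
  apiKey: string,
  fetchImpl: FetchLike,
  version?: number
): Promise<PromptResponse> {
  const response = await fetchImpl(buildPromptUrl(promptId, variables, version), {
    headers: { Authorization: `Bearer ${apiKey}` },
  });
  if (!response.ok) {
    throw new Error(`Prompt fetch failed with status ${response.status}`);
  }
  return (await response.json()) as PromptResponse;
}
```

On a Vercel backend you would pass the global `fetch` as `fetchImpl` and read the API key from an environment variable, then hand the returned `prompt` to your OpenAI call. That keeps the key on the server, in line with the second approach described above.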

Would love to hear how it works with your translation prompts if you give it a try.