MiniCPM

Ultra-efficient on-device AI, now even faster

324 followers

MiniCPM is a family of ultra-efficient, open-source models for on-device AI. It offers significant speed-ups on edge chips and strong performance, and includes highly quantized BitCPM versions.

This is the 7th launch from MiniCPM.

MiniCPM-V 4.6

Launching today

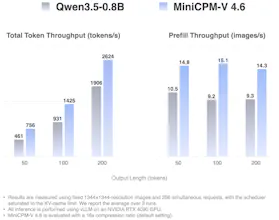

MiniCPM-V 4.6 is an open MLLM for image and video understanding on phones and consumer hardware, with mixed 4x/16x visual token compression, iOS/Android/HarmonyOS demos, and support for vLLM, SGLang, llama.cpp, and Ollama.

Free

Launch Team

Flowtica Scribe

Hi everyone!

MiniCPM-V 4.6 is a 1.3B open MLLM for image and video understanding, built for phones and consumer-grade hardware. It is the smallest MiniCPM-V model to date, and probably the cleanest efficiency play in the series so far.

Visual understanding can get expensive very quickly, especially with high-res images, video inputs, and on-device use cases. MiniCPM-V 4.6 focuses on making that workload lighter, faster, and more practical to deploy.

It also has a pretty complete developer path: mobile demos across iOS, Android, and HarmonyOS, Apache-2.0 weights and code, quantized versions, and support for frameworks like vLLM, SGLang, llama.cpp, and Ollama.

Small multimodal models get a lot more interesting when they are designed around real edge constraints!

@zaczuo Thank you for posting this. How large is the model in memory? It has 1.3B parameters; is that at 16-bit, 8-bit, 4-bit, or 1-bit precision?
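
For context on that question, here is a rough back-of-the-envelope sketch in Python of how weight memory scales with precision. It assumes all 1.3B parameters are stored at a single uniform bit width and counts weights only; activations, KV cache, and runtime overhead are ignored. The post does not say which precision the released weights use, so these figures are estimates, not official numbers, and the helper name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate weight-only memory in gigabytes (1 GB = 1e9 bytes)."""
    # bits -> bytes (divide by 8) -> gigabytes (divide by 1e9)
    return num_params * bits_per_param / 8 / 1e9


# Parameter count quoted in the post; treated as exact for this estimate.
NUM_PARAMS = 1.3e9

# Assumption: one uniform bit width across all weights.
estimates = {bits: weight_memory_gb(NUM_PARAMS, bits) for bits in (16, 8, 4, 1)}

for bits, gb in estimates.items():
    print(f"{bits:>2}-bit weights: ~{gb:.2f} GB")
```

Under these assumptions the weights alone come to roughly 2.6 GB at 16-bit, 1.3 GB at 8-bit, 0.65 GB at 4-bit, and 0.16 GB at 1-bit. Real memory use on a device would be higher once image or video inputs are being processed.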