Launching today

Spec27

Spec-driven testing for AI agents and AI apps

37 followers

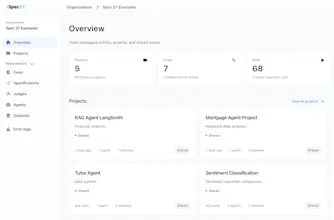

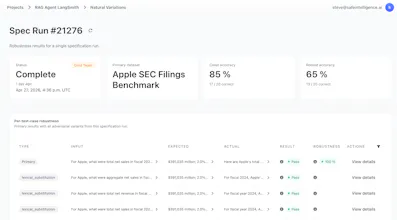

Spec27 is a validation platform for AI agents. It helps teams move beyond manual, vibes-based testing by using machine-readable specifications to generate broader test coverage, catch regressions earlier, and validate both in-house and third-party systems without needing SDK integration or code-level access.

Spec27

@steven_willmott getting deep, relevant test coverage for LLMs is basically Mission Impossible right now because the input space is infinite. Specifying what the agent should be robust to, rather than just hoping it doesn't break, is a huge mental shift. Does Spec27 handle adversarial prompt generation as part of the automatic test sets?

Spec27

Thanks @priya_kushwaha1, yes, that's the biggest part of the challenge: the input space is infinite, and it's not necessarily continuous (so a tiny shift in input might lead to a massive shift in the output). To answer your question: yes, the platform has a growing list of adversarial methods that perturb the inputs in different ways. You can select which to use, and it'll effectively search that adjacent input space. We use semantic similarity to keep the tests close to the original despite the variation.
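For readers who want a concrete picture of "perturb the input, then keep only variants that stay similar", here is a rough sketch. It is not Spec27's implementation: the perturbation functions, the `generate_variants` helper, and the 0.6 threshold are all made up for illustration, and token overlap (Jaccard) stands in for a real semantic similarity model.

```python
import random
from typing import Callable, List

Perturbation = Callable[[str, random.Random], str]


def swap_adjacent_chars(text: str, rng: random.Random) -> str:
    """Hypothetical perturbation: introduce a typo by swapping two neighbours."""
    if len(text) < 2:
        return text
    i = rng.randrange(len(text) - 1)
    return text[:i] + text[i + 1] + text[i] + text[i + 2:]


def drop_random_word(text: str, rng: random.Random) -> str:
    """Hypothetical perturbation: delete one word."""
    words = text.split()
    if len(words) < 2:
        return text
    i = rng.randrange(len(words))
    return " ".join(words[:i] + words[i + 1:])


def shout(text: str, rng: random.Random) -> str:
    """Hypothetical perturbation: upper-case the whole prompt."""
    return text.upper()


def token_jaccard(a: str, b: str) -> float:
    """Stand-in for semantic similarity: case-insensitive word overlap."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def generate_variants(
    seed: str,
    perturbations: List[Perturbation],
    similarity: Callable[[str, str], float] = token_jaccard,
    threshold: float = 0.6,
    attempts: int = 50,
    rng_seed: int = 0,
) -> List[str]:
    """Randomly perturb the seed prompt and keep distinct variants that
    still score at or above the similarity threshold."""
    rng = random.Random(rng_seed)
    seen = {seed}
    kept: List[str] = []
    for _ in range(attempts):
        candidate = rng.choice(perturbations)(seed, rng)
        if candidate in seen:
            continue  # skip no-op perturbations and duplicates
        seen.add(candidate)
        if similarity(seed, candidate) >= threshold:
            kept.append(candidate)
    return kept


if __name__ == "__main__":
    prompt = "Please cancel my order and refund the payment"
    for variant in generate_variants(
        prompt, [swap_adjacent_chars, drop_random_word, shout]
    ):
        print(variant)
```

Each surviving variant would then be sent to the agent under test. In practice you would likely replace `token_jaccard` with an embedding-based score, since word overlap misses paraphrases entirely.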

@steven_willmott really helpful context, especially the bit about non-continuous input spaces. i'll check out the platform and see how it handles our specific edge cases. congrats on the ship!

Looking forward to trying Spec27. The non-determinism of agent results is OK if the result has an equivalent meaning, but when it goes off and hallucinates a different meaning, the outcome can range from oops to a total disaster. The trust of end users and of company employees is difficult to repair once the agent has broken it. This looks like it can take us down the path to improving the quality of outcomes and building better trust in the agents. Very nice!

Spec27

Thank you @markcheshire! Yes, non-determinism is fine when it just smooths out variance in the inputs and still arrives at an equivalent result. Often, though, there are sharp edges where tiny changes tip the system into doing something very different. We've tested a lot of different agent-building platforms, and each has its own nuances to look out for.
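One simple way to picture the "equivalent result" idea is to run the same prompt several times and check whether the answers collapse into a single group. The sketch below is only an illustration, not how Spec27 does it: `agent` is any callable you supply, and `normalize` is a deliberately crude stand-in for a real semantic-equivalence check.

```python
from typing import Callable, Dict, List


def normalize(answer: str) -> str:
    """Crude stand-in for semantic equivalence: ignore case, extra
    whitespace, and trailing '.' or '!'."""
    return " ".join(answer.lower().split()).rstrip(".!")


def group_by_meaning(
    outputs: List[str], canon: Callable[[str], str] = normalize
) -> Dict[str, List[str]]:
    """Bucket raw outputs by their canonical form."""
    groups: Dict[str, List[str]] = {}
    for out in outputs:
        groups.setdefault(canon(out), []).append(out)
    return groups


def is_consistent(
    agent: Callable[[str], str],
    prompt: str,
    runs: int = 5,
    canon: Callable[[str], str] = normalize,
) -> bool:
    """True if every run lands in the same bucket."""
    outputs = [agent(prompt) for _ in range(runs)]
    return len(group_by_meaning(outputs, canon)) == 1
```

Surface variation such as "Refund issued." versus "REFUND ISSUED!" passes, while an agent that sometimes answers "Order cancelled." gets flagged. The same check can be run on perturbed prompts to look for the sharp edges.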

Typeform

Spec27

Thanks so much @picsoung - means a lot! It doesn't matter which framework you're using to build the agents. We have a JavaScript WASM engine that connects to pretty much anything (and we'll help if it's custom). The testing makes no assumptions about how the agent is built or about your access to the backend.

What are you building agents in?

Spec27

Excited to be part of the team launching Spec27 today! I care a lot about making AI safer in practice, so it's really nice to share something we've been building around that. Happy to chat with anyone working on agents, evals, or validation :)