Logic, Inc.

Build and operate fleets of agents.

561 followers

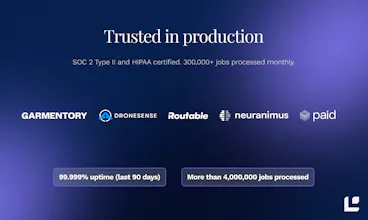

Shipping a real AI agent can mean weeks of wiring up frameworks, prompts, retries, and eval harnesses before you see production. Logic replaces that stack. You write a structured spec that describes what the agent should do, and Logic gives you a fully managed agent, with evals, observability, and versioning built in. Used in production by teams at Routable, Paid.ai, Neuranimus, Garmentory, and DroneSense.

This is the 2nd launch from Logic, Inc.

Logic

Launching today

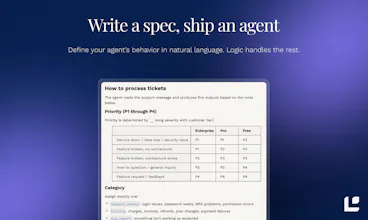

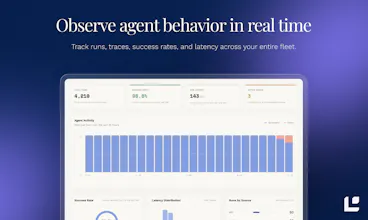

Shipping a real AI agent can mean weeks of wiring up prompts, retries, eval harnesses, and logging before you see production. Logic solves that.

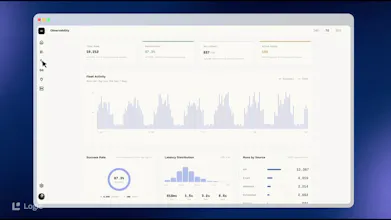

You write a structured spec that describes what the agent should do, and Logic gives you a fully managed agent with evals, observability, model routing, and more built in, ready to be called from anywhere.

Logic, Inc.

Hey Product Hunt. I'm Steve, co-founder of Logic.

When you build an AI agent, the call to the LLM API is the easy part. The hard parts are evals, RAG, observability, prompt refinement, model selection, fallback, cost and latency tuning, system integrations, and giving the agent tools to do useful work in the rest of the world.

Logic gives you an out-of-the-box answer for all of that, while also improving how reliably your agents follow instructions.

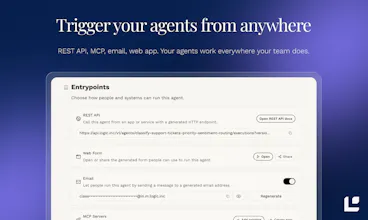

With Logic, you write a simple spec that explains what the agent should do. We give you back a managed agent that can be called via MCP, REST, a web UI, or a dedicated email address. We generate well-typed schemas and synthetic tests, handle versioning, observability, and RAG, and give your agents a "batteries included" tool suite:

Real-World Capabilities: All Logic agents can read 130+ document formats, fill out PDF forms, semantically search your knowledge library, send and receive email, do research, generate and annotate images, and call HTTP APIs.

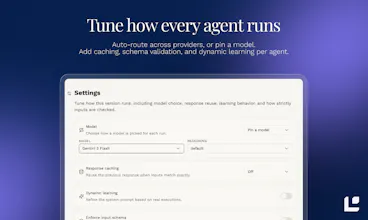

Smart Model Routing: Route across OpenAI, Anthropic, Google, and hardware-accelerated open-source models, with fallback and cost/latency tuning, so you can improve reliability without being locked into one provider.

Deep Integrations: Easily connect to external tools like Linear, Notion, and any MCP endpoint.
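To make the routing-with-fallback idea concrete, here is a minimal Python sketch. It is not Logic's implementation or API: the `Provider` type, the `route_with_fallback` function, the provider names, and the cost figures are all hypothetical, and the providers are stubbed functions rather than real SDK calls. It only shows the general pattern of trying the cheapest option first and falling back when a call fails.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


class ProviderError(Exception):
    """Raised by a provider stub when a call fails."""


@dataclass(frozen=True)
class Provider:
    name: str                    # hypothetical label, not a real model name
    cost_per_call: float         # illustrative relative cost, not real pricing
    call: Callable[[str], str]   # stand-in for a real model client


def route_with_fallback(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Try providers from cheapest to most expensive.

    Returns (provider name, output) from the first provider that succeeds,
    and raises RuntimeError if every provider fails.
    """
    errors = []
    for provider in sorted(providers, key=lambda p: p.cost_per_call):
        try:
            return provider.name, provider.call(prompt)
        except ProviderError as exc:
            errors.append(f"{provider.name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def flaky(prompt: str) -> str:
    # Simulates a provider outage.
    raise ProviderError("timeout")


def stable(prompt: str) -> str:
    # Simulates a healthy provider.
    return f"echo: {prompt}"


providers = [
    Provider("cheap-but-flaky", 0.1, flaky),
    Provider("pricier-but-stable", 1.0, stable),
]
used, output = route_with_fallback("classify this ticket", providers)
print(used, "->", output)
```

In this sketch the cheaper provider fails, so the call falls through to the more expensive one. A production router would also weigh latency and retry transient errors, which the sketch leaves out.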

We make your agents smarter.

When Logic's agent harness was measured against Allen AI's IFBench, one of the hardest public tests for precise instruction following, it scored 83.3%, higher than any model on the Artificial Analysis leaderboard. That is a six-point gain over the same base model (Gemini 3.1 Pro) called directly.

So far, 250+ organizations have automated over 4M agentic tasks with Logic. Common use cases include content moderation, document parsing, data extraction, medical coding, and user onboarding.

Logic is SOC 2 Type II and HIPAA certified. There's a free tier, and paid plans scale with usage.

Jess, my co-founder and CTO, and I will be in the comments. We're excited to see what you build with it, and we'd love to hear what else you wish it could do.

Thanks for taking a look.

The spec-driven approach is the right abstraction here. When I was CTO scaling from 15 to 120 engineers, the biggest pain with internal AI tooling wasn't the LLM call itself - it was everything around it: eval harnesses that nobody maintained, prompt versions scattered across repos, and zero observability into why an agent started failing on Tuesday. The fact that Logic handles model routing, versioning, and evals out of the box means teams can skip the 3-month infrastructure detour and actually ship. Curious how you handle spec evolution - when a team realizes their agent needs a fundamentally different approach mid-production, how smooth is the transition between spec versions?

Logic, Inc.

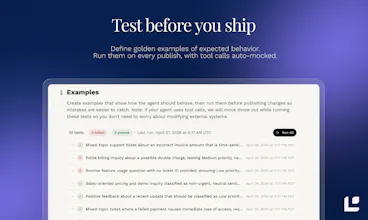

@avrisimon thank you! The transition is actually pretty smooth. Teams update their spec, Logic keeps the API contract stable, runs generated tests and evals, and lets you publish with approval if needed. Each version is immutable. If it regresses, you can roll back in one click and inspect the execution history to see what changed. If the new approach is better, it ships instantly without any code deploy.

It ends up feeling much more like a versioned release process than prompt editing in production. You can even run multiple versions of the agent in parallel.
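For readers who want a mental model of immutable versions with rollback, here is a small Python sketch of the general pattern. It is an illustration only, not Logic's API: `SpecVersion`, `AgentRegistry`, and their methods are hypothetical names, and the spec strings are made up.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class SpecVersion:
    """An immutable snapshot of a spec; fields cannot be reassigned."""
    number: int
    spec_text: str


class AgentRegistry:
    """Hypothetical registry: versions are append-only, 'live' is a pointer."""

    def __init__(self) -> None:
        self._versions: Dict[int, SpecVersion] = {}
        self._live: Optional[int] = None

    def publish(self, spec_text: str) -> SpecVersion:
        # Publishing never edits an old version; it appends a new one.
        version = SpecVersion(len(self._versions) + 1, spec_text)
        self._versions[version.number] = version
        self._live = version.number
        return version

    def rollback(self, number: int) -> None:
        # Rolling back only moves the live pointer; nothing is deleted.
        if number not in self._versions:
            raise KeyError(f"unknown version {number}")
        self._live = number

    def get(self, number: Optional[int] = None) -> SpecVersion:
        # With no argument, return the live version; otherwise a pinned one.
        target = self._live if number is None else number
        if target is None:
            raise LookupError("nothing published yet")
        return self._versions[target]


registry = AgentRegistry()
v1 = registry.publish("Classify support tickets by urgency.")
v2 = registry.publish("Classify support tickets by urgency and product area.")
registry.rollback(v1.number)

print(registry.get().number)    # live version after the rollback
print(registry.get(2).number)   # version 2 stays addressable in parallel
```

Because rollback only moves a pointer, callers pinned to a specific version are unaffected, which is one way several versions could be served side by side.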