Provara

The intelligent, self-hosted LLM gateway

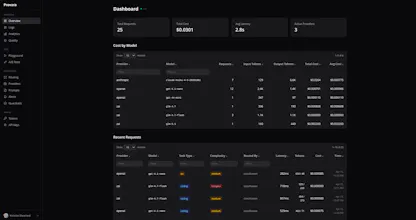

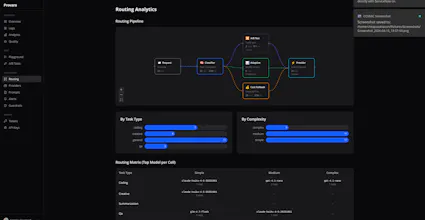

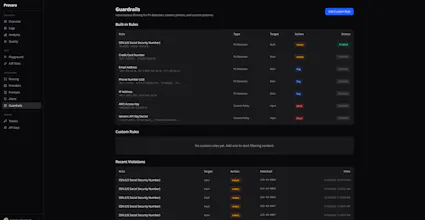

Provara is a gateway that routes LLM requests across providers and learns which model works best for each type of task.

- Adaptive quality-based routing with LLM-as-judge
- A/B testing between models on real traffic
- Full observability
- Guardrails for content
- Spend and latency alerting
- Self-hosted with Docker

Who it's for:

- Teams using multiple LLM providers who want one dashboard
- Anyone optimizing LLM costs without sacrificing quality
- Companies that need self-hosted AI infrastructure

Hey Product Hunt! I'm Nicholas, the builder behind Provara.

I started this because I was frustrated with the "pick one model and hope for the best" approach. Different tasks need different models, but manually managing that routing is a nightmare.

Provara solves this by learning from real usage data which model performs best for coding, creative writing, or simple Q&A. The routing gets smarter over time, with no rules to configure.
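To make the idea of learned routing concrete, here is a minimal sketch of one common way to do it: an epsilon-greedy router that keeps a running average quality score per task type and model. This is an illustration under stated assumptions, not Provara's actual implementation. The `AdaptiveRouter` class, the model names, and the 0-to-1 scores (standing in for what an LLM-as-judge might return) are all hypothetical.

```python
import random
from collections import defaultdict


class AdaptiveRouter:
    """Hypothetical epsilon-greedy router, not Provara's real algorithm.

    Tracks a running average quality score per (task type, model) pair.
    """

    def __init__(self, models, epsilon=0.1, seed=None):
        self.models = list(models)
        self.epsilon = epsilon            # fraction of requests used to explore
        self.rng = random.Random(seed)
        self.totals = defaultdict(float)  # (task_type, model) -> sum of scores
        self.counts = defaultdict(int)    # (task_type, model) -> number of scores

    def mean_score(self, task_type, model):
        n = self.counts[(task_type, model)]
        return self.totals[(task_type, model)] / n if n else 0.0

    def choose(self, task_type):
        # Try any model that has no score yet for this task type first.
        for model in self.models:
            if self.counts[(task_type, model)] == 0:
                return model
        # Occasionally explore so a model that improves can be rediscovered.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.models)
        # Otherwise exploit the best average score seen so far.
        return max(self.models, key=lambda m: self.mean_score(task_type, m))

    def record(self, task_type, model, score):
        # `score` is assumed to be a quality rating in [0, 1], e.g. from a judge model.
        self.totals[(task_type, model)] += score
        self.counts[(task_type, model)] += 1


# Usage with made-up scores; epsilon=0.0 disables exploration for a repeatable demo.
router = AdaptiveRouter(["model-a", "model-b"], epsilon=0.0)
router.record("coding", "model-a", 0.9)
router.record("coding", "model-b", 0.6)
router.record("creative", "model-a", 0.5)
router.record("creative", "model-b", 0.8)
print(router.choose("coding"))    # model-a
print(router.choose("creative"))  # model-b
```

In this sketch each task type converges on its own preferred model as scores accumulate, which is the sense in which routing can improve without hand-written rules.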

I'd love your feedback on what features would make this most useful for your workflow. Thanks for checking it out!