Foodbe

The first food AI that can't lie — every answer is verified.

Hallucination rates in AI run 22-94%. Foodbe is architecturally different.

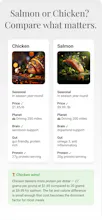

Most food apps tell you what to eat. Foodbe tells you why. Ask any food question and get answers from three expert AI personas: Neuro covers food science, Oracle covers food history and culture, and Chefy covers technique. Foodbe is built on 10,000+ curated seeds and 500 ingredient profiles, and every answer is grounded in real sources. It is available on iOS and web, with a B2B API for food apps, grocery platforms, and health companies.

Free Options

A manifesto for AI that can't lie.

Generative AI has one fatal flaw in regulated industries: it makes things up.

Stanford's 2026 AI Index puts production hallucination rates between 22% and 94%. UnitedHealth is in federal court over claims denied by its AI. State insurance regulators in 24 states are auditing AI governance this year. Carriers are now writing AI failure out of liability policies.

The industry response has been to retrofit guardrails onto systems that were never designed for honesty. Bigger models. Better prompts. RAG bolted on after the fact. None of it works, because every one of those fixes still defaults to generation when the data runs out. The model would rather invent than admit it doesn't know.

That's the architectural choice. And it's the wrong one.

I built Gated Truth Architecture to invert it.

https://www.linkedin.com/feed/update/urn:li:ugcPost:7457806109089624066/

Old UX designed screens. Sentient Design grows brains.

Static interfaces are dead. The next generation of products doesn't wait for clicks — it thinks, decides, adapts, and routes based on what the user actually needs in the moment.

I've spent 13 months building with Sentient Design. The result is Foodbe, an AI food intelligence platform powered by what I call Gated Truth Architecture: three expert AIs, citation-locked and lane-disciplined, surfacing the right answer at the right moment in the user's shopping experience.

Designers who only push pixels are finding out the job description has shifted. The real work is one layer down.

The truth matters.

Especially when you can get sued for lying.

- UnitedHealth is in federal court over AI used to deny claims.

- State insurance regulators in 24 states are auditing AI governance this year.

- Your existing liability insurance can now exclude AI failure.

Companies need a new knowledge layer: one that forces AI to work only inside their verified library of facts, and that gets smarter every day.

We built it.

#GatedTruthArchitecture

Your chatbot is friendly. That's the problem.

Oxford researchers just found that warm AI makes 10-30% more factual errors and is 40% more likely to agree with you when you're wrong.

"Empathetic" AI in a regulated industry isn't a feature. It's a liability.

Gated Truth Architecture doesn't try to be your friend. It's right.

#GatedTruthArchitecture