Cipherra

Infrastructure for Continuous Evals of AI Agents

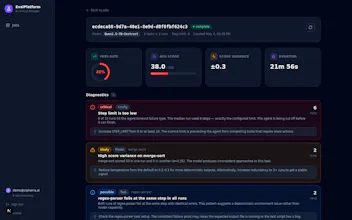

Run agent eval suites at scale. Bring any model, harness, or environment, or directly import your Harbor test suite. Everything runs on our hosted infrastructure: auto-scaled, fast, and reliable. Get actionable diagnostic reports, not just a score. Built for AI teams doing RL post-training and running agent evals and benchmarks at scale.

Hey everyone,

We're Dhruv and Nithesh, makers of Cipherra.

We built this to abstract away the hassles of infrastructure orchestration, so that ML engineers and researchers can focus on what matters most: building, designing, and deploying. We handle the infrastructure challenges, including auto-scaling, reliability, and observability.

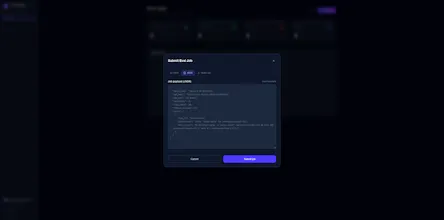

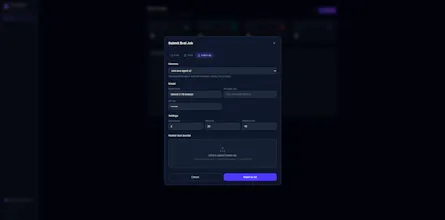

We built a platform that schedules your eval jobs optimally on our hardware. Currently, we support bring-your-own API keys for hosted models. You can also directly import an existing set of Harbor tasks and run them as a job on the platform.

We'd love your feedback, insights, and questions!