Maritime

Deploy and Host AI Agents for $1/month

145 followers

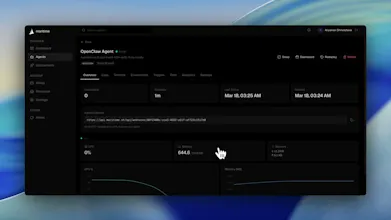

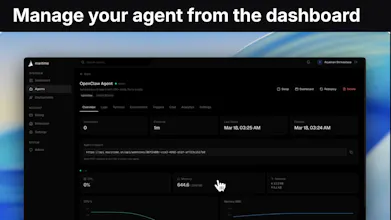

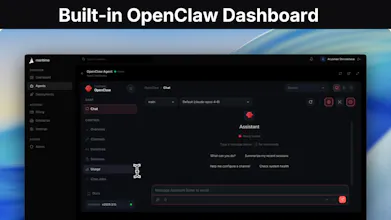

Maritime is a deployment platform for AI agents that lets you run OpenClaw, ZeroClaw, and custom agents in the cloud without managing infrastructure. You can deploy in minutes, run agents reliably, and scale as needed, all through a simple interface starting at $1/month.

Maritime

Hey everyone 👋 Maria here, co-founder of Maritime.

Over the past year, I’ve spent a lot of time working on AI agents through MIT and the OpenClaw ecosystem, and one thing kept coming up again and again:

Building agents is getting easier. Deploying them is still a pain.

A lot of people have something cool running locally, but getting it live in the cloud in a reliable way, without dealing with infra headaches, scaling issues, or paying for a server that mostly sits idle, is still a real blocker.

That’s why we built Maritime.

Maritime is a simple platform for deploying and running AI agents in the cloud. No DevOps, no complicated setup, just get your agent live and keep it running.

Right now, you can:

- deploy OpenClaw, ZeroClaw, or your own custom agents
- run multiple agents at once
- manage everything in one place
- scale without having to think too much about infrastructure

We also wanted to make it genuinely accessible, so pricing starts at $1/month. The whole point was to remove friction for builders who want to experiment, ship, and learn fast.

We’re currently in beta. If this sounds useful, sign up for the waitlist and include a short note on what you want to build. We’re reviewing everyone manually and will reach out to the top users over the next 2 weeks with beta access.

If you’ve ever built an agent and thought, “I don’t want to spend $20 a month just to keep this thing online,” we probably built this for you.

Would really love your feedback: what makes sense, what feels confusing, what’s missing, and what you wish existed. I’ll be around in the comments.

Inrō

curious about how the pricing changes as request volume scales. also, what measures do you have in place for data security?

Maritime

@kshitij11 great question — pricing is mostly driven by usage, not idle time

the base plan covers lightweight agents (~$1/mo), and as request volume increases, we charge based on compute/runtime so you’re only paying when the agent is actually doing work

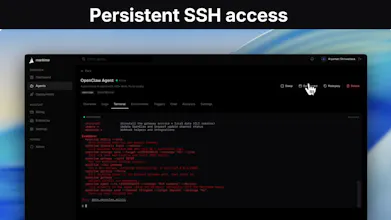

on security: each agent runs in an isolated environment, credentials are encrypted at rest and only decrypted during execution, and everything is ephemeral — no long-running processes holding sensitive data

happy to go deeper if you're thinking about a specific use case

$1/month is wild. What agents can I run on this?

Maritime

@maxwell_timothy Any kind: whether it’s CrewAI, LangGraph, OpenClaw, or ZeroClaw, it’ll run on Maritime

Deployment is one half of the agent infrastructure puzzle; the other is how agents discover and communicate with each other once they're running. We're building AgentDM for exactly that: agent-to-agent messaging over MCP and A2A. Would love to explore how Maritime-hosted agents could use a standard messaging layer to coordinate. Are you thinking about inter-agent communication as a first-class concern?

OpenOwl

$1/month for agent hosting is a no-brainer price point. The biggest friction with running AI agents right now isn't building them, it's keeping them alive and accessible somewhere. This solves that cleanly.

How does it handle scaling when an agent suddenly gets a burst of traffic? Like if someone shares your agent link on Twitter and 500 people hit it at once.

Maritime

@mihir_kanzariya exactly, that was the whole idea. building agents is getting easier, but hosting them reliably is still way more painful than it should be

for bursts, we spin agents up on demand and can run multiple isolated executions in parallel, so one spike doesn’t just route everything through a single always-on process

if 500 people hit an agent at once, the system scales by allocating more execution environments as needed rather than expecting one server to handle everything alone

we’re designing it to be elastic by default, which matters a lot for agents because traffic is usually quiet… until it suddenly isn’t
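For readers who want a concrete picture of the fan-out idea described above, here is a minimal sketch in Python. It is not Maritime's implementation: `run_agent`, `handle_burst`, and the `max_parallel` limit are hypothetical names chosen for illustration, and worker threads stand in for isolated execution environments. The point it shows is that each request gets its own state, and a burst is spread across many parallel executions instead of being queued behind one always-on process.

```python
from concurrent.futures import ThreadPoolExecutor


def run_agent(request_id: int) -> dict:
    """Hypothetical stand-in for one isolated agent execution."""
    # Each call builds its own state, so nothing is shared between requests.
    state = {"request_id": request_id, "steps": []}
    state["steps"].append("started")
    state["steps"].append("finished")
    return state


def handle_burst(num_requests: int, max_parallel: int = 50) -> list[dict]:
    """Fan a burst of requests out across up to max_parallel workers.

    Threads are only an assumption standing in for separate
    execution environments; a real platform would allocate
    containers or sandboxes instead.
    """
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        # pool.map returns results in the same order as the inputs.
        return list(pool.map(run_agent, range(num_requests)))


results = handle_burst(500)
print(len(results))  # prints 500
```

In this sketch the burst of 500 requests is served by at most 50 concurrent workers, and no request ever sees another request's state.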

Agent 37

@mihir_kanzariya @maria_gorskikh1 Very interesting.. how do you handle the fact that assistants like OpenClaw need a single disk and a single gateway? Wouldn't it conflict if the platform creates 500 different gateway endpoints, one per server?

Congrats on the launch! 🚀

For example, if an AI agent skips a step in a workflow or gives an incomplete answer, can you see where exactly it went wrong?