DataKid AI

Data in. Deep insights out. Prompt not needed.

11 followers

Data in. Deep insights out. Prompt not needed.

11 followers

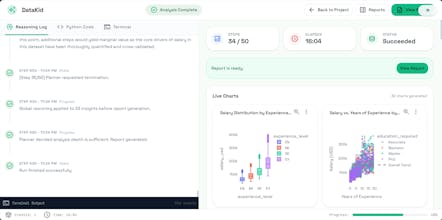

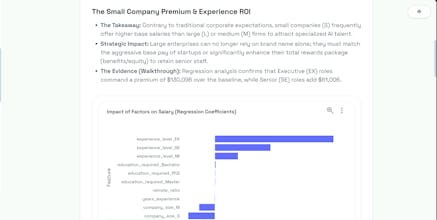

DataKid actually thinks for itself: it scans your data, comes up with smart questions and hypotheses on its own, writes and runs Python code, creates charts, tests assumptions, decides what’s worth digging into next, keeps looping until the insights stop getting better, then stops and writes up a clean, readable report — executive summary, visuals, key findings, and actionable conclusions.

Most AI analytics tools generate answers.

You’re claiming to generate curiosity.

That’s a much harder problem.

Autonomous data exploration sounds powerful — but the real risk is hallucinated patterns dressed as insight.

The real moat won’t be Python execution.

It’ll be epistemic discipline.

How do you ensure the agent isn’t just getting more confident — but actually getting more correct?

@zapuskatel Thanks for the sharp question — you're absolutely right that nobody has fully solved reliable autonomous exploration yet. Generating true curiosity without slipping into confident hallucinations is brutally hard.

That said, we've put serious effort into it, and the results have been pretty solid so far.

Our strongest guardrail: every single insight must be directly grounded in the actual data and code execution results. This alone kills most hallucinations.

The other big win is our staged validation process: here's a dedicated phase where the agent actively tries to deepen/ falsify simple hypotheses with more rigorous checks. We've seen it reliably discard a large chunk of shaky insights on its own — which feels like real progress toward epistemic discipline.

Still early days, and we're iterating fast.

@tigerkid_yang That’s a thoughtful approach — especially the explicit falsification phase.

Most AI systems optimize for generating insights.

Very few optimize for killing weak ones.

Grounding everything in actual execution results is a strong baseline.

Without that, autonomy just amplifies noise.

The staged validation loop is what makes this interesting.

Curious about one deeper layer:

How do you define the stopping condition in practice?

Is it statistical saturation, marginal lift decay, or some heuristic threshold?

Because in real decision environments, over-iteration can be as risky as premature conclusions.

There’s a fine line between disciplined exploration and recursive overfitting.

If you’re getting that balance right, this is less “AI analytics”

and more epistemic infrastructure.

That’s a big deal.