AdsTurbo

Create ads with AI actors that look truly human

705 followers

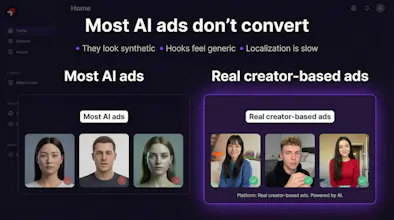

AdsTurbo AI creates UGC-style video ads using AI Talking Actors trained on real creators—so expressions, gestures, and delivery look natural instead of “obvious AI.” Generate on-camera performances fast, test more creatives, and scale ad production without losing the human feel that builds trust and drives conversions.

Hi Product Hunt! 👋

I’m Oscar, co-founder of AdsTurbo AI.

After years in video production and digital marketing, we kept seeing the same gap: AI made ads faster to produce, but most outputs still felt synthetic—flat expressions, stiff delivery, and that “AI vibe” that hurts trust.

So we built AdsTurbo AI around AI Talking Actors trained on real creators—real expressions, gestures, speaking rhythm, and on-camera presence—so the result feels closer to real UGC and real ad performance.

What you can do with AdsTurbo today:

- Create UGC-style video ads with more natural, believable actors
- Produce more variations and test creatives faster
- Scale production without losing the human feel that helps people stop scrolling

We originally built this for our own ad workflows, and after other marketers and brands asked to use it, we decided to launch publicly.

Would love your feedback: What’s the hardest part of making high-performing video ads right now—speed, cost, performance, or “looking real”?

@dr_simon_wallace Thanks Simon — really thoughtful point, and I completely agree.

“Looking real” is only part of the issue. Underneath that, the bigger concern is often brand trust and reputational risk — whether AI-generated creatives feel safe and credible enough for clients to actually use.

That’s a big part of why we built AdsTurbo the way we did: not just to make ad creation faster, but to make the output feel more natural, more controllable, and more usable in real workflows.

And yes — creative variant testing and briefing are exactly where we’re seeing a lot of value early on as well.

Really appreciate you sharing this, and thanks a lot for the support!

Fastlane

@oscar_chong1 @dr_simon_wallace Hyper-realism for talking AI feels sooo close, I think, but silent AI UGC (e.g. wall-of-text-style TikTok videos) is definitely already there!

@oscar_chong1 Congrats on your launch! I guess the "looking real" part is a temporary issue, as technology (including yours) advances super fast. What is key for long-term success and acceptance, though, is transparency. Nobody likes to be fooled, whether in an ad or in a viral "fake animal x does something incredible" video. AI-generated content should ideally be embraced for all the new opportunities and advantages it offers. It should not be "hidden" (and thus ultimately considered fake), imho.

@oscar_chong1 The "looking real" gap is real. In my experience with UGC-style content, the uncanny valley problem isn't just visual -- it's pacing and hesitation. Human creators pause, stumble slightly, look away. Does AdsTurbo model that kind of micro-imperfection, or is the delivery still optimized for polish?

Tobira.ai

@oscar_chong1 @chinedu_chidi_ikejiani The micro-imperfection question above is one I keep thinking about too. Natural delivery isn't just tone — it's the small breaks and off-rhythm moments that make someone feel real. Is that something you're actively modeling, or is the current output closer to polished than imperfect?

@oscar_chong1 Congrats on the launch, Oscar! 🎉 The “AI vibe” problem is real and it’s the thing that kills conversion — people can feel synthetic delivery even when they can’t articulate why. Training on real creators rather than just generating from scratch is the right approach. About to launch OceanMind, an AI-powered breathwork app, and UGC-style creatives are exactly what performs in the wellness space — authentic feel matters a lot when you’re asking someone to trust you with something as personal as their anxiety or sleep. Curious how well it handles softer, more intimate delivery styles versus high-energy DTC ads — that’s the tone we’d need. Will try it!

I guess it's obvious, but I still wanted to ask: since you trained it on real creators, I hope that was done ethically, with their permission, etc., yes?

@zerotox Thanks for asking, and yes, absolutely.

This is something we take very seriously. Our work with real creators is done with permission and through proper agreements, and we’re very mindful about consent, usage rights, and how creator likeness is used.

For us, this isn’t just a legal question — it’s a trust question. If we want AI-generated ads to be sustainable, they have to be built in a way that respects the people behind the content.

Really appreciate you raising this.

Congrats on the launch!!

Where are the top-performing ads sourced from? Are they from our competitors' ad libraries, or does the platform curate them itself based on the major industries?

@himani_sah1 Thanks Himani — great question.

It’s a mix. We source inspiration from publicly available ad examples and broader market signals, then organize and surface them in a way that’s actually useful for marketers by category, industry, and creative angle.

So it’s not just a raw dump of competitor ads, and it’s not purely manual curation either — the goal is to help users discover patterns, winning formats, and useful references faster.

Over time, we’re also working toward making this more structured by major industries and use cases, so people can go from inspiration to production much more efficiently.

How do you handle different brand voices across campaigns? Like if a client wants a more serious tone vs a playful one, can the AI actors adapt to that? Congrats on the launch!

Fastlane

@borrellr_ Feels like having a separate workspace/context per company would be a good solution for this.

@borrellr_ Thanks Ignacio — really appreciate it, and great question.

Yes — that’s a big part of what we’re working toward. Different campaigns need very different delivery styles, so the goal isn’t just to generate an actor video, but to make the output adaptable to different brand voices, tones, and creative directions.

So whether a client wants something more serious, playful, energetic, or polished, we’re building AdsTurbo to make that easier through script direction, actor selection, and creative variation workflows.

That flexibility is really important for performance marketing, since what works for one brand — or even one campaign — may not work for another.

Vozo AI — Video localization

Congrats on the launch! What’s your key differentiator from HeyGen, please?

@jojo_li Thanks JoJo — really appreciate it.

I’d say the key difference is that HeyGen is very strong for avatar/video communication use cases, while AdsTurbo is built more specifically for performance marketing and ad creation workflows.

With AdsTurbo, we’re focused on helping teams:

- create more UGC-style ads that feel natural
- generate more creative variations faster
- move from ad inspiration / winning patterns to production
- build assets that are closer to real ad testing workflows, not just presenter-style videos

So in short: HeyGen is great for avatar-led video creation, while AdsTurbo is more focused on creating ad creatives that are meant to perform.

Really good question — and respect to the HeyGen team as well.

The facial micro-expressions are unsettlingly good - I kept waiting for the uncanny valley to kick in but it never did. Have you tested what happens when you feed it a script with deliberate emotional contradictions (like someone smiling while delivering tragic news)? I'd love to see if the AI can navigate those subtle human inconsistencies that separate convincing from just technically correct.

@lliora Thanks so much, Liora — really thoughtful comment.

We spent a lot of time on facial nuance because, like you said, that’s usually where uncanny valley shows up first. I’m glad it felt natural on your end.

And yes, we’re very interested in exactly that kind of test — scripts with emotional contradiction, suppressed reactions, or expressions that don’t map neatly to the words being spoken. That’s where “technically correct” stops being enough, and true believability starts.

We’ve been pushing in that direction, and your example is honestly a perfect stress test for the system. Really appreciate you surfacing it.

The UGC-style angle is what makes this different from other AI video tools. Real question though: how do advertisers handle the disclosure side? Do the AI actors get flagged on platforms like Meta that require AI-generated content labels?

@greythegyutae Thanks, Gyutae — really appreciate that.

The UGC-style realism is exactly the point for us.

On disclosure, we believe advertisers should be transparent and follow each platform’s latest rules. Our AI actors are built from licensed creator assets with a clear commercial-use path, but we still encourage brands to review platform-specific guidance before publishing.

We want this to be useful for advertisers, and responsible, too.