Launched this week

Contextual Moderation for Chat

AI-powered moderation for safer chat experiences

52 followers

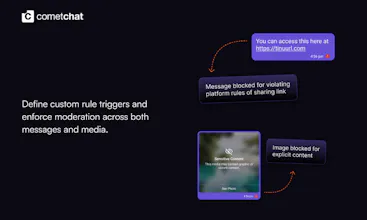

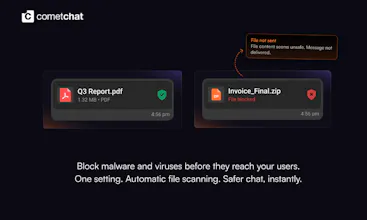

Build safer, smarter chat with CometChat Moderation, the all-in-one safety layer for conversations. Contextual AI moderation, message and media controls, and review and escalation workflows give you everything you need to enforce trust at scale. Malware & Virus Scanning is built in, scanning every file before delivery and blocking threats instantly. Turn it on from the Dashboard in minutes, no extra code required.

CometChat

Hey Product Hunt! 👋

Pourav here from CometChat.

We’ve been investing heavily in CometChat Moderation - a flexible system designed to help developers build safer, more controlled chat experiences from day one.

With Moderation, you can go beyond basic filtering. Think contextual AI that understands conversations (not just messages), controls for text and media, and built-in review and escalation workflows to manage edge cases with precision.

It’s designed to help teams enforce safety, compliance, and platform policies at scale - without stitching together multiple tools.

Malware & Virus Scanning is one part of this system, automatically scanning every file before delivery and blocking threats in real time.

Setup takes just a couple of minutes from the Dashboard. If you’re already using our UI Kits or SDKs, there’s nothing extra to build.

Curious how teams here are approaching moderation today - rules, AI, human review? Would love to hear and answer any questions. 🚀

CometChat

Hey @lakshminath_dondeti, good questions 👋

Quick context: this is for platforms that have user-to-user chat (marketplaces, communities, social, dating, gaming, etc.) - not a customer support tool.

On the regulatory angle - yes, increasingly so. The EU's DSA (fully applicable since February 2024) requires platforms hosting user content to actively moderate illegal content, publish transparency reports, and provide takedown mechanisms. Fines for non-compliance go up to 6% of global annual turnover. The UK's Online Safety Act and India's IT Rules push in similar directions. So for any platform serving these markets, moderation is no longer optional.

What actually gets moderated:

Text - abuse, harassment, scams, spam, CSAM, illegal content

Media - NSFW, violence, deepfakes

Files - malware, phishing

But even outside compliance, most of our customers care about it because abuse and spam destroy chat product retention. Compliance is the floor, not the ceiling.

Hope that helps!