Launching today

LeafMarker

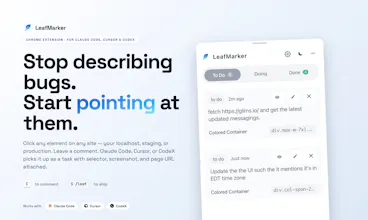

Visual UI feedback for Claude Code, Cursor & Codex

16 followers

Click any element (or snip a region), type what's wrong — LeafMarker writes it as a task in your repo with selector + screenshot + URL. Run /leaf in Claude Code, Cursor or Codex and your AI ships the fix. Visual feedback that lives in your code.

VideoToScreenshots

Hey PH 👋 I'm Surya, maker of LeafMarker.

I built this because I was watching myself waste hours in a loop I ran a hundred times a week.

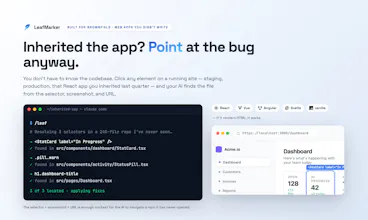

I was rebuilding parts of a brownfield React app with Claude Code. I'd spot something visually off on the page: a button misaligned, a modal trapping focus, a margin off by 8px. Then I'd pause everything to:

1. Take a screenshot
2. Switch to my terminal
3. Paste it into Claude
4. Describe in English what was wrong ("the button under the avatar — it should align left on mobile")
5. Wait while it searched the codebase trying to figure out which button I meant
6. Get a fix that was sometimes the wrong button

Each "this should look slightly different" cost more time than the fix itself was worth. And the friction wasn't the AI — Claude was great at the actual code change. The friction was getting "what I'm pointing at on screen" into "what the AI sees in the codebase."

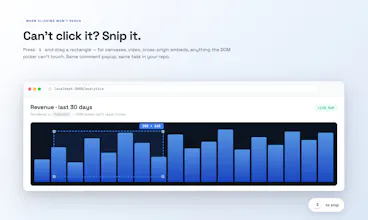

LeafMarker is the bridge. Press C and click any element on the running app — or press S and drag a region when there's no clean element to click (canvases, video, cross-origin embeds). Type a comment.

The task lands in your repo with the selector or screenshot, the URL, and all the context. Run /leaf in Claude Code, Cursor, or Codex. The AI reads it, finds the file, ships the fix, and marks it done.

A few things people have asked me:

"Doesn't Claude Design already let me comment on UI?"

Claude Design is great for starting fresh inside Claude: it works on UI Claude just generated. For brownfield projects it can try to recreate your app from the repo so you can comment on it, but the recreation rarely matches your actual live app. So you end up tweaking a near-replica, not the real thing. LeafMarker works directly on the real app running in your browser. No recreation, no replica. You point at the broken thing on screen, and the AI finds it in your own code.

"Which AIs does it work with?"

Mostly tested with Claude Code on Opus and Sonnet — that's where the workflow shines. Cursor and Codex work too. The output is just JSON + PNG files in your repo, so anything that reads files plays well with it.
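To give a feel for what "anything that reads files" means, here is a minimal TypeScript sketch of a consumer for one of those JSON files. The field names (`comment`, `url`, `selector`, `screenshot`, `done`) are assumptions made up for illustration, not LeafMarker's actual schema, and the rule that a task needs a selector or a screenshot is likewise assumed.

```typescript
// Hypothetical task shape. The real LeafMarker schema is not shown in this
// post, so every field name here is an illustrative assumption.
interface FeedbackTask {
  comment: string;      // what the reviewer typed
  url: string;          // page the feedback was left on
  selector?: string;    // assumed present for element clicks (C)
  screenshot?: string;  // assumed path to a PNG for region snips (S)
  done: boolean;        // assumed completion flag
}

// Parse one JSON file's text; return null if it does not look like a task.
function parseTask(raw: string): FeedbackTask | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null; // not valid JSON
  }
  if (typeof data !== "object" || data === null) return null;
  const d = data as Record<string, unknown>;
  if (typeof d.comment !== "string" || typeof d.url !== "string") return null;

  const task: FeedbackTask = {
    comment: d.comment,
    url: d.url,
    done: d.done === true,
  };
  if (typeof d.selector === "string") task.selector = d.selector;
  if (typeof d.screenshot === "string") task.screenshot = d.screenshot;

  // Assumption: a usable task points at something, via selector or screenshot.
  if (task.selector === undefined && task.screenshot === undefined) return null;
  return task;
}

// Keep only well-formed tasks that are not yet marked done.
function openTasks(rawFiles: string[]): FeedbackTask[] {
  return rawFiles
    .map(parseTask)
    .filter((t): t is FeedbackTask => t !== null && !t.done);
}
```

A tool would feed `openTasks` the contents of each JSON file in the task folder and work through whatever comes back.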

"Does it work on localhost / staging / preview deploys?"

Yes — anywhere you can open in Chrome. Localhost, internal staging, a Vercel preview, your live site.

"Where does the data go?"

Into your repo. Screenshots, selectors, comments — all written locally. Only Google sign-in and Stripe billing touch our servers.

"Free tier — real?"

Yes — 18 lifetime annotations across 3 projects, no time limit. Plenty to find out if it fits your workflow before you decide.

---

Three places this has saved me real time:

Pulling real content from an old site into a new design

Revamping a client site. The new design was ready, but every card still had AI-generated placeholder copy. Their old site — still live — had years of real projects with proper content. I opened the old URL, used LeafMarker to select the card grid, and typed: "Fetch all the projects from this site, keep the new design, replace the placeholder content." That was it. Claude pulled the content across, kept my new layout intact, shipped it. An hour of manual scraping became one comment.

Fixing production bugs without reproducing them locally first

Caught a copy typo and a misaligned button after we'd already deployed. Normally that's: notice on prod → repro on localhost → find the file → fix → push → deploy. With LeafMarker I just opened the live production URL, pointed at the broken text and the misaligned button, and typed the fixes. The comments landed in my local repo (the project folder I had connected). I ran /leaf locally, Claude changed the actual codebase, I deployed. The bug was on prod; the fix happened in code; I never had to repro it locally.

The everyday loop on localhost

Most days it's the boring one: running my dev server, clicking around, leaving little comments. Anything I can't click cleanly (a video preview, a charting canvas, a sliver of UI inside a third-party iframe) I snip as a region instead. Then a single /leaf run later in the day works through everything I queued up. See-it-fix-it goes from minutes to seconds.

---

The question I'd actually love to hear from this community: in your AI-coding loop, where does the screen-to-code translation break down for you? What's the first thing you'd point at?

— Surya 🌿

Congrats Surya. Quick question: When I press C and click, how does it pick the "right" element vs. a wrapper or child? Does it usually nail it out of the box, or is S your recommendation for most cases?

VideoToScreenshots

A click captures the element + its parent chain (think: Button inside Card inside Section), plus the class names, the text on it, and the page URL. The AI uses all of that to find the matching file in your code — names on screen usually mirror names in code. S is for when there's nothing clean to click (canvas, video, embeds) — it just sends a screenshot + URL instead.
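A rough TypeScript sketch of that kind of capture, for anyone curious. This is not LeafMarker's code: the `ElementLike` and `ClickContext` types, the `captureClick` function, the depth limit of 5, and the 80-character text cap are all assumptions for illustration. `ElementLike` is a small structural subset of the DOM `Element` interface, so the same function can run outside a browser.

```typescript
// Minimal structural subset of a DOM element (hypothetical helper type).
interface ElementLike {
  tagName: string;
  className: string;
  textContent: string | null;
  parentElement: ElementLike | null;
}

// What a click might record (hypothetical shape).
interface ClickContext {
  url: string;
  text: string;
  // Clicked element first, outermost captured ancestor last.
  chain: { tag: string; classes: string[] }[];
}

// Walk from the clicked element up through its parents, collecting tag and
// class names, and attach the element's visible text plus the page URL.
function captureClick(el: ElementLike, url: string, maxDepth = 5): ClickContext {
  const chain: ClickContext["chain"] = [];
  let node: ElementLike | null = el;
  while (node !== null && chain.length < maxDepth) {
    chain.push({
      tag: node.tagName.toLowerCase(),
      classes: node.className.split(/\s+/).filter((c) => c.length > 0),
    });
    node = node.parentElement;
  }
  // Cap the text so a large container does not flood the task (assumed limit).
  const text = (el.textContent ?? "").trim().slice(0, 80);
  return { url, text, chain };
}
```

For a button inside a card inside a section, the chain reads button, then card, then section, which is the kind of trail an AI can match against component and class names in the codebase.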